As the threat landscape continues to evolve, cybercriminals are increasingly using artificial intelligence to carry out more complex and coordinated attacks. In response, security teams are using AI-powered cybersecurity tools to address their organisation’s challenges. This is leading to faster detection of threats, more intelligent incident response, and greater resilience against a changing attack landscape. Heading into 2026, AI-enabled security platforms will be a prominent feature of most modern cybersecurity strategies. For both small businesses and large organisations, choosing the right AI security tools could be the difference between a proactive and a reactive approach to keeping their data safe.

This blog post will explore AI security tools of note, as well as what makes an AI security tool effective.

What Makes an AI Security Tool Effective? Key Features to Know

A sophisticated algorithm is not the only thing that makes an AI security tool valuable; what ultimately matters is how well it fits within your security operations. In the broader landscape of AI in cybersecurity, an effective AI security tool should offer autonomous threat detection: it learns what “normal” looks like in your environment and raises a flag when abnormal behaviour is detected.

The tool should also automate incident response to take some of the workload off analysts, and it should learn continuously from new threat data as the attack landscape changes rather than relying on static rules. Scalability is another important piece: the tool should be capable of ingesting data from endpoints, cloud, identity, and network sources.

Finally, explainability is critical. Security teams must be able to trust the decisions made by AI, so a clear explanation of how the AI arrived at its inferences is important, especially when conducting an AI risk assessment.

Here are the key features in more detail:

Behavioural Analytics & Anomaly Detection

These types of tools characterise “normal” behaviour across networks or endpoints and leverage machine learning to detect deviations from that normal behaviour. By focusing on behaviour rather than merely signatures, behavioural analytics applications can catch zero-day or insider attacks that traditional security solutions cannot.
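
To make the idea of a behavioural baseline concrete, here is a minimal sketch in Python. It assumes "normal" can be summarised as the mean and standard deviation of a single metric; the function names and the login counts are hypothetical, and commercial platforms use far richer models than this.

```python
from statistics import mean, stdev

def build_baseline(history):
    """Summarise 'normal' as the mean and standard deviation of past values."""
    return mean(history), stdev(history)

def is_anomalous(value, baseline, threshold=3.0):
    """Flag a value more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # No variation in the history: anything different is a deviation.
        return value != mu
    return abs(value - mu) / sigma > threshold

# Hypothetical daily login counts for one account (illustrative data only).
login_history = [12, 15, 11, 14, 13, 12, 16, 14, 13, 15]
baseline = build_baseline(login_history)

print(is_anomalous(14, baseline))  # a typical day
print(is_anomalous(90, baseline))  # a sudden spike
```

Because the check compares behaviour against a learned baseline instead of a known signature, it can flag activity that nobody has seen before, which is the property this section describes.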

Automated Response and Remediation

A powerful AI security platform does not only raise an alert; it also takes action, for example by isolating an endpoint, killing a session, or triggering a playbook. An automated response reduces dwell time, which limits the extent of damage during a breach.
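
As a rough illustration of how an alert can be mapped to an action, the Python sketch below dispatches alerts to playbooks. Everything in it is an assumption made for the example: the `Alert` fields, the action functions, and the severity threshold are hypothetical, and a real platform would call its own APIs instead of returning strings.

```python
from dataclasses import dataclass

@dataclass
class Alert:
    host: str
    kind: str      # e.g. "ransomware" or "session_hijack"
    severity: int  # 1 (low) to 10 (critical)

# Hypothetical response actions; stand-ins for real platform API calls.
def isolate_endpoint(alert):
    return f"isolated {alert.host}"

def kill_session(alert):
    return f"killed session on {alert.host}"

def open_ticket(alert):
    return f"ticket opened for {alert.host}"

# Map each alert type to the playbook that should handle it.
PLAYBOOKS = {
    "ransomware": isolate_endpoint,
    "session_hijack": kill_session,
}

def respond(alert, auto_threshold=7):
    """Run the matching playbook for severe alerts; otherwise hand off to a human."""
    action = PLAYBOOKS.get(alert.kind)
    if action is not None and alert.severity >= auto_threshold:
        return action(alert)
    return open_ticket(alert)

print(respond(Alert("laptop-42", "ransomware", 9)))
print(respond(Alert("laptop-42", "ransomware", 3)))
```

Keeping a severity threshold in front of the automated path is one simple way to make sure low-confidence alerts still reach an analyst.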

Threat Intelligence & Prediction

By utilising threat feeds, historical data, and real-time telemetry, AI tools can predict the most likely attack vectors, prioritise alerts intelligently, and surface high-risk issues before they escalate. Learn more about AI-Powered Threat Intelligence.
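
The prioritisation step can be sketched in a few lines of Python. The scoring formula, the weights, and the field names below are illustrative assumptions, not how any particular product ranks alerts; the IP addresses come from ranges reserved for documentation.

```python
def risk_score(alert, threat_feed):
    """Combine severity, asset value, and threat-feed matches (illustrative weights)."""
    score = alert["severity"] * alert["asset_criticality"]
    if alert["indicator"] in threat_feed:
        score *= 2  # a known-bad indicator doubles the score
    return score

def prioritise(alerts, threat_feed):
    """Return alerts ordered from highest to lowest risk score."""
    return sorted(alerts, key=lambda a: risk_score(a, threat_feed), reverse=True)

# Hypothetical threat feed and alerts (illustrative data only).
threat_feed = {"203.0.113.7"}
alerts = [
    {"id": "A1", "severity": 5, "asset_criticality": 1, "indicator": "198.51.100.2"},
    {"id": "A2", "severity": 4, "asset_criticality": 3, "indicator": "203.0.113.7"},
    {"id": "A3", "severity": 8, "asset_criticality": 2, "indicator": "192.0.2.10"},
]

for alert in prioritise(alerts, threat_feed):
    print(alert["id"], risk_score(alert, threat_feed))
```

In this example the medium-severity alert on a critical asset rises to the top because its indicator matches the threat feed, which is the kind of context-aware ranking the paragraph above describes.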

Scalability Across Environments

Effective platforms deploy smoothly across cloud, on-premises, and hybrid environments. They ingest data from identities, endpoints, and networks, providing a fully integrated view so your security team is not “blind” in any part of your environment.

Explainability and Transparency

When an AI flags something, your security analysts need to understand the reasoning behind it. Good AI security tools provide context on why the AI reached a conclusion or raised a flag, and help the human analyst determine whether the AI was correct, instead of operating as a black box.

Access our sample pentesting report and discover the level of insight, clarity, and technical depth you can expect from a professional security assessment.

Top 10 AI Security Tools to Protect Your Organisation in 2026

AI security is no longer a concept of the future: many of today’s leading cybersecurity platforms have already embedded enhanced AI capabilities. Listed below are ten security tools that are shaping AI security in 2026:

Darktrace

Darktrace is often compared to a digital immune system: it uses self-learning AI to learn your organisation’s “normal” and identify deviations in real time. Its Antigena module can autonomously respond to threats by quarantining or slowing suspicious activity. Darktrace covers networks, cloud infrastructure, IoT, and email, which makes it a comprehensive solution with adaptable guardrails.

CrowdStrike Falcon / Falcon XDR

CrowdStrike’s Falcon platform is a cloud-native endpoint protection suite powered by AI and behavioural analytics. It can detect zero-day threats, ransomware, and similar attacks by inspecting patterns across endpoints. The XDR version extends protection to workloads, identities, and apps, giving security teams extensive visibility and the ability to hunt for advanced threats.

Vectra AI (Cognito)

The Cognito platform from Vectra employs AI and deep learning to identify hidden threats, such as command-and-control or lateral movement, by analysing network traffic. It also has an identity-based threat detection capability that can detect compromised accounts and insider risks, which helps strengthen AI application security in modern environments.

SentinelOne Singularity

SentinelOne’s Singularity platform leverages AI and behavioural insight for proactive detection and response to threats. Its autonomous response capabilities include rollback, which allows organisations to restore devices to a safe state following a breach. The unified agent provides coverage for endpoints, cloud workloads, and identity.

Check Point Infinity AI Security Services

Check Point uses a range of AI engines, delivered through its ThreatCloud, to tie together its Infinity architecture. This architecture offers centralised management of network, endpoint, and cloud security using AI-based threat intelligence.

Fortinet FortiAI

FortiAI uses deep neural networks to analyse network traffic and identify malware, APTs, and other advanced threats. Because the model is self-learning, it can adapt continuously and reduce reliance on signatures, as well as support threat hunting with forensic insights.

IBM QRadar with AI

IBM QRadar, a leading SIEM tool, now employs AI and machine learning to enhance threat detection and event correlation. The tool can ingest high volumes of security logs, apply AI to identify anomalies, and produce enriched alerts that help SOC analysts work more efficiently.

Microsoft Security Copilot / Defender

Microsoft’s Security Copilot combines large language models with security telemetry to help analysts investigate incidents, summarise threats, and recommend response plans. Security Copilot integrates with Microsoft Defender to enable AI capabilities for incident response, threat prioritisation, and automated investigation workflows.

Microsoft is also building out its AI agent capabilities. Under the Security Copilot offering, Microsoft is developing AI agents to handle routine tasks such as triaging phishing alerts and prioritising vulnerabilities, and to address AI security vulnerabilities more efficiently.

CylancePROTECT

CylancePROTECT leverages AI and predictive models to stop malware before it executes. Unlike signature-based antivirus tools, CylancePROTECT analyses the attributes and behaviour of a file before execution, which allows it to be effective against zero-days.

Abnormal Security

Abnormal Security specialises in email threat protection through the application of behavioural AI to learn normal communication patterns within an organisation. Once that learning process has been completed, Abnormal Security is able to identify anomalies that could indicate phishing attempts, BEC (business email compromise), or compromised accounts. Abnormal Security supports all common email platforms, including Google Workspace and Microsoft 365.

Contact us for a thorough evaluation and professional advice if you’re not sure which AI-powered security products best fit your company’s needs.

Comparison Overview: Which Tool Fits Your Security Needs?

Different AI security tools are built for different environments. Some are endpoint-focused, some are hybrid or cloud-focused, some protect email, some are SIEMs, and so on. The right tool depends on where your risk sits (endpoint, cloud, or email communication). Cost, deployment depth, and the level of automation you want also need to be taken into account. Here is a comparison of the tools to assist with your decision:

| Tool | Primary Strength | Best For | Trade-off / Consideration |
| --- | --- | --- | --- |
| Darktrace | Behavioural anomaly detection, autonomous response | Organisations wanting a self-learning defence for network, cloud, and IoT | Can be complex to tune; may generate false positives initially |
| CrowdStrike Falcon / XDR | Endpoint + workload protection, threat hunting | Enterprises with distributed endpoints and cloud workloads | Requires cloud infrastructure and may need experienced analysts |
| Vectra AI (Cognito) | Network + identity threat detection | Hybrid or complex network architectures | Needs deep visibility into network flows; may require integration effort |
| SentinelOne Singularity | Automated endpoint remediation | Teams that want strong endpoint resilience | Rollback features may need storage/disk space; pricing factors for cloud workloads |
| Check Point Infinity AI | Consolidated security across cloud, mobile, and network | Businesses that want unified control across multiple security domains | The central console may need admin training; depends on the Check Point ecosystem |
| FortiAI | Deep-learning-based threat hunting | Organisations already using the Fortinet security fabric | Performance depends on data quality; may need a GPU or high compute |
| IBM QRadar + AI | SIEM with AI-enriched alerts | Large SOCs that need advanced correlation and alert prioritisation | SIEM setup can be resource-intensive; AI models need proper configuration |
| Microsoft Security Copilot / Defender | AI-powered investigation, automated triage | Enterprises in the Microsoft environment (Azure, MS 365) | Requires integration with the Microsoft stack; LLM usage may have cost or compliance issues |
| CylancePROTECT | Predictive malware prevention | Organisations wanting low-footprint endpoint protection | Does not focus on response; may need to pair with other tools for remediation |
| Abnormal Security | Email behaviour-based threat detection | Businesses heavily reliant on email (e.g., remote teams) | Focused on email; not a full SOC or EDR replacement |

Read also: What Is AI Cloud Security? Definition, Benefits & Challenges

How These AI Tools Complement Penetration Testing Services

AI-powered security tools will help you enhance penetration testing, not replace it. Here’s how:

Continuous Monitoring Between Pentests

AI tools operate around the clock and can find issues that appear after a pentest. Pentesters simulate attacks only at a specific point in time, whereas an AI security platform looks for anomalous behaviour in real time, 24/7, lowering risk during the period between pentests.

Prioritising Findings for Penetration Testers

When AI tools find suspicious or anomalous behaviour, security teams can feed those findings back to the pentesting team. This narrows the pentesters’ focus to the more likely attack vectors.

Validating Remediation Following a Pentest

After a pentest, as teams remediate the vulnerable areas, AI tools can verify whether the remediation worked under both normal and malicious conditions. This “verification loop” helps confirm that remediation efforts are effective.

Augmenting Threat Hunting

Pentesting is typically offensive in nature (finding and exploiting vulnerabilities), while AI security systems operate defensively by conducting threat hunting. The two approaches counterbalance each other: pentesters find vulnerabilities, while AI tools hunt for signs that a threat is exploiting them, often focusing on areas that have just been patched.

Testing Automated Response During Simulated Attacks

During a pentest, you can also simulate a real attack and observe how well your AI tools respond. This tests not only the issues the pentest uncovers but also the AI’s ability to respond under real-world conditions: does it isolate the systems, shut down the session, or contain the threat as expected?

Want to understand the real impact of AI-driven security and pentesting? Explore our in-depth case studies today.

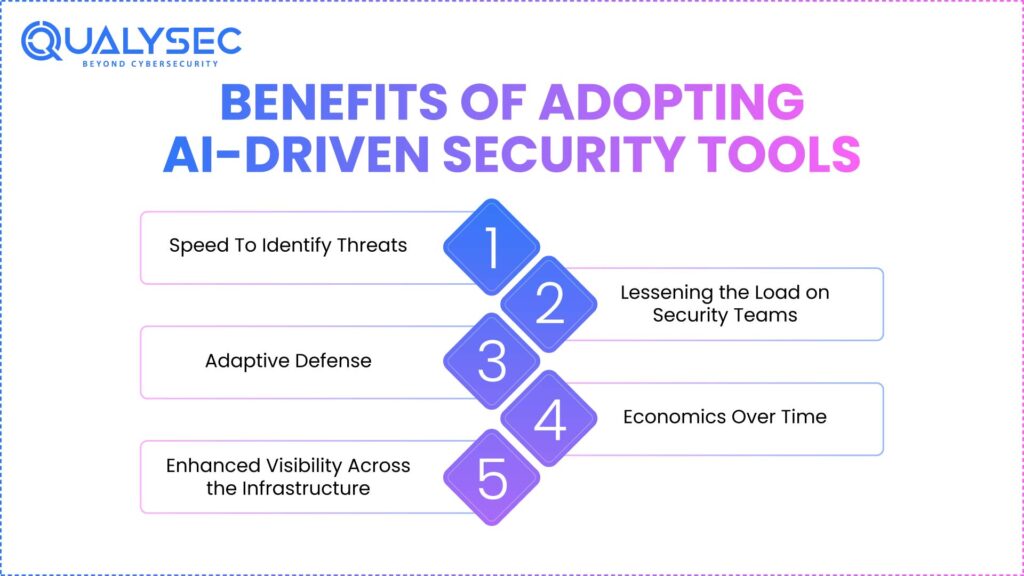

Benefits of Adopting AI-Driven Security Tools in 2026

Security solutions powered by artificial intelligence offer significant benefits, which is why they have become central to modern cybersecurity initiatives. The key benefits are as follows:

Speed To Identify Threats

AI can review large sets of data in real time, much faster than humans can sift through logs. When there is an anomaly or threat, AI can typically identify it quickly so that the incident can be responded to without delay. This reduces the time an attacker has to carry out a breach.

Lessening the Load on Security Teams

AI tools automate alert triage and response, which frees human analysts to concentrate on higher-value activities such as threat hunting, planning, or vulnerability assessments instead of responding to every alert by hand.

Adaptive Defense

AI does not tie detection or response models to fixed rule sets. Models keep learning from fresh telemetry and threat intelligence, so they can adjust as the environment and attack patterns change.

Economics Over Time

Though the upfront cost of many AI security platforms can be high, they often drive down operational costs over time by automating manual work, lowering the cost of incident response, and preventing breaches before they become costly incidents.

Enhanced Visibility Across the Infrastructure

AI tools can often aggregate data from endpoints, identities, cloud workloads, and networks into a single view. This all-encompassing view of the risk helps security teams identify, understand, and mitigate risk across the entire attack surface.

Set up a meeting right away to find out how AI-driven solutions can help you detect dangers more quickly and minimise risks throughout your company.

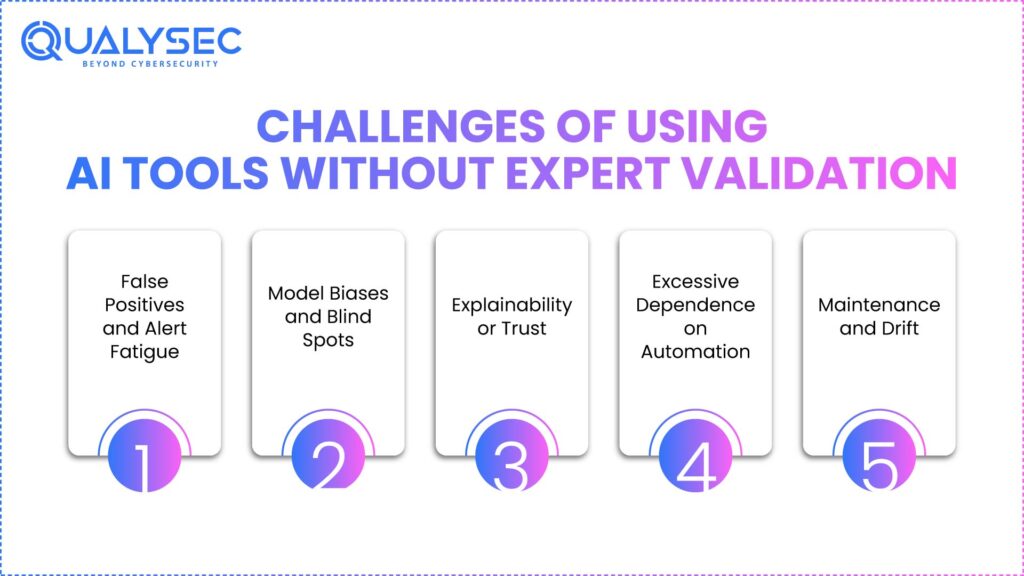

Challenges of Using AI Tools Without Expert Validation

Although security analytics tools have advantages, they are not without their limitations. If not validated and properly managed, AI tools can introduce risk or create false confidence. Here are the primary risks:

False Positives and Alert Fatigue

AI is not 100% accurate. It may flag totally benign anomalies as malicious, especially early in a deployment, and this can generate an overwhelming number of false alerts. When there is a flood of false alerts, the analyst team loses valuable time sorting through them and may lose trust in the tool. Alert fatigue can also set in and desensitise analysts to a legitimate threat.
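
A short worked example shows why even an accurate detector can bury analysts in false alerts when real attacks are rare. The Python sketch below uses hypothetical numbers chosen only to illustrate the arithmetic: one million events a day, 100 of them malicious, a 99% detection rate, and a 1% false positive rate.

```python
def alert_breakdown(total_events, malicious_events, detection_rate, false_positive_rate):
    """Return (true alerts, false alerts, share of alerts that are real)."""
    benign_events = total_events - malicious_events
    true_alerts = malicious_events * detection_rate
    false_alerts = benign_events * false_positive_rate
    precision = true_alerts / (true_alerts + false_alerts)
    return true_alerts, false_alerts, precision

# Hypothetical daily volumes and rates (illustrative only).
true_alerts, false_alerts, precision = alert_breakdown(
    total_events=1_000_000,
    malicious_events=100,
    detection_rate=0.99,
    false_positive_rate=0.01,
)

print(f"true alerts:  {true_alerts:.0f}")
print(f"false alerts: {false_alerts:.0f}")
print(f"share of alerts that are real: {precision:.1%}")
```

Under these assumptions, roughly 99 real alerts sit among about 10,000 false ones, so only around one alert in a hundred is genuine. This is why tuning and expert validation matter as much as the headline accuracy figure.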

Model Biases and Blind Spots

If the data the AI model was trained on was skewed, or if there are gaps in the telemetry, the model might fail to recognise certain cyberattacks, or it might mistakenly flag legitimate, normal behaviour as malicious. Human experts are needed to audit and correct these blind spots.

Explainability and Trust

At times, the security team may hesitate to act because they do not understand why the AI flagged an incident. Being able to explain how an AI-generated alert was reached is invaluable and helps build trust in the incident response process.

Excessive Dependence on Automation

The ability to automate a response is impressive, but if it is not set up carefully from the start, it can produce undesired outcomes, such as disconnecting a critical server or stopping a legitimate process. Human oversight is required to establish safe boundaries.
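
One way to picture a "safe boundary" is a guardrail that sits in front of any automated action. The Python sketch below is a minimal illustration under stated assumptions: the host names, the confidence threshold, and the action labels are all hypothetical, and a real deployment would define them with the people who own the systems.

```python
# Hypothetical list of systems that must never be isolated automatically.
PROTECTED_HOSTS = {"dc-01", "payments-db"}

def decide_action(host, confidence, min_confidence=0.9):
    """Choose how far automation may go for a detection on `host`."""
    if host in PROTECTED_HOSTS:
        # Critical systems always go through a human, whatever the confidence.
        return "require_human_approval"
    if confidence < min_confidence:
        # Not confident enough to act: notify analysts only.
        return "alert_only"
    return "auto_isolate"

print(decide_action("laptop-42", 0.97))
print(decide_action("laptop-42", 0.60))
print(decide_action("payments-db", 0.99))
```

The protected-host check comes first on purpose, so that a very confident detection still cannot take a critical server offline without someone approving it.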

Maintenance and Drift

AI models must be retrained, tuned, and updated as environments shift. Without a subject matter expert monitoring them, models are likely to drift, so their performance degrades and the tool becomes less effective over time.
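
A very simple drift check compares how often a model raises alerts now with how often it did during a reference period. The Python sketch below assumes that a shift in alert rate is a useful warning sign; the function names, the tolerance, and the sample data are hypothetical, and production monitoring would usually track several signals, not just this one.

```python
def alert_rate(flags):
    """Fraction of events that the model flagged."""
    return sum(flags) / len(flags)

def has_drifted(reference_flags, recent_flags, tolerance=0.05):
    """Report drift when the alert rate moves by more than `tolerance`."""
    return abs(alert_rate(recent_flags) - alert_rate(reference_flags)) > tolerance

# Hypothetical outcomes: True means the model flagged the event.
reference = [True] * 2 + [False] * 98   # 2% of events flagged at deployment
recent = [True] * 15 + [False] * 85     # 15% of events flagged this week

print(has_drifted(reference, reference))
print(has_drifted(reference, recent))
```

A drift signal like this does not say what went wrong, only that the model's behaviour has changed enough for an expert to review whether retraining or retuning is needed.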

Why Organisations Still Need Manual + AI-Assisted Pentesting

Even though AI tools now demonstrate advanced capabilities, manual penetration testing remains vitally important. Here are some reasons to use both:

Creative Human Attackers

Though AI is effective in recognising patterns, actual attackers are creative in their approach and may not always obey a predictable pattern of behaviour. Human pentesters are capable of analysing vulnerabilities and threats while thinking like an adversary, utilising inventive tactics that an AI may not have encountered.

Deep Exploitation Skills

Pentesters use exploitation techniques such as chaining vulnerabilities, bypassing business logic, or exploiting a misconfiguration. Attacks like these may not trigger a behavioural AI model or surface during AI-driven analysis, so they can go unnoticed, which demonstrates the depth of technique a human can bring.

Adversarial Testing of AI Models

Pentesters can recreate adversarial attacks, such as prompt injection or model poisoning, to test how resilient your AI security platform is. These attacks can expose weaknesses in the AI itself, showing where an algorithm or model can fail.

Risk Validation for High-Value Assets

For mission-critical systems, a human-led pentest can yield a deeper and better-validated risk assessment than other means of testing. Security teams can combine the outcomes of the pentest with AI alerts to obtain a fuller picture of risk.

Compliance and Reporting

Most regulatory frameworks and audits continue to favour or require third-party pentesting. Human-led test findings, reinforced by behaviours observed through AI monitoring, provide compelling evidence of compliance and governance.

How Qualysec Helps Businesses Validate AI Security Tool Effectiveness

At Qualysec, we focus on measuring and assessing the efficacy of AI-based security tools alongside traditional security controls. We run controlled scenarios and pentests that simulate real-world attack behaviours, including advanced AI-based threats such as model exploitation or data poisoning. Most of the analysis examines how your AI tools detect, respond, and adapt during these exercises.

Based on our analysis, we provide prescriptive recommendations on tuning, training, and model governance, covering false positive rates, explainability, and automation rules. Our goal is to maximise the return on your investment in AI security capabilities, so that you can be confident your systems are resilient, trustworthy, and matched to your risk profile.

You might like to know more about the Top 25 AI Cybersecurity Companies Worldwide

Conclusion

AI security tools mark an innovative shift in security. They allow organisations to detect and respond to threats faster, more adaptively, and more effectively than ever before. Going into 2026, platforms such as Darktrace, CrowdStrike Falcon, and SentinelOne are proving themselves valuable across a wide range of environments, from endpoints to email, network, and cloud.

AI automates detection and response and adds intelligence to both, but it is not a silver bullet. Building a strong security posture still requires experts to validate the tools, tune them continuously, and combine them with manual pentesting. By layering AI security tools, human insight, and expert testing, organisations can stay one step ahead of adversaries with strong, resilient, and future-proof defences.

Secure your organisation with the appropriate AI security tool: speak with our AI Assistant right now.

FAQs

1. What are the best AI security tools for small businesses?

Small businesses should look for an AI security tool that is easy to deploy, cost-effective, and requires minimal in-house expertise. Microsoft Defender for Business, SentinelOne, and Abnormal Security all offer a high level of protection with minimal management overhead. In addition, each of these tools includes automated detection and response that does not require extensive configuration.

2. How do AI security tools integrate with existing infrastructure?

Most AI security platforms integrate through APIs, agents, or cloud connectors. These tools pull data from endpoints, networks, identities, and cloud workloads to provide unified visibility. Integration is usually straightforward and designed to sit alongside your existing firewalls, SIEMs, and endpoint security tools.

3. What is the ROI of implementing AI security tools?

They reduce the time it takes to detect and respond, which lowers the overall cost of a security incident or business disruption. AI tools also reduce manual workload and labour costs while improving the team’s efficiency. Over time, preventing even a single significant incident or disruption delivers a high return on investment.

4. What is a security AI tool?

A security AI tool is a cybersecurity solution that employs artificial intelligence to automatically discover, analyse, and respond to threats. Security AI tools learn normal behaviour, recognise deviations from it, and take action much more quickly than traditional technology. This speed helps to improve an organisation’s overall defence across endpoints, networks, cloud, and applications.

5. How do I choose the correct AI security tool for my organisation?

First, understand your environment, existing tools, attack surface, and internal skillset. The tool should be compatible with your specific infrastructure, whether cloud, hybrid, or on-premises, offer solid automation, and provide clear explainability. Before making a decision, always evaluate features, pricing, and integrations, and seek independent verification through pentesting.
