AI no longer sits quietly in the background of systems. It now runs directly on corporate endpoints. From fraud detection engines operating on fintech employee laptops to AI-assisted diagnostic workstations in hospitals, endpoints have evolved from passive devices into smart decision-makers. This change dramatically alters the threat surface. Traditional endpoint security focused on static threats, but AI endpoints are autonomous, dynamic, and adaptive. That means attackers are no longer simply exploiting software weaknesses. They are probing how AI models learn, behave, and respond under pressure. This is where AI endpoint security stops being an optional improvement and becomes essential.

Compounding the problem, most businesses still test AI endpoints with outdated penetration testing techniques. These techniques concentrate on ports, patches, and malware execution while ignoring AI logic, data integrity, and behavioral manipulation. As a result, businesses believe their endpoint protection solution is sound while attackers quietly explore evasion methods.

In healthcare and fintech, the consequences of such blind spots are serious. A single compromised AI endpoint can lead to patient harm, financial fraud, legal penalties, and a loss of public trust. This is why testing AI-enabled endpoints is no longer optional. It is a fundamental security requirement.

Organizations that want to confirm real-world resilience often start with a focused Qualysec AI endpoint penetration assessment, where testing goes beyond infrastructure and into the behavior of the AI itself.

What is AI Endpoint Security?

AI endpoint security is the application of artificial intelligence and machine learning methods to protect distributed devices, including laptops, servers, mobile devices, and specialized medical systems. Rather than waiting for a known virus to surface, these systems actively examine behavior, spot anomalies, and react to threats in real time. By moving away from the static blacklist approach of the past, AI endpoint protection enables a dynamic defense that evolves with the threat environment.

Unlike conventional antivirus programs that depend on a database of known signatures, AI-powered endpoint security identifies outliers through deep behavioral analysis. For instance, if a medical imaging station suddenly starts transferring 5 GB of encrypted data to an unknown external server at 3:00 AM, the AI flags this as suspicious activity even in the absence of any known virus. This proactive approach is necessary to stop zero-day attacks that worldwide security databases have not yet recorded.
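To make the imaging-station example concrete, here is a minimal sketch of baseline-driven anomaly detection. It assumes a single feature (nightly outbound traffic in MB) and a simple z-score test; the function name `is_anomalous`, the sample numbers, and the threshold are illustrative assumptions, and commercial products rely on far richer models than this.

```python
from statistics import mean, stdev

def is_anomalous(history_mb, observed_mb, z_threshold=3.0):
    """Flag an observation that sits far above the device's own baseline.

    history_mb: past outbound volumes (MB) for the same device and time slot.
    z_threshold: illustrative cut-off, not a vendor default.
    """
    mu = mean(history_mb)
    sigma = stdev(history_mb)  # sample standard deviation, needs >= 2 points
    if sigma == 0:
        return observed_mb > mu
    z = (observed_mb - mu) / sigma
    return z > z_threshold

# Hypothetical 3 AM outbound traffic (MB) for one imaging workstation
baseline = [12, 9, 15, 11, 10, 14, 13, 8, 12, 11]

print(is_anomalous(baseline, 5 * 1024))  # a 5 GB transfer
print(is_anomalous(baseline, 16))        # a slightly busy night
```

No signature is involved: the 5 GB transfer stands out only because it is wildly inconsistent with what this device normally does at that hour.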

Core Components of the AI Defense Ecosystem

- AI-Powered Endpoint Security Engines: These are the main processors that examine millions of data points across the device to find patterns of malicious activity. Acting as the endpoint’s brain, they continually correlate system calls, file changes, and network traffic to maintain a baseline of normal operations.

- Machine Learning Endpoint Security Models: These models estimate the probability of a threat by training on large datasets, both historical and live. As a model learns the specific behaviors of the user and the device, it becomes progressively harder for attackers to blend in with legitimate traffic.

- Endpoint Threat Intelligence Feeds: High-quality data is necessary for a successful endpoint protection system. Real-time updates on worldwide attack patterns from AI-driven intelligence let local endpoints learn from attacks occurring thousands of miles away in a matter of seconds.

- Automatic Containment and Response: The ability to act without human intervention is one of the strongest points of AI security for enterprise endpoints. When a threat is identified with high confidence, the system can automatically isolate the device, kill suspicious processes, and roll back unauthorized file changes.
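As a rough illustration of confidence-gated containment, the sketch below maps a detection's confidence score to a response tier. The `Detection` class, the tier names, and both thresholds are hypothetical choices for this example, not settings taken from any particular product.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    process: str
    confidence: float  # model's estimated probability that the activity is malicious

def choose_response(d: Detection, isolate_at: float = 0.95, kill_at: float = 0.80) -> str:
    """Pick a response tier from the detection confidence (illustrative thresholds)."""
    if d.confidence >= isolate_at:
        return "isolate_device"   # cut the endpoint off from the network
    if d.confidence >= kill_at:
        return "kill_process"     # stop the process but keep the device online
    return "alert_analyst"        # low confidence: leave the decision to a human

print(choose_response(Detection("enc.exe", 0.97)))
print(choose_response(Detection("updater.exe", 0.85)))
print(choose_response(Detection("backup.exe", 0.40)))
```

Where those thresholds sit is exactly what an attacker wants to learn, which is why the most disruptive actions are reserved for the highest confidence tier.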

Although this automated approach greatly increases detection rates, it also introduces a black-box risk. If the AI’s decision-making process is not transparent, skilled attackers may be able to manipulate it. For a thorough look at how these technologies are transforming the sector, consult the 2025 Global Threat Report, which emphasizes the increasing use of AI by attackers.

Why Healthcare And Fintech Are High-Risk Targets

Healthcare and fintech are top targets because AI endpoints in these industries handle highly sensitive data and frequently run with high privileges. In these sectors, the endpoint is sometimes the only barrier between an attacker and a multi-million-dollar payout. Because of the sheer volume of financial data and personally identifiable information (PII) they hold, these industries are always on the radar of organized cybercrime groups and state-sponsored actors.

Healthcare: The Human Cost of Artificial Intelligence Vulnerabilities

Healthcare organizations are under constant threat because they cannot afford even a minute of downtime. An attack on an AI-enabled insulin pump or diagnostic imaging system is a life-threatening incident, not merely a data breach. As AI security for enterprise endpoints becomes commonplace in modern hospitals, attackers are shifting from simple encryption to data poisoning, in which they subtly alter medical information to cause harm.

- AI-Assisted Diagnostics: Modern endpoints assist with real-time diagnosis and patient monitoring. If they are compromised, the integrity of the medical advice they deliver is compromised too.

- Legacy System Integration: Many AI-enabled medical devices interface with outdated legacy systems, creating a weak link. Attackers can use this link to bypass AI-powered threat detection.

- Critical Infrastructure Safety: Unlike downtime in a typical office, healthcare downtime immediately affects patient safety. Hospitals are therefore more likely to pay ransoms to restore AI-driven services.

Fintech: The Accuracy Heist in the Digital Age

In fintech, the incentive is purely financial, and the attacks are highly targeted. AI-powered endpoint security in banking applications governs everything from fraud detection to automated high-frequency trading. Attackers target these endpoints to look for flaws in the ML endpoint security models. Once they know how the AI classifies a transaction, they can craft fraudulent activity that appears entirely ordinary.

- Fraud Detection Logic: Attackers spend months probing AI endpoints to learn fraud detection thresholds and ultimately bypass them with synthetic identities.

- Core System Access: Direct tunnels from fintech endpoints to core banking systems mean a single compromised laptop could result in a full-scale digital bank heist.

- Persistent Adversaries: Because the reward is so great, fintech attackers are highly persistent and use their own endpoint threat intelligence to counter your defenses.

According to IBM’s Cost of a Data Breach Report, healthcare and finance continue to face some of the highest breach costs. To avoid becoming a statistic, companies should make sure their endpoint security system is tested rigorously.

Strategic Defense Begins with a Map: Identifying hidden attack paths in AI workflows requires an expert eye. Don’t leave your endpoints to chance. Book a Strategy Session with Qualysec’s AI Architects to build a threat model that protects your most critical data assets!


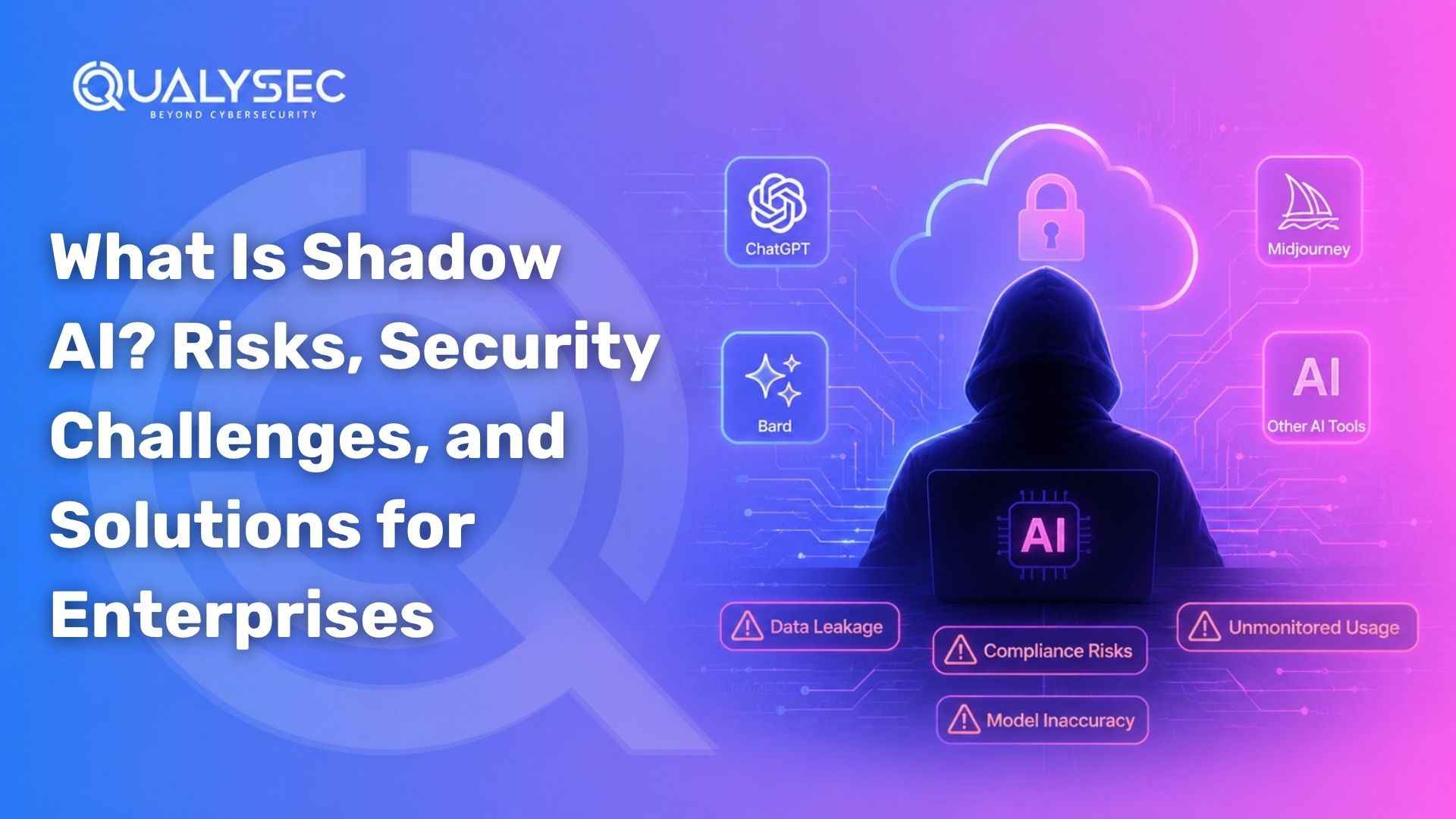

Unique Threat Landscape For AI Endpoints

Introducing AI into your endpoint protection strategy adds more than a shield; it adds a sophisticated system that can itself be misled. While standard malware is comparatively straightforward to identify, adversarial attacks target the reasoning of the AI itself. This creates a dangerous situation in which the system’s intelligence is used against the company.

| Threat Type | Description | Impact on AI Endpoints |
| --- | --- | --- |
| Model Evasion | Modifying malicious files slightly so the AI misclassifies them as “safe.” | Bypasses advanced threat detection using AI entirely. |
| Data Poisoning | Injecting corrupt data into the learning phase of an AI model. | Causes the AI to “learn” that certain attacks are actually normal behavior. |
| Response Abuse | Triggering false positives to force the AI to shut down legitimate services. | Leads to massive operational downtime and “denial of service.” |
| API Manipulation | Attacking the communication layer between the device and the AI cloud. | Can lead to unauthorized commands being sent to the endpoint. |

Vulnerabilities Unique to Artificial Intelligence

- Adversarial Samples: These are inputs crafted specifically to deceive machine learning systems. An attacker may inject noise into a piece of code that a human would not notice but that causes the AI endpoint protection to overlook the threat.

- Model Inversion Attacks: Attackers query the AI repeatedly to reconstruct the data it was trained on. In medicine, this could mean an attacker inferring patient records by observing how the AI responds to particular queries.

- Exploitation of Algorithmic Bias: Attackers may exploit a biased or flawed AI model, hiding their actions among the errors the system is already expected to make.

- Prompt Injection: On endpoints that use natural language interfaces, attackers can inject instructions that circumvent security restrictions, potentially gaining administrative control over the endpoint protection solution.
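The adversarial-sample idea can be sketched with a toy linear classifier. This example assumes a white-box setting in which the attacker knows the weights, which is rarely true in practice; the features, `WEIGHTS`, and `BIAS` are invented for illustration. Each step nudges every feature against the sign of its weight, similar in spirit to gradient-sign attacks.

```python
# Hypothetical linear malware scorer: score = w . x + b, flagged when score > 0.
# Assumed features: payload entropy, suspicious API calls, signed-binary indicator.
WEIGHTS = [2.0, 1.5, -1.0]
BIAS = -2.0

def score(x):
    return sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS

def is_flagged(x):
    return score(x) > 0

def evade(x, step=0.1, max_steps=100):
    """Move each feature a small step in the direction that lowers the score,
    stopping as soon as the sample is no longer flagged."""
    x = list(x)
    for _ in range(max_steps):
        if not is_flagged(x):
            break
        x = [xi - step * (1 if w > 0 else -1) for xi, w in zip(x, WEIGHTS)]
    return x

sample = [1.0, 1.0, 0.0]   # flagged as malicious by the toy model
adversarial = evade(sample)

print(is_flagged(sample), is_flagged(adversarial))
print(max(abs(a - b) for a, b in zip(adversarial, sample)))  # largest per-feature change
```

A small, targeted change to each feature is enough to cross the decision boundary, which is why testing has to probe the model's margins and not only its behavior on obvious malware.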

For more on these emerging threats, the MITRE ATLAS framework offers a comprehensive knowledge base of adversarial tactics and techniques against AI systems. Understanding these is the first step in developing a robust AI-powered endpoint security solution.

Role Of Penetration Testing in AI Endpoint Security

Standard penetration testing is not enough in 2026. Although checking for weak passwords and open ports is still essential, penetration testing for AI endpoint security requires a thorough investigation of the brain behind your defenses. It is not about finding a hole in the fence; it is about seeing whether the AI guard can be coerced or deceived.

Conventional testing is often static and compliance-driven, whereas AI-focused testing is adversarial and dynamic. It simulates a skilled attacker who knows how machine learning endpoint security works. By deliberately feeding the AI misleading inputs and watching its response, testers can find flaws in the detection logic that a conventional scan would never discover.

The Strategic Significance of Artificial Intelligence Testing

- Beyond Signatures: Testing confirms whether your AI-powered threat detection can handle unknown, obfuscated threats that lack a recognized signature.

- Response Validation: Testing verifies that the automatic response (such as isolating a medical device) triggers correctly during an attack and does not cause additional harm beyond the attack itself.

- Eliminating False Sense of Security: Many companies assume their AI is flawless. Penetration testing offers a reality check, exposing precisely where the endpoint protection system breaks down under stress.

- Logic Gap Analysis: Testing finds the gray areas where the AI is uncertain about its decision, which is exactly where attackers prefer to operate.

For an industry perspective on the reasons behind this shift, see the Gartner Guide on AI Security. It projects that more than half of cyberattacks will directly target AI weaknesses by 2026.


Penetration Testing Methodology For AI Endpoints

The rapid adoption of AI in endpoint devices has reshaped the cybersecurity landscape for 2026. As healthcare and fintech businesses move toward AI-powered endpoint security, the techniques used to validate these defenses must change too. Conventional vulnerability scanning is no longer adequate to spot the subtle logic flaws present in machine learning models. For an effective AI endpoint security strategy, penetration testing must be proactive, adversarial, and fully tailored to the company’s operational context.

The modern approach shifts attention from basic software flaws to the integrity of the AI’s decision-making process. It is a methodical approach that combines data science with conventional offensive security. By reproducing the advanced methods of modern threat actors, it verifies that your endpoint protection solution can resist not only malware but also intelligent exploitation of its own underlying algorithms.

1. Threat Modeling for AI-Enabled Endpoints

The first stage in any serious security effort is a thorough threat modeling session tailored to AI components. Unlike traditional systems, AI endpoints have trust boundaries that extend into training data pipelines and the cloud. Threat modeling is used to find the new attack paths that AI opens and that conventional endpoint testing would miss. By charting the path of data through the AI endpoint security system, testers can identify precisely where a smart device is most vulnerable.

This process includes:

- Mapping AI Models, APIs, and Data Flows: We document every point where the endpoint connects with its AI brain, whether that brain runs locally on the device or in a remote cloud environment.

- Identifying Trust Boundaries: We examine the handshakes between the cloud infrastructure, the AI services, and the endpoint to verify that no unauthorized data can cross these boundaries.

- Defining Attacker Goals: We set specific goals for the simulation, including model manipulation, abuse of automated system privileges, and evasion of AI endpoint protection.
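The mapping and trust-boundary steps above can be sketched as a small data-flow table. Every component name, zone, and flow below is a hypothetical example of what a hospital deployment might look like; the point is only that any flow whose two ends sit in different trust zones deserves explicit scrutiny.

```python
# Hypothetical components and the trust zone each one lives in.
ZONES = {
    "imaging_workstation": "endpoint",
    "local_model": "endpoint",
    "inference_api": "cloud",
    "training_pipeline": "cloud",
    "ehr_database": "hospital_core",
}

# Directed data flows: (source, destination, what is sent)
FLOWS = [
    ("imaging_workstation", "local_model", "scan features"),
    ("imaging_workstation", "inference_api", "telemetry"),
    ("training_pipeline", "local_model", "model update"),
    ("local_model", "ehr_database", "diagnostic result"),
]

def boundary_crossings(zones, flows):
    """Return the flows whose source and destination are in different trust zones."""
    return [flow for flow in flows if zones[flow[0]] != zones[flow[1]]]

for src, dst, data in boundary_crossings(ZONES, FLOWS):
    print(f"{src} ({ZONES[src]}) -> {dst} ({ZONES[dst]}): {data}")
```

Each crossing listed becomes a test case: who authenticates the flow, what validates the data, and what happens if the sender is compromised.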

For companies that want to go beyond theoretical risks, Qualysec offers focused threat modeling aligned with the OWASP Top 10 for LLMs and artificial intelligence. Before the first line of code is ever tested, our experts help you see your AI attack surface.

2. Testing AI-Based Detection and Response Mechanisms

AI-driven endpoints often make important decisions automatically, such as killing a suspicious banking process or isolating a compromised medical device. These automated responses, though, are a double-edged sword. An attacker who understands how the AI-based threat detection works may trick the system into paralysis or cause it to ignore an actual breach. Penetration testing examines the threshold at which the AI switches from monitoring to mitigation.

Our testing concentrates on:

- Malicious Behavior Disguise: We wrap malicious payloads in noisy or innocuous-looking data to see whether the machine learning endpoint security fails to classify them correctly.

- Low and Slow Attacks: We emulate APT-style (Advanced Persistent Threat) activity at a pace intended to stay below the AI’s behavioral detection thresholds.

- Response Abuse: We investigate whether an attacker can deliberately trigger the AI’s response mechanism to cause a denial of service (DoS) on vital financial or medical systems.
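One control that response-abuse testing typically looks for is a limit on how many automatic isolations can fire in a short period. The sketch below is a simple sliding-window circuit breaker; the class name `IsolationBreaker` and its limits are assumptions made for illustration, not a description of any vendor's mechanism.

```python
from collections import deque

class IsolationBreaker:
    """Pause automatic isolation when too many isolations fire in a short window."""

    def __init__(self, max_isolations=3, window_seconds=300):
        self.max_isolations = max_isolations
        self.window_seconds = window_seconds
        self._events = deque()  # timestamps of recent automatic isolations

    def allow(self, now):
        """Return True if an automatic isolation at time `now` (seconds) may proceed."""
        # Drop isolations that have aged out of the window.
        while self._events and now - self._events[0] >= self.window_seconds:
            self._events.popleft()
        if len(self._events) >= self.max_isolations:
            return False  # escalate to a human instead of isolating again
        self._events.append(now)
        return True

breaker = IsolationBreaker()
decisions = [breaker.allow(t) for t in (0, 10, 20, 30, 400)]
print(decisions)
```

In a test, a burst of crafted false positives should trip the breaker and route the decision to an analyst, so that an attacker cannot use the AI's own response to take a ward or trading floor offline.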

Operational continuity depends on making sure your AI security for enterprise endpoints responds appropriately under pressure. For deeper technical detail, businesses should consult the MITRE ATLAS Framework, which catalogs real-world adversarial techniques against AI systems.

3. API, Model, and Integration Security Testing

Most AI endpoints are not isolated devices; they rely heavily on APIs to receive updates, log telemetry, and consume global endpoint threat intelligence feeds. These integrations are frequently the weakest link in the chain. An attacker who compromises the API can bypass the device’s local security entirely. Testing in this phase focuses on the plumbing of the AI system, verifying that authorization is correct and that model updates and data transfers are cryptographically secured.

Key testing areas include:

- Authentication and Authorization of AI APIs: We verify that only legitimate endpoints can query the AI model, to prevent model scraping and unauthorized data extraction.

- Model Update Integrity: We check that attackers cannot intercept your endpoint protection solution’s updates and replace them with poisoned or compromised models.

- Telemetry Security: We check whether sensitive system data is exposed during the transfer of AI performance logs or behavioral telemetry.
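For the model update integrity check, a minimal sketch of the underlying idea is an endpoint that refuses to load a model file whose authentication tag does not verify. This example uses HMAC-SHA256 from Python's standard library with a shared demo key; the key, the byte strings, and the function names are placeholders, and a production update channel would more likely use asymmetric signatures so that endpoints never hold the signing secret.

```python
import hashlib
import hmac

def sign_model(model_bytes: bytes, key: bytes) -> str:
    """Compute an HMAC-SHA256 tag over the serialized model."""
    return hmac.new(key, model_bytes, hashlib.sha256).hexdigest()

def verify_model(model_bytes: bytes, key: bytes, expected_tag: str) -> bool:
    """Accept the update only if the tag matches (constant-time comparison)."""
    actual_tag = sign_model(model_bytes, key)
    return hmac.compare_digest(actual_tag, expected_tag)

# Placeholder values for illustration only.
key = b"demo-key-not-for-production"
model = b"serialized-model-weights-v2"
tag = sign_model(model, key)

print(verify_model(model, key, tag))                # untouched update
print(verify_model(model + b"\x00evil", key, tag))  # update modified in transit
```

A tester then tries to get the endpoint to accept an update with a missing, stale, or mismatched tag; any success means a poisoned model could be installed.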

Weak integrations are a main point of entry for modern attackers. The Google Cloud Cybersecurity Forecast 2026 predicts a 40% year-over-year rise in API-centric attacks on AI platforms.

4. Adversarial and Behavioral Attack Simulation

Adversarial testing is the pinnacle of penetration testing for AI endpoint security. It simulates attacks designed specifically to confuse or control the machine learning model behind the security tool. By crafting adversarial examples, malicious inputs altered in ways that look unremarkable to people but that the system classifies as non-threats, testers can locate the exact limits of the AI’s defensive abilities. This is a basic requirement for validating any endpoint threat intelligence system.

Typical simulated scenarios include:

- Evasion Input Generation: Creating custom scripts that the AI-powered endpoint security mistakes for standard system maintenance tasks.

- Alert Fatigue Induction: Triggering a barrage of false positives to distract human analysts so that the real threat goes undetected.

- Model Learning Manipulation: For systems that learn on the job, we simulate data poisoning to slowly teach the AI to ignore specific malicious behaviors over time.

This stage is essential to validating the ROI of your AI endpoint protection investments. Without adversarial simulation, you are merely hoping your AI works; with it, you know whether it does.
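The model learning manipulation scenario can be illustrated with a toy detector that learns "normal" as a moving average. The `OnlineBaseline` class, its parameters, and the numbers are assumptions chosen to keep the example small; the sketch only shows how values kept just under the alert line can drag a self-updating baseline upward until a once-alarming value is accepted.

```python
class OnlineBaseline:
    """Toy detector that learns 'normal' as an exponential moving average."""

    def __init__(self, initial, alpha=0.2, tolerance=1.5):
        self.mean = float(initial)
        self.alpha = alpha          # how quickly the baseline adapts
        self.tolerance = tolerance  # alert when value > tolerance * mean

    def observe(self, value):
        """Return True if the value raises an alert; learn only from accepted values."""
        if value > self.tolerance * self.mean:
            return True
        self.mean = (1 - self.alpha) * self.mean + self.alpha * value
        return False

def poison_until_accepted(detector, target, factor=1.4, max_steps=1000):
    """Feed values just below the alert line until `target` would no longer alert.
    Returns the number of poisoning observations that were needed."""
    steps = 0
    while target > detector.tolerance * detector.mean and steps < max_steps:
        if detector.observe(factor * detector.mean):
            break  # the poisoning value itself was caught
        steps += 1
    return steps

clean = OnlineBaseline(initial=10)
print(clean.observe(100))            # a clean detector alerts on the target value

poisoned = OnlineBaseline(initial=10)
steps = poison_until_accepted(poisoned, target=100)
print(steps, poisoned.observe(100))  # after slow drift, the same value passes
```

A test of this kind checks whether the real system bounds how far its baseline may drift, or reviews drift against a frozen reference model.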

Penetration Testing For Healthcare AI Endpoints

The stakes for AI security in a clinical setting are very high. Healthcare targets include nurse stations, AI-enabled imaging systems (MRI/CT), and even patient-facing mobile apps. These are often high-privilege devices that hold the keys to Protected Health Information (PHI). AI penetration testing checks that your AI security for enterprise endpoints does not fail silently, helping to prevent incorrect medication dosages and unauthorized access to private data.

Important areas of healthcare testing include:

- Unauthorized PHI Access: We check whether the AI endpoint permits lateral movement into the hospital’s main electronic health record (EHR) databases.

- Validating Medical Logic: We verify that an attacker cannot subtly tamper with the AI model to alter reported patient vitals or diagnostic results.

- Privacy Compliance (HIPAA/GDPR): We investigate whether the AI leaks patient information in its output or through insecurely stored local model weights.

Protecting patients’ lives takes more than a firewall. See the HHS Cybersecurity Guidance for updated 2026 guidelines on medical device security.

Zero-Disruption Security for Life-Critical Systems: Healthcare AI requires a delicate balance of deep testing and operational uptime. Partner with Qualysec for Healthcare-Specific Pentesting and ensure your clinical endpoints meet HIPAA standards while remaining resilient against ransomware!


Penetration Testing For Fintech AI Endpoints

Fintech businesses operate in a high-velocity environment where AI is used for everything from high-frequency trading to real-time fraud detection. For these companies, a single compromise could result in millions in unauthorized transactions within seconds. Penetration testing for fintech endpoints concentrates on transaction integrity and the resilience of the machine learning endpoint security against complex financial fraud.

Our financial technology testing emphasizes:

- Fraud Detection Bypass: We attempt to execute microtransactions that stay just below the AI’s detection threshold to see whether money can be siphoned off unnoticed.

- Regulatory Alignment: We confirm that the AI adheres to Know Your Customer (KYC) and Anti-Money Laundering (AML) processes without being fooled by deepfakes or fake IDs.

- Trading System Access: We simulate an attack on an employee workstation to see whether we can gain persistence in automated payment processing or trading systems.
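The fraud detection bypass test can be pictured with a toy rule set. In this sketch, a per-transaction limit alone misses a series of transfers kept just under the limit, while a rolling cumulative check per account catches them. All limits, the window length, and the account name are hypothetical values for illustration, and real fraud models weigh many more signals.

```python
from collections import defaultdict, deque

PER_TXN_LIMIT = 1000.0   # hypothetical single-transaction alert threshold
WINDOW_SECONDS = 3600    # rolling window for the cumulative check
WINDOW_LIMIT = 2500.0    # hypothetical cumulative threshold per account

def flag_transactions(txns):
    """txns: iterable of (timestamp_seconds, account, amount), sorted by time.
    Returns a list of (timestamp, account, reason) alerts."""
    recent = defaultdict(deque)  # account -> deque of (timestamp, amount)
    alerts = []
    for ts, account, amount in txns:
        window = recent[account]
        window.append((ts, amount))
        # Forget transactions that fell out of the rolling window.
        while window and ts - window[0][0] > WINDOW_SECONDS:
            window.popleft()
        if amount > PER_TXN_LIMIT:
            alerts.append((ts, account, "single"))
        elif sum(a for _, a in window) > WINDOW_LIMIT:
            alerts.append((ts, account, "cumulative"))
    return alerts

# Four transfers of 990.00, one minute apart, each under the per-transaction limit.
txns = [(i * 60, "acct-1", 990.0) for i in range(4)]
alerts = flag_transactions(txns)
print(alerts)
```

None of the transfers trips the single-transaction rule; only the cumulative check raises an alert, starting with the third transfer. A tester would then stretch the timing or spread the transfers across accounts to find where this second check stops working too.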

A secure endpoint protection solution is non-negotiable in an industry where trust is the main currency. For further information, see the Cyber Report, which emphasizes the need for regular penetration testing of all AI-driven financial systems.

Secure Your High-Value Financial Perimeters: In the world of fintech, a single logic flaw can lead to a multi-million dollar heist. Validate your fraud detection and trading models with the industry leaders. Consult with Qualysec’s Fintech Security Experts to harden your financial endpoints today!

AI Endpoint Security Vs. Traditional Endpoint Security Testing

To understand why specialized testing is necessary, compare the focus of conventional audits with the demands of the AI age. Conventional testing is usually binary: it either finds a vulnerability or does not. AI testing, by contrast, is about probability and behavior. Without AI-focused testing, organizations develop a false sense of security, believing their intelligent defenses are impregnable because a clean vulnerability scan suggests as much.

| Feature | Traditional Testing | AI Endpoint Testing |
| --- | --- | --- |
| Primary Focus | Known vulnerabilities (CVEs) and patches | Logic flaws, data integrity, and adversarial robustness |
| Attack Vector | Exploiting software bugs and misconfigurations | Tricking the AI model and bypassing behavioral detection |
| Data Integrity | Focused purely on encryption and access | Focused on training data “purity” and model weights |
| Response Type | Block/Allow based on pre-defined static rules | Adaptive response based on perceived real-time behavior |
| Goal | Achieving compliance and “box-ticking.” | Validating true resilience against intelligent adversaries |

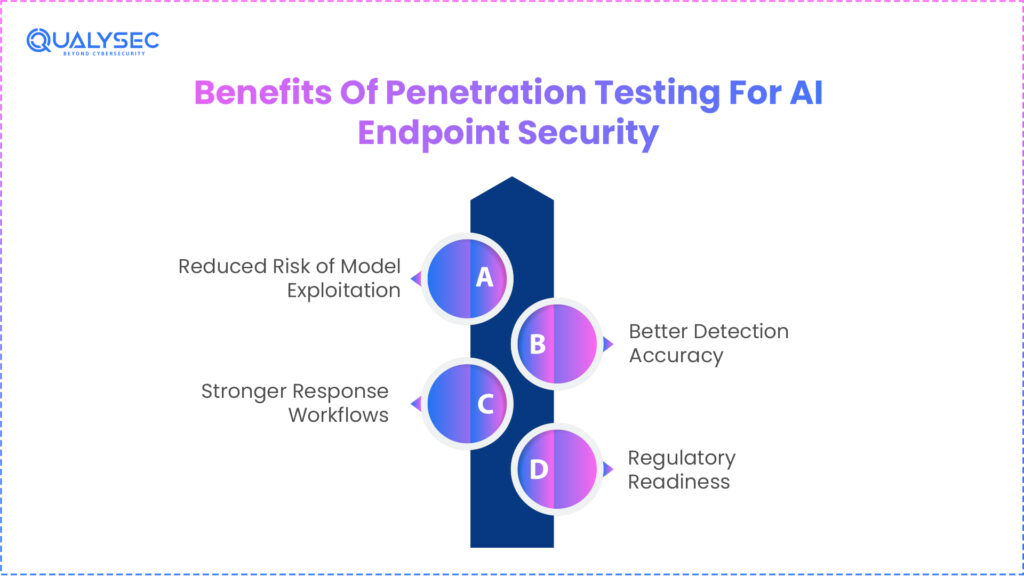

Benefits Of Penetration Testing For AI Endpoint Security

Regular penetration testing for AI endpoint security offers benefits well beyond risk reduction. It gives stakeholders and the board of directors concrete evidence that the company’s digital transformation is being handled responsibly. In both finance and healthcare, where regulations are getting tighter, a thorough pentest report is a valuable asset during audits.

The measurable benefits include:

- Reduced Risk of Model Exploitation: Find the places where a real attacker could evade your AI-based threat detection before attackers discover them.

- Better Detection Accuracy: Fine-tune your machine learning endpoint security to minimize false positives that interrupt legitimate business workflows.

- Stronger Response Workflows: Make sure your automated containment solutions are fast, precise, and non-disruptive.

- Regulatory Readiness: Provide the evidence-based security proof needed for HIPAA, PCI DSS, and the emerging EU AI Act.

Investing in AI-powered endpoint security is a significant financial commitment. Penetration testing helps verify that the investment actually delivers the promised protection.

Choosing The Right Penetration Testing Partner

Not every cybersecurity company is ready for the particular challenges of 2026. A good partner should straddle both worlds: the analytical knowledge of data science and the technical depth of conventional offensive security. When picking a partner to assess your AI security for enterprise endpoints, choose a company that offers a strategic roadmap for AI hardening, not just a list of findings.

A competent partner should:

- Understand AI Architectures: They must be able to describe how data traverses a neural network, not just a subnet.

- Run Manual Adversarial Testing: Automated tools alone will not identify the subtle logic flaws that attackers exploit in AI.

- Provide Actionable Remediation: They should give your developers concrete instructions on how to securely retrain models and fix vulnerabilities.

With specialized knowledge in AI endpoint protection validation for some of the most sensitive industries worldwide, Qualysec is a global leader in this sector.

Conclusion

As 2026 progresses, reliance on AI endpoint security in finance and healthcare will only accelerate. These technologies are a major advance in defense, but they also bring a new class of intelligent threat that conventional testing cannot address. You cannot prepare for the future by relying on the tools of the past. Continuous, adversarial penetration testing is the most reliable way to verify that your smart systems stay smart under attack.

Including expert testing in your endpoint protection program turns your AI from a possible liability into a hardened defense. Whether you are safeguarding critical patient diagnostics or high-stakes financial transactions, the aim is always to stay one step ahead of a determined adversary.

Is Your AI a Fortress or a Backdoor? As we move through 2026, the complexity of attacks will only increase. Ensure your AI-powered defenses are truly impenetrable. Schedule Your Comprehensive AI Endpoint Audit with Qualysec and stay one step ahead of the modern adversary!


FAQs

Q. Why do we use AI in endpoint security?

Conventional tools depend on signatures and cannot scale to match the pace of current threats. AI-driven endpoint security can detect unknown threats in milliseconds, a speed that human analysts cannot achieve manually.

Q. What are AI endpoints?

An AI endpoint is any device or application, from a smartphone banking app to an MRI machine, that uses local or cloud-based AI to carry out its primary functions and manage its own security posture.

Q. What are the three main types of endpoint security?

- EPP (Endpoint Protection Platform): Prevention-oriented, designed to stop threats.

- EDR (Endpoint Detection and Response): Monitoring-oriented, designed to find and investigate threats.

- XDR (Extended Detection and Response): Integration-oriented, linking data across networks and endpoints.

Q. What are the AI-powered endpoint security solutions?

Leading endpoint protection solutions in 2026 combine automated quarantine, behavioral AI for enhanced threat detection, and proactive threat hunting across the company.

Q. Can AI endpoint security prevent ransomware attacks?

Yes. By applying machine learning endpoint security, these systems can detect the behavior patterns of unauthorized encryption and stop the threat before data is lost.

Q. Does AI endpoint security replace EDR or traditional security tools?

No, it enhances them. AI adds intelligence to your current EDR and firewall solutions, lowering false positives and speeding up incident response.
