Today, artificial intelligence determines which transactions are flagged as fraud, which patients get medical attention, and who qualifies for loans. Here, though, is the inconvenient reality: most companies protect artificial intelligence like standard software even while it acts nothing like it. One poisoned data collection or a modified prompt can quietly taint thousands of decisions. This is the reason why security risks of artificial intelligence have risen among the top worries in cybersecurity.

Often forgotten by companies embracing AI based cyber security and automation is the new attack surface produced by every model, dataset, and API. Real-world systems’ play of security risks of artificial intelligence, AI cybersecurity threats, and the AI threat to cyber security, as well as their testing and management, are broken down in this handbook. The year 2026 marks a turning point where AI-powered businesses only have one rational posture: assume compromise.

Good security calls for transcending typical firewalls in favor of behavioral integrity. We must recognize that the system’s brain is now under target as machine learning is incorporated into essential infrastructure. The very instruments created to improve our companies might become the main vectors for their collapse if not purposefully planned.

Why Artificial Intelligence Expands The Cyber Attack Surface

Conventional software adheres to set, determined rules. AI does not. It learns, adjusts, and modifies behavior based on interaction and data. That alone presents fresh security risks of artificial intelligence never covered in the training of security teams. These highlight the growing importance of AI security testing. The perimeter becomes porous and erratic when the logic of an application is inferred from trillions of data points rather than being manually entered.

Every artificial intelligence system depends on many levels: training data, security teams training, feature engineering, model weights, inference pipelines, APIs, plugins, and subsequent automation. Every layer provides attacking access points. A flaw in a typical software stack may result in data theft; a flaw in an artificial intelligence stack can result in logic hijacking, whereby the artificial intelligence still works but helps the opponent with decisions.

Unlike a hacked website, artificial intelligence mistakes are silent and subtle. Rather than bringing the system down, a poisoned training data set subtly alters the model’s perception of reality. A modified prompt just changes the result; it does not set off typical intruder detection alerts. This concealment causes the cybersecurity artificial intelligence risks to run faster than conventional security measures can identify.

The change toward agentic artificial intelligence in 2026 implies that AI systems are acting autonomously-sending emails, handling funds, or overseeing servers. This Excessive Agency is a huge enlargement of the attack surface. Control over the actions the agent is permitted to execute is acquired if an attacker can affect the model’s logic.

Moreover, artificial intelligence has a highly complicated supply chain. Most businesses employ pre-trained models either from open-source repositories or from third parties. Every business using these base models inherits those same AI cybersecurity threats without even realizing it if they are hacked or have backdoors inserted during their first training.

Key Components Of AI Systems That Are Vulnerable To Attacks

Effective management of AI security risks requires, first a definition of precisely what is in danger. An artificial intelligence system is an intricate data and computational ecology, not a monolith. The first step toward a strong defense is knowing the particular flaws of every element.

- Training information: AI’s fuel is training data. The model will behave incorrectly if the data is biased, poisoned, or altered. Open datasets, web-scraped material gathered using proxies for web scraping, and third-party data feeds are often targets for attackers. Once harmful samples start training, the model permanently incorporates them. Artificial intelligence’s influence on every future choice makes this one of the most severe security concerns it creates.

- The Model Architecture: The mathematical brain is a model architecture. Should an attacker get access to the model weights, they can do model extraction—in effect stealing your intellectual property and discovering methods to go around it offline.

- Inference APIs: These gateways through which users (and attackers) engage with the artificial intelligence are known as inference APIs. These APIs are ideal targets for brute-force attacks and prompt injection attacks unless rate limiting and input cleansing are strictly enforced.

- The Infrastructure: Conventional security assessments sometimes miss GPUs and other specialized AI clusters. But the high-performance computing systems necessary for artificial intelligence are themselves targets for cloud exploits and resource-exhaustion threats.

| AI System Component | Primary Vulnerability | 2026 Threat Level |

| Data Pipelines | Data Poisoning / Bias Injection | Critical |

| Model Weights | Extraction / Reverse Engineering | High |

| Prompt Interfaces | Injection / Jailbreaking | Very High |

| Output Handlers | SSRF / Cross-Site Scripting (XSS) | Medium |

Concerned about vulnerabilities in your AI systems? Contact us to assess and secure your AI environment today.

Protect Your AI Systems from Cyber Threats

Common Security Risks In Artificial Intelligence Systems

1. Data Poisoning Attacks in Machine Learning Models

Maybe the most covert of all AI security risks is data poisoning. An attacker may establish a backdoor by introducing hostile samples into the training set. A security AI could be instructed, for example, to disregard any malware containing a certain secret string of bytes. Because the model seems to work flawlessly on all other data, this kind of threat is exceedingly hard to spot.

Data poisoning results from attackers, including altered or destructive data in training systems. This leads the model to pick up erroneous patterns that eventually affect actual life decisions. In finance, this can cause fraud systems to ignore actual fraud. In healthcare, it can lead to misdiagnosis. Content moderation, it can help bad content rise.

Persistence is what makes this one of the most serious artificial intelligence security concerns. Though the model is retrained, the poisoned data is usually used; thus, the threat persists over iterations. This converts one breach into a long-term settlement.

2. Adversarial Attacks on AI Models

Feeding the model inputs that have been mathematically disturbed to produce misclassifications is the aim of this threat. In Digital Camouflage in 2026, attackers wear unique patterns to vanish from AI-monitored surveillance or employ sound in audio to set off unauthorized commands in voice-activated business systems. It creates new liabilities and exposes critical AI security vulnerabilities.

Adversarial attacks take advantage of how models see input. Minor alterations in images, text, or transaction information can lead to major changes in results. A few pixels allow an autonomous vehicle to change a stop sign into a speed restriction indicator. Some characters can turn malware into benign programs.

These attacks directly challenge AI based cyber security measures, including biometric authentication, fraud scoring, and malware detection. Though it seems to be running, the system is really being duped.

3. Model Inversion and Sensitive Data Leakage Risks

Leaks are artificial intelligence systems. Model inversion lets an attacker continually interrogate an API to rebuild the training data. For healthcare and financial companies, where models trained on private patient or client data could unintentionally recall and disclose PII (Personally Identifiable Information) when prodded the right way, this is a nightmare.

You might like to read about AI Cybersecurity in Healthcare.

4. Prompt Injection Attacks in LLM-Powered Applications

Prompt injection is the SQL injection of the artificial intelligence age. Attacks utilize jailbreaks to fool the model into bypassing its security filters. Indirect prompt injection, which occurs when an artificial intelligence scans a compromised website or email and then follows hidden commands contained therein to steal the user’s data, has developed into a major AI threat to cyber security in 2026.

By hiding commands in user input, documents, or web content, prompt injection lets attackers override system instructions. This can compel artificial intelligence to show sensitive information, run tools, or circumvent safety protocols.

Particularly for chatbots and copilots linked to internal systems, this is among the most rapidly developing security threats artificial intelligence poses.

Qualysec conducts prompt Injection testing to find these hazards before intruders do.

5. API and Integration Vulnerabilities in AI Systems

Usually found in processes through APIs, artificial intelligence is seldom used as a solo tool. An AI might generate a malicious script that the underlying system then executes if these integrations don’t have adequate output validation. This transfers the threat from the AI model to the core corporate network, producing either Remote Code Execution (RCE) or Server-Side Request Forgery (SSRF).

Many times, artificial intelligence APIs do not have appropriate rate restrictions, output filtering, and authentication. This lets attackers misuse automation, perform cost-based denial attacks, and gather data. These constitute major artificial intelligence threats against cybersecurity concerns.

As attackers would, Qualysec tests artificial intelligence APIs to show how easily they could be misused.

6. Insider Threats and Unauthorized Model Access

The worth of a proprietary artificial intelligence model is enormous. Malicious or unintentional insider threats present a major danger. An employee revealing the system prompt or the fine-tuning information provides competitors, or hackers blueprint to break down the firm’s AI protections. That’s why making continuous AI threat intelligence crucial for early detection of misuse.

Adversarial attacks take advantage of the model’s interpretation of input. Small adjustments to images, text, or financial information can lead to major changes in results. A few pixels can change an autonomous vehicle’s speed restriction marker from a stop sign. Few people may transform malware into secure software.

These attacks directly undercut artificial intelligence-based cybersecurity systems, including malware detection, biometric authentication, and fraud assessment. Though it seems to be running, the mechanism is really being manipulated.

Identify hidden weaknesses in your AI models and applications before attackers do. Contact us for a comprehensive AI security assessment.

Get Your Free Security Assessment

AI Security Risks Across The Model Lifecycle

AI security does not begin when a model is released. It starts when the second data is gathered and goes on for the duration the system is functioning. Most companies only consider security when they are rolled out, therefore leaving major blind spots throughout the lifespan. These blind spots provide the most damaging artificial intelligence security hazards.

Unlike conventional programs, artificial intelligence evolves as it learns. This means that flaws change over time. After new data, new prompts, or new user behavior, a model that was secure at debut might turn hazardous. This is why the security risks of artificial intelligence have to be constantly assessed instead of by means of one-time audits.

Data poisoning during training to prompt abuse in production includes various AI cybersecurity hazards introduced throughout the lifecycle. Attacks need not sacrifice infrastructure. They only seek the most feeble stage in life.

Qualysec’s AI penetration testing is meant to map these lifecycle risks end-to-end, therefore allowing companies to see their most exposed areas.

Lifecycle Risk Overview

| Lifecycle Stage | Risk Type | Security Action Item |

| Data Collection | Poisoning | Verify data provenance and integrity. |

| Training | Inversion | Apply Differential Privacy (DP) techniques. |

| Testing | Evasion | Perform adversarial “Red Teaming.” |

| Deployment | Injection | Implement robust input/output guardrails. |

| Monitoring | Model Drift | Set alerts for behavioral anomalies. |

1. Risks During Data Collection and Training

Training data shapes the way artificial intelligence operates. Attackers control the model if they control the data. This is the cause of data poisoning being among the most serious security risks of artificial intelligence.

Web scraping, open datasets, and external vendors are used by most businesses. Rarely are these sources checked. Malicious samples can be inserted by attackers such that the model picks up incorrect correlations, backdoors, or prejudiced behavior. This data becomes incorporated in every subsequent revision of the model once it is utilized.

Particularly perilous for AI based cyber security solutions. Should a threat detection model be poisoned, it may learn to disregard genuine attacks while marking innocent activity. That lets assailants move unhindered.

2. Deployment and Runtime Security Threats

Attacks move from data to interaction once artificial intelligence models start running. This is where artificial intelligence cybersecurity threats take off. Runtime occurrences include quick injection, API misuse, and automation hijacking.

Especially vulnerable are applications driven by LLM. Frequently linked to internal databases, ticket systems, payment gateways, and cloud storage. One constructed prompt might induce the AI to find or alter sensitive information.

This is the reason why cybersecurity’s artificial intelligence threat is today related directly to business processes. Attackers are not cracking servers anymore. They are telling artificial intelligence algorithms to conduct the hacking for them.

Qualysec assesses live AI deployments by simulating actual prompt injection, tool misuse, and API abuse so companies can see their actual danger.

Explore LLM security to help protect applications that are vulnerable due to being driven by LLMs.

3. Post-Deployment Model Drift and Exploitation

AI fluctuates. The model moves over time as a result of user behavior, fresh data, and feedback loops. Attacks can either cause this drift, or it can be natural.

Attackers can gradually feed inputs that move the model toward dangerous behavior without setting off alerts. Over months or weeks, the artificial intelligence becomes exploitable, biased, or incorrect. This makes drift one of the most silent security risks of artificial intelligence.

Organizations have no way to know when this occurs without ongoing testing. Qualysec, hence, suggests regular artificial intelligence red teaming and penetration testing to identify these changes before damage happens.

Real-world Examples Of AI Security Breaches

The security risks of artificial intelligence are no longer academic. High-profile events in 2025 and early 2026 have shown that even the most cutting-edge systems are susceptible:

The LLM Leak (2025): An inside chatbot of a worldwide technology company was tricked by a prompt injection attack on the LLM Leak (2025). Hackers compelled the bot to disclose the company’s Q3 financial forecasts before they were public by asking it to convert a hidden base64 string.

The “Truman Show” Fraud (2026): Hackers industrialized investment fraud with the help of artificial intelligence-based cybersecurity solutions in the Truman Show Fraud (2026). Millions in losses resulted from their invention of thousands of deepfake identities that successfully circumvented KYC (Know Your Customer) policies at several large financial institutions.

Autonomous Vehicle Sabotage: Researchers showed how pixel-thin stickers—Digital Dust—could cause highway AI systems to misinterpret lane markings, therefore confirming the cybersecurity threat’s reach into the real world.

The 2025 World Economic Forum Global Cybersecurity Outlook states that AI-powered attacks have grown 58% year-over-year, therefore making proactive testing even more important.

Business Impact Of AI Security Vulnerabilities

Should security risks of artificial intelligence present themselves, the damage is not confined to the IT departments. It impacts every department.

Fraud, data breaches, and the cost of resetting compromised models all add to financial loss. Restoring a poisoned artificial intelligence system can take months and millions of dollars.

Even more difficult to retrieve is reputation. Customers lose faith when artificial intelligence systems expose data or make bad judgments. Much of the time, this loss of trust is everlasting.

Paying attention are also regulators. Under laws like GDPR and the EU AI Act, firms have to show they are managing AI security issues. Failing to act will cause legal action and significant penalties.

Qualysec thus collaborates with compliance teams as well as engineers to deliver artificial intelligence risk evaluations meeting both security and regulatory standards.

Role Of Penetration Testing In Identifying AI Security Risks

Traditional penetration testing targets web apps, servers, and networks. It does not evaluate the behavior of artificial intelligence. This causes businesses to overlook the most serious security risks of artificial intelligence.

AI penetration testing looks at how models respond to harmful data, triggers, and abuse. It assesses the AI’s susceptibility to trickery, manipulation, or enforced information release.

This covers:

- Examination of data retrieval

- Limiting hostile inputs

- Abusing APIs and automatic operation

- Pushing dangerous choices

This strategy unveils artificial intelligence cyberattacks that no vulnerability scanner will ever detect.

Qualysec helps companies understand how their artificial intelligence may be used, in addition to where it runs, by means of this kind of testing.

Look at how penetration testing uncovers hidden AI vulnerabilities. Download the sample AI Pentesting Report now.

Get a Free Sample Pentest Report

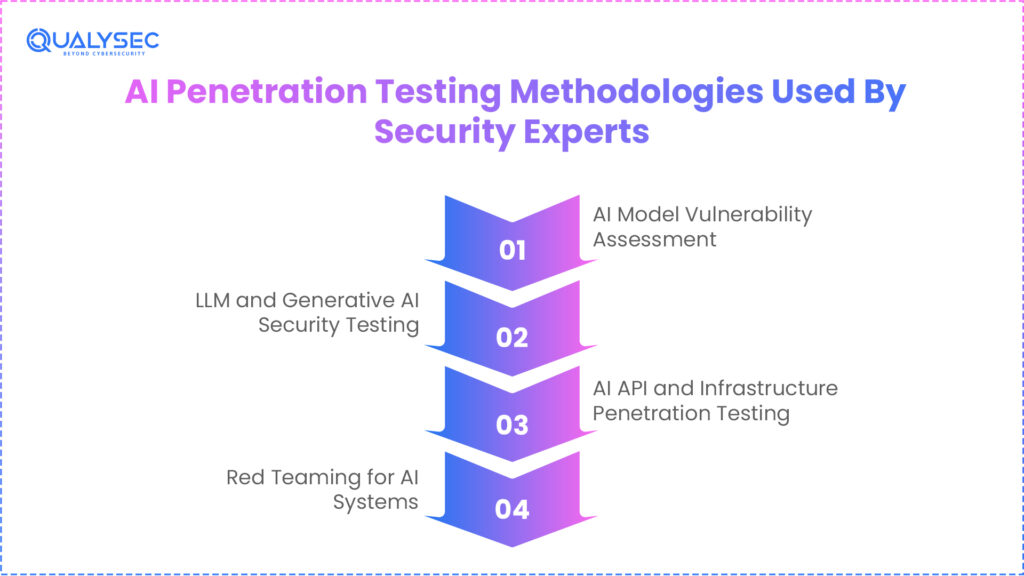

AI Penetration Testing Methodologies Used By Security Experts

Qualysec and other industry pioneers adopt a multi-tiered strategy to address the particular security threats of artificial intelligence:

1. AI Model Vulnerability Assessment

We explore the model’s design to learn how it responds to out-of-distribution data. This determines whether the model will crash or leak data given unanticipated inputs.

This entails testing the model’s reaction using aberrant, flawed, and opposing data. Attackers employ these tactics to discover logic flaws, backdoors, and hazardous conduct. Testing this exposes serious artificial intelligence security issues inside the model itself.

2. LLM and Generative AI Security Testing

This calls for extreme red teaming of prompts. We attempt to circumvent safety guardrails, gather system instructions, and compel the model to produce forbidden material.

This emphasizes fast injection, jailbreaks, hallucination abuse, and data leakage. It assesses the capacity of attackers to bypass security guidelines and steal secret data. Nowadays, companies’ most frequent AI cyberattacks are these.

3. AI API and Infrastructure Penetration Testing

We evaluate the plumbing surrounding artificial intelligence. This includes validating that the cloud infrastructure hosting the AI is hardened and looking for OWASP Top 10 flaws in the APIs.

This checks the systems surrounding the AI. For exposure and misuse, APIs, vector databases, cloud permissions, and tool integrations are examined. This is where many AI hazards to cybersecurity problems dwell.

4. Red Teaming for AI Systems

This is a thorough, multi-vector simulation. We show the real-world effects of a breach by poisoning the data, fooling the model, and taking advantage of the ensuing business logic faults.

This is a complete adversarial simulation. Using every available technique, ethical hackers strive to breach the artificial intelligence: social engineering, data poisoning, and prompt abuse. This illustrates how artificial intelligence security threats affect the actual world.

Qualysec applies all of these methods in its artificial intelligence VAPT projects.

Qualysec validates AI driven API and plugin security across real production integrations!

AI Security Compliance And Regulatory Considerations

Just like financial systems or essential networks, governments all over now regard artificial intelligence as a high-risk digital infrastructure. This is so that the security threats of AI no longer impact just IT systems. They affect surveillance, healthcare results, loan decisions, and even public safety. Regulators expect businesses now to show that they grasp and manage their artificial intelligence security issues.

For artificial intelligence governance, the European Union’s AI Act has become the worldwide standard. It obligates firms to record how models are trained, the data they employ, how bias is mitigated, and how risks are tracked. Legal consequences are now possible from any failure to control artificial intelligence cybersecurity threats.

Likewise, NIST in the United States published its AI Risk Management Framework, which emphasizes governance, testing, and risk detection.

Companies must now demonstrate four main compliance cornerstones that specifically deal with AI threats to cybersecurity:

- Risk assessments

- Data protection measures

- Human oversight

- Incident response controls

1. Risk Assessments

Companies must analyze how their artificial intelligence might be misused or changed under risk evaluations. This includes output leakage, data poisoning, model drift, and prompt injection. These hazards stay invisible without testing. This is why AI based cyber security now calls for adversarial testing instead of checkbox audits.

Qualysec helps organizations show that their AI security concerns are under ongoing management by running artificial intelligence penetration testing that maps every attack path.

2. Data Protection Measures

AI technologies handle enormous amounts of personal and financial information. Leaking this information via model results under GDPR and the EU AI Act is viewed the same as a database breach. Consequently, memorization and model inversion turn regulatory violations into not only technical flaws.

This is the reason testing for output-based data leakage is now required in controlled sectors. As part of its AI VAPT, Qualysec performs AI data leakage testing so companies may show that their models do not disclose personal data.

3. Human Oversight

High-risk artificial intelligence systems have to have a human in the loop per regulatory requirements. This implies judgments must be overturnable and subject to audit. Should attackers control artificial intelligence behavior, people have to be able to identify and stop it. This oversight has little purpose absent behavioral testing.

Qualysec supports businesses in confirming that their overriding and monitoring systems function under hostile circumstances.

4. Incident Response and Accountability

Organizations have to demonstrate how they identify, contain, and restore if an AI system is compromised. Traditional incident response plans do not include prompt-based data leaks nor poisoned models. This is a top priority AI security threat for teams working on compliance.

Not simply infrastructure breaches, Qualysec offers attack simulations demonstrating whether your company can react to genuine artificial intelligence events.

Assess AI risks in cloud environments, get in touch with Qualysec!

Regulatory Control vs Real World AI Risk

| Regulatory Requirement | What It Means in Practice | How It Reduces AI Security Risks |

| EU AI Act Risk Classification | AI systems must be labeled high, medium, or low risk | Forces companies to test the most dangerous AI models |

| GDPR Data Protection | Models must not leak personal data | Prevents model inversion and memorization |

| NIST AI RMF | Continuous risk monitoring | Stops model drift and silent exploitation |

| Audit Trails | Decisions must be traceable | Enables detection of manipulated AI behavior |

See how organizations achieved AI security compliance in real-world environments. Explore our case studies.

See How We Helped Businesses Stay Secure

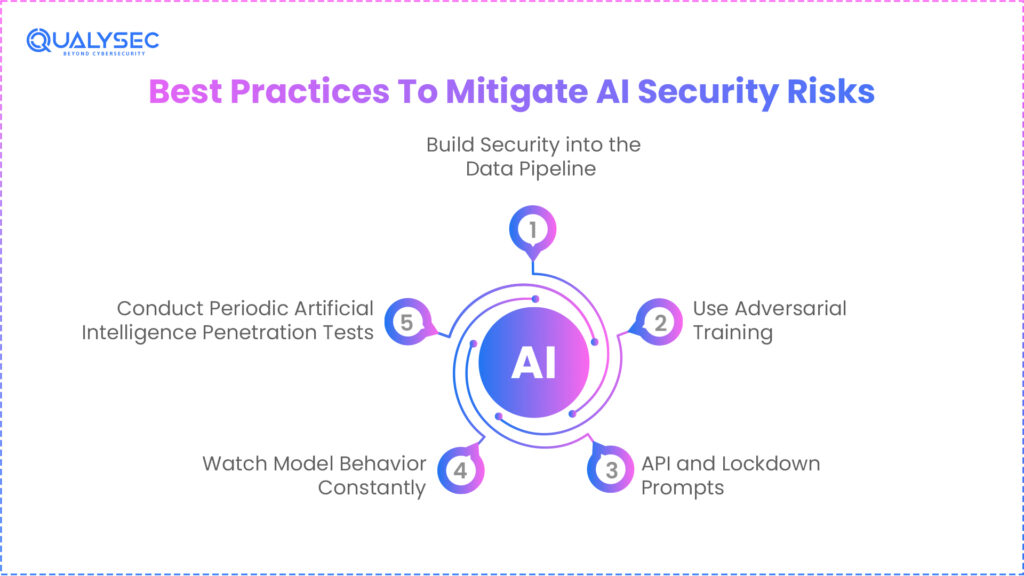

Best Practices To Mitigate AI Security Risks

Reducing artificial intelligence security threats calls for more than firewalls and encryption. AI systems must be designed, trained, and tested with adversarial behavior in mind. This is the foundation of modern AI cyber security.

1. Build Security into the Data Pipeline

Security must begin at data collection. Every dataset should be verified, versioned, and access-controlled. Open data and third-party feeds are the most common sources of poisoning. Sanitization and validation are essential to stop attackers from shaping how your AI learns.

2. Use Adversarial Training

Models should be trained on malicious inputs as well as clean data. This helps the AI learn what attacks look like. It reduces vulnerability to adversarial examples, which are one of the biggest AI cybersecurity threats today.

3. API and Lockdown Prompts

System prompts, tool instructions, and APIs have to be handled as secret assets. Attackers may override directions and misuse automation if there is no filtering and validation. A major artificial intelligence threat to cybersecurity now is prompt injection.

4. Watch Model Behavior Constantly

Static security inspections are ineffective against drifting artificial intelligence. You need to keep track of production trends, bias variations, and outlier spikes. These signals frequently show ongoing attacks long before data is taken.

5. Conduct Periodic Artificial Intelligence Penetration Tests

The sole way to determine whether your defenses are effective is to attempt to break them. Together, Qualysec performs AI penetration testing on models, data, prompts, and APIs.

How Penetration Testing Companies Secure AI-Driven Systems

Companies are concentrating on artificial intelligence and doing far more than port and API scans. Not only infrastructure, but they also show how actual attackers misuse artificial intelligence behavior. This is how artificial intelligence’s security concerns are really revealed.

They see whether models may be deceived into leaking data, endorsing fraud, or producing dangerous content. This exposes hidden artificial intelligence security issues undetected by any automated scanning.

They additionally attack training systems to check whether tainted data might go undetected. For AI based cyber security tools, where poisoned models become blind, this is especially important.

Modern AI pentesting also goes after embeddings, vector databases, and orchestrated layers, which are more often utilized to link LLMs to corporate systems. These now present significant security risks of artificial intelligence.

Qualysec focuses on this whole stack strategy by integrating conventional VAPT with hostile ML testing.

What Actual Artificial Intelligence Concentrated Penetration Testing Involves

1. Testing Logic and Model Behavior

Attackers do not target code. They threaten decisions. To observe how models react, AI pentesters provide them with highly skewed, untrue, and adversary data. This exposes whether the artificial intelligence may be made to support fraud, misclassify malware, or create hazardous content.

Not the infrastructure; this kind of testing specifically seekssecurity risks of artificial intelligence that dwell inside the model itself.

To find behavioral flaws that conventional scanners miss, Qualysec performs these tests throughout every AI VAPT project.

2. Pipeline for Data Poisoning Testing and Training

Furthermore, artificial intelligence testers are how information enters your training pipeline. They investigate whether bad or prejudiced samples can be introduced unnoticed. Should this occur, the model would suffer internally.

This is especially harmful for AI based cyber security tools since poisoned models prevent real threats from being found. Read more about AI Security Tools to Protect Your Organisation.

3. Prompt Injection and LLM Abuse Validation

Systems driven by LLMs are examined for quick injection, jailbreaks, and instruction overrides. Testers try to push the model to expose secret information, system prompts, or internal documents.

This reveals some of the most serious AI cybersecurity threats found in contemporary companies.

4. Abuse of API, Plugin, and Automation

Pentesters target the interfaces surrounding artificial intelligence as well. Model makers seek to get the model call APIs, access files, or trigger workflows. should not. This is where the majority of AI risk to cybersecurity turns into actual data breaches. Included in Qualysec’s AI pentesting scope is the whole API and plugin misuse testing.

See how our AI penetration testing helped organizations uncover their AI models’ critical vulnerabilities. Read our client testimonials.

Why Choose Qualysec For AI Penetration Testing Services

Most security companies evaluate artificial intelligence like a web application. They stop after scanning the API. That method misses where the true security dangers of artificial intelligence reside. Treating artificial intelligence as a living decision system that can be influenced by data, prompts, and logic, Qualysec seeks to change it.

Qualysec tests how your models think, not only where they run. This covers data poisoning, fast injection, model reversal, hostile inputs, API misuse, and automated abuse. These are the approaches attackers use to generate actual artificial intelligence cyberattacks.

Unlike standard reports, Qualysec links every technical result to corporate risk. This implies you do not simply discover what is wrong. You find out what fraud, data exposure, compliance penalties, and reputational damage might cost you. For companies handling artificial intelligence security issues under laws like the GDPR and EU AI Act, this is essential.

Qualysec also confirms if your AI based cyber security measures are genuinely guarding you or just fostering a false sense of security. Many businesses use artificial intelligence to combat threats and fraud, but they never check whether that artificial intelligence can be circumvented.

Qualysec offers AI security audits for businesses in financial, healthcare, SaaS, and fintech that satisfy operational as well as legal needs.

Conclusion

The security risks of artificial intelligence will only get more complex as we approach 2026. The scene changes daily, from AI-based cybersecurity defenses to the approaching AI threat to cybersecurity. Knowing the weaknesses in your artificial intelligence lifecycle, from data collecting to distribution and using strict AI penetration testing helps you to make sure your system continues to be valuable.

In the digital era, securing artificial intelligence is now a basic necessity for corporate continuity rather than a luxury. The companies that succeed will be those that accept artificial intelligence not only for its effectiveness but also for its tenacity. Create the basis of your artificial intelligence plan to stay one step ahead of the changing threat environment.

Don’t let your AI become a liability. Book an AI Security Consultation with Qualysec today to identify hidden risks before they impact your reputation!

Speak directly with Qualysec’s certified professionals to identify vulnerabilities before attackers do.

FAQs

1. What are the security risks of using AI?

Data poisoning, adversarial attacks, and prompt injection are among the major hazards. These might cause prejudiced or damaging decision-making, system alteration, and illegal access to data.

2. What is a common security threat in AI?

At present, prompt injection poses the most danger. It lets hackers trick a Large Language Model (LLM) into dismissing its security policies so they can undertake illicit activities or steal information.

3. What is the major security risk with the advancement of generative AI?

GenAI’s black box character makes it challenging to forecast its response to hostile requests. This raises the possibility of sensitive data leakage and the production of bogus code or disinformation.

4. How can penetration testing reduce the security risks of artificial intelligence?

Penetration testing finds flaws in the model’s logic by replicating actual attacks. Early detection of these flaws allows businesses to install protection and fixes before a genuine attacker abuses them.

5. Why do traditional security controls fail against AI-specific risks?

Conventional controls search for illegal access or malicious code. AI attacks sometimes use authentic-looking data to alter the model’s inner logic, which standard firewalls cannot identify.

0 Comments