Introduction

AI system development is taking a lot to take place now that AI systems are affecting real-world decisions such as medical devices, credit scores, chatbots, and recommender systems. Most of the artificial intelligence systems are created with no prior review for security, and the bad news is that it only takes one exposed API, a contaminated dataset, or one prompt injection to erode trust, expose data, or put you into a legal bind. This book will outline what professionals verify in AI, what they evaluate, and why having an organized AI security audit checklist is more important than it has ever been before.

The truth of the matter is, AI brings new types of risk that aren’t found with typical programming. Just one prompt injection can potentially invalidate an AI’s safety functions. Over time, a contaminated dataset can create subtle modifications to an AI’s behavior. An AI’s publicly exposed API can also expose sensitive training data without you ever knowing it because, once again, this new technology requires you to have a security audit checklist to help you evaluate it as soon as possible.

The Urgent Need for AI Security Audits

The product you made with technology is like a bomb going off any minute now, don’t you think? With the 2025 average cost of a data breach around the world being $4.44 million (in the US it’ll be $10.22 million), not taking the necessary precautions when it comes to your machine learning models is simply not possible. Your only option for ensuring your automated systems and LLMs are not leaked or affected is by performing artificial intelligence security audits. The following will detail how qualified experts will stress test artificial intelligence products for the purpose of legal compliance and continued business.

Companies just starting this journey might contact a service provider, such as Qualysec, to determine their true artificial intelligence footprint ahead of any governing body or malicious entity.

What Is an AI Security Audit?

An AI security audit involves a comprehensive examination of an AI model, its input and output data, and any related infrastructure to find any misuse, abuse, exploitation, and/or leakage. Unlike an evaluation of a traditional application, an AI audit will look at behavioral evidence rather than purely code. Where a normal Vulnerability Assessment and Penetration Testing (VAPT) looks for SQL injection attempts, an AI system security audit checklist seeks out unanticipated logic that could ultimately force the AI to act in an undesirable manner.

The model’s training and retraining have to take into account how inputs influence performance and how data is distributed across a network in the performance of an ML security audit. The audit will assess if the model can be tricked into producing destructive responses while everything about it is functioning normally. Unlike a traditional penetration test, the behavior of AI has been the primary focus of the AI audit.

During a thorough AI-based system security audit, the auditor will examine how the model interacts with automated agents, plugins, applications, and application programming interfaces (APIs). Most AI incidents are the result of excessive permissions or integration failures rather than model deficiencies. Therefore, the wider scope of the audit will complicate the audit process.

Critical Elements of an AI System Security Audit

- Training Integrity: Confirmation of the integrity of the training of the model, which confirms that no adverse instructions have been imparted to it.

- Input Robustness: Testing of the model’s response to contrary information (opposing signals) will give one insight into the degree to which the model has the ability to withstand erroneous inputs.

- Data Privacy: Confirming that queries cannot be used to retrieve training data.

- Infrastructure: Securing the APIs and cloud settings in which artificial intelligence resides is infrastructure.

Early on, organizations intending to expose artificial intelligence outside or seeking AI security audit certification usually hire seasoned pen testing companies like Qualysec to pinpoint blind spots before implementation instead of following a pricey event.

Why AI Models and Applications Need Security Audits

Many companies ignore a crucial reality in their hurry to use generative AI: AI creates a big new attack surface. Conventional firewalls cannot block a quick injection; typical encryption will not prevent model poisoning. Its mistakes turn into systematic risks as artificial intelligence assumes control of company activities.

In the panic to deploy, many times organizations underestimate how much attack surface they are producing via generative AI. A prompt injection attack cannot be stopped by conventional firewalls. At rest, encryption does not stop model inversion. Standard vulnerability scanners are clueless as to how a large language model fails or reasons.

The cost is great. One hallucinating reaction, leaking consumer data, could quickly obliterate years of brand trust. Additionally, catching up quickly are regulators. Organizations are now legally responsible for dangerous artificial intelligence conduct, not only breaches, with regulations like the EU AI Act becoming enforceable. Actual results include fines, service restrictions, and required revelations.

Another significant motivator is intellectual property risk. Attackers can gradually rebuild proprietary models by means of tactics like model extraction, whereby they continually question exposed APIs. This can cause the loss of a competitive edge without any conventional breach ever happening.

The High Stakes of AI Neglect

- Reputational Damage: One could overnight ruin brand trust with a hallucinating artificial intelligence that either publishes inappropriate material or leaks consumer information.

- Regulatory penalties: Non-compliance could draw fines of up to 7% of worldwide turnover as the EU AI Act and ISO/IEC 42001 standards become law of the land in 2026.

- Intellectual Property Theft: By model inversion, attackers may steal the secret recipe of your company by reverse-engineering your proprietary algorithms or training sets, hence stealing intellectual property.

- Operational Disruption: If someone fools an artificial intelligence agent and it controls your calendar or email, it may carry out unapproved actions that result in a cascaded security breach.

This changing risk environment helps to explain why AI in cybersecurity audits has evolved to be its own independent field. Many businesses turn to experts like Qualysec today to perform focused artificial intelligence audits that emphasize how hostile pressure causes models to falter, not simply whether servers are updated.

Explore Qualysec’s approach to securing AI training pipelines with a perfect AI security checklist!

See How We Helped Businesses Stay Secure

Key Security Risks in AI Systems

Before using an AI security audit checklist, businesses need to have a clear grasp of the main threat categories molding artificial intelligence security now. Unlike conventional applications, artificial intelligence systems do not collapse just due to flaws in code. They fall apart via behavior, data manipulation, and uncontrolled learning routes. These dangers have a direct bearing on the execution and scope of an artificial intelligence system security audit.

Misconception About AI Risks

The largest error businesses make is assuming artificial intelligence has theoretical risks. Attackers are actually using generative models via delicate inputs, poisoned datasets, and frequent API requests. These strikes seldom set off usual security warnings. This is why IT security audits based on artificial intelligence concentrate on hostile behavior instead of perimeter defense.

Impact of Scale on AI Security

Scale is another major consideration. Massive amounts of data and requests are handled by artificial intelligence systems. Every hour, a single flaw can be used thousands of times, increasing influence. Early identification of core threat categories is therefore absolutely vital for arranging audit effort and remediation investment.

Common Operational AI Security Risks

Apart from these kinds, operational risks include:

- Prompt injection

- Jailbreak threats

- Output-based data leakage

- Hazardous artificial intelligence APIs

- Unmonitored retraining cycles

These are becoming more and more widespread. The problems cause AI system security audits to be radically different from conventional VAPT activities.

These hazards shape the field of artificial intelligence in cybersecurity inspections. Unlike a traditional VAPT, whose aim is usually to find a way in, an AI/ML security audit mostly concerns what the system does once a user is already in and interacting with the model. Companies must understand that the target is the model itself, not only the server housing it.

Primary AI Attack Vectors and Business Impact

| Threat Category | Technical Description | Primary Target | Potential Business Impact |

| Adversarial Attacks | Crafted inputs designed to trigger incorrect model behavior. | Inference Layer | Bypassed safety filters and unauthorized actions. |

| Data Poisoning | Injecting malicious data into training or fine-tuning sets. | Training Pipeline | Permanent “backdoors” and corrupted model logic. |

| Model Extraction | Querying an API to reconstruct the model’s parameters. | API / Model Weights | Loss of competitive advantage and IP theft. |

| Prompt Injection | Overriding system instructions via user-provided text. | LLM Logic | Data exfiltration and unauthorized tool execution. |

Organizations starting this risk analysis often use Qualysec to identify early threats before production or regulators’ visibility of vulnerabilities.

Assess AI risks in cloud environments, get in touch with Qualysec!

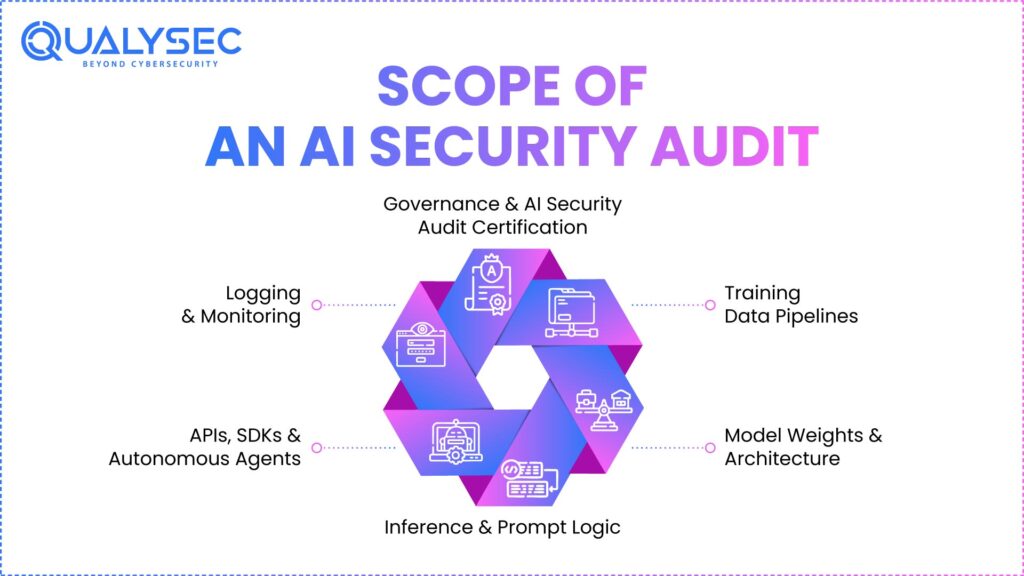

Scope of an AI Security Audit

The model layer is never where a severe AI security audit checklist finishes. Narrowing the focus just to the inference logic generates a hazardous, mistaken sense of security. AI systems are complicated ecosystems, not individual parts. An audit must examine the data at rest, the data in motion, the model’s internal parameters, and the peripheral devices that the artificial intelligence is permitted to use to be successful.

Full-scope audits look at training data pipelines to find unauthorized access points and poisoning dangers linked to AI security vulnerabilities. It analyzes the model design and weights to estimate exposure and integrity. A review of inference logic and prompt management helps to find possible paths of manipulation leading to jailbreaks. At last, the audit should include autonomous agents, SDKs, and APIs, often the weakest links of the chain, as well as APIs.

Key Components of an AI Security Audit

- Training Data Pipelines: Inspecting the data lake’s security and confirming that unauthorized people haven’t altered training sets.

- Model Weights and Architecture: Verifiers check that the model file itself hasn’t been substituted or tampered with to add malicious subroutines: model weights and architecture.

- Inference and Prompt Logic: Stress-testing the mechanisms controlling LLM behavior guarantees that they cannot be readily avoided by inference and prompt logic.

- Logging and Monitoring: Logging and monitoring guarantee that during the AI’s thinking process, they do not retain sensitive inputs such as passwords or PII in plain-text logs.

Training data pipelines start a comprehensive artificial intelligence system security audit. Auditors investigate where information comes from, who is allowed to change it, and how adjustments are approved. This stage is absolutely vital, as poisoned data can fundamentally change model behavior long before inference even starts.

Next is a review of model architecture and weights. Auditors evaluate the integrity checks in use, how access is managed, and the place of models’ storage. Access here without permission might result in stealing or silent damage.

To find paths of manipulation, inference logic, and prompt management are examined. This comprises context windows, guardrails, and system prompts that attackers might use by means of indirect inputs.

Governance, monitoring, and logging complete the scope. At scale, debug logs sometimes reveal personal data, responses, or prompts. Until compliance audits happen, poor access controls here often go unnoticed.

Many times, businesses getting ready for an AI security audit certification define this scope with Qualysec early on to avoid any vital layer being missed.

Contact Us to speak with our experts and start a comprehensive AI security audit to identify critical risks across your AI systems.

Contact Us to Detect and Mitigate AI Security Risks

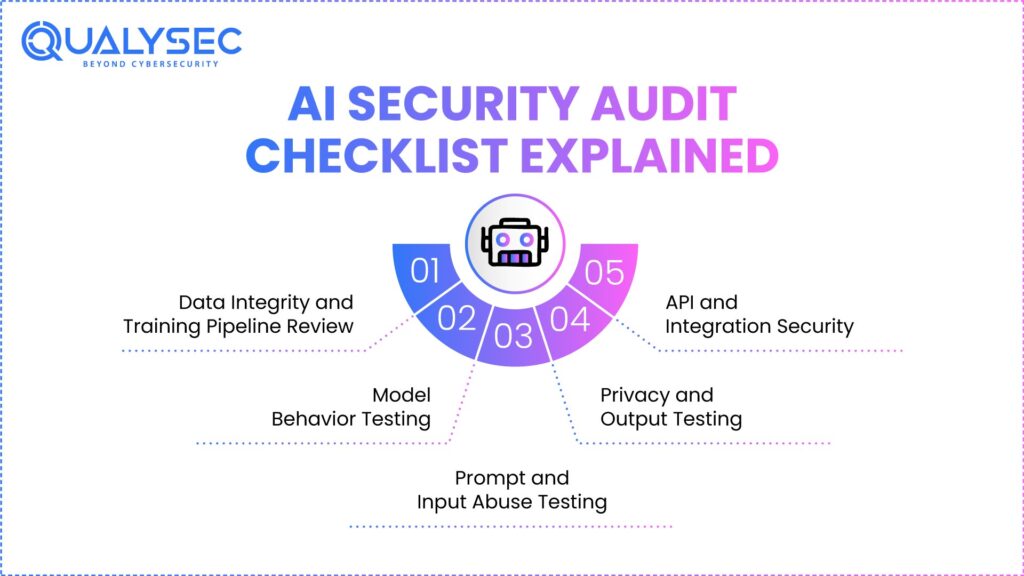

AI Security Audit Checklist Explained

A simple task list is not an AI security audit checklist. It’s a methodical system that reflects how actual attackers probe, change, and exploit artificial intelligence systems put to use in production settings, while supporting regulatory compliance. Unlike lab testing, production artificial intelligence has erratic inputs, changing data, and constant user contact; hence, static validation is useless.

Expert auditors create this checklist to cover the whole AI lifecycle. Every stage builds on the one before it to guarantee that flaws found earlier are revalidated in later stages. This multi-layered approach helps to avoid false assurance resulting from a single passed test concealing more significant structural problems.

Accountability is another major reason this checklist matters. Regulatory agencies and corporate boards increasingly anticipate written proof that AI hazards were found, evaluated, and reduced as part of modern AI-driven cybersecurity practices. One’s traceability comes from a checklist-driven artificial intelligence system security audit.

Additionally, the checklist guarantees consistency across audits. Teams usually over-test obvious hazards like prompts without them, while neglecting less noticeable areas like data provenance or logging exposure.

For companies considering long-term artificial intelligence deployment or AI security audit certification, this checklist forms the foundation of regular governance instead of a one-time effort.

1. Data Integrity and Training Pipeline Review

Every artificial intelligence system starts with training data; therefore, it also constitutes one of the most often disregarded attack surfaces. Auditors first verify the source of the training data in an AI security audit and whether those sources can be relied upon over time.

Auditors investigate who is authorized to add, change, or erase datasets. These safeguards provide insider or hacked accounts a chance to insert malicious data in many companies since they are informal or unrecorded. Model behavior can be permanently changed even by a modestly affected dataset, making early AI malware detection essential.

Next examined are the dataset lineage and version management. Auditors check whether earlier versions of training data may be restored if corruption is discovered. Models poisoned without rollback ability might need whole retraining, therefore raising cost and downtime.

Workflow retraining is likewise assessed. Many times, continuous learning pipelines swallow live data devoid of adequate verification. Auditors verify if unusual patterns are found before they are included in the model.

This stage views training channels as essential infrastructure, not behind-the-scenes activities. Every AI/ML security audit must have this as a core pillar.

2. Model Behavior Testing

Model behavior testing emphasizes an artificial intelligence system’s responses under stress, ambiguity, and enemy pressure. This stage goes beyond accuracy measures in an AI security audit checklist to cover safety, consistency, and dependability.

To identify failure modes, auditors purposely push models into doubtful or hazardous settings. This includes testing hallucination tendencies, contradictory instructions, and incomplete context. These tests show whether the model yields confident but incorrect outputs or decomposes securely.

Another important consideration is refusal logic. Auditors check whether the model always rejects unauthorized activities or if small phrasing changes enable rule circumvention. One of the most significant compliance concerns is erratic refusal behavior.

Edge-case thinking is also studied. While models may perform well on usual searches, they can fail terribly when confronted with uncommon or antagonistic inputs. These mistakes usually only come to light when applied in the real world.

A crucial part of AI in cybersecurity audits is behavior testing, which links technical performance to corporate risk.

3. Prompt and Input Abuse Testing

One of the most used AI flaws now is targeted by quick and input misuse testing. Attackers use input to overwhelm system instructions, switch off defenses, or force models into risky conduct.

Auditors start with direct prompt injection and try to see if a clear direction overcomes obstacles. Then they move to indirect prompt injection, in which they tuck malevolent instructions inside papers, websites, or metadata that the artificial intelligence is permitted to handle.

Multi-step jailbreak tries to let persistent attackers who repeatedly modify inputs based on model responses simulate. This shows if protections weaken with time rather than fail right away. Auditors check context window modification as well. Particularly in agent-based systems, big context windows may unwittingly amplify negative commands.

This stage helps to determine whether guardrails are truly enforced or just recommended, therefore affecting AI system security audits.

4. Privacy and Output Testing

AI systems can leak sensitive information without any conventional violation. Output and privacy testing emphasize what the model exposes, not only how it is accessed.

Auditors check whether models have memorized training examples, particularly when those samples include PII, proprietary data, or regulated information. Membership inference attacks help one establish if certain information was used in training.

Checks of the log and monitoring systems help to pinpoint whether outputs or cues are saved improperly. Many companies unwittingly log sensitive data en masse. To determine whether output filters constantly erase sensitive data under unfavorable wording, stress tests are conducted. Partial masking errors abound and are hazardous.

Organizations working under GDPR, HIPAA, PCI DSS, or financial rules must go through this phase.

5. API and Integration Security

Most often, artificial intelligence abuse occurs via APIs. Exposed through poor interactions, even a well-protected model becomes dangerous.

Auditors verify least-privilege access by testing authentication and authorization logic. Common results include over-permissioned API keys and shared tokens.

Rate limiting is assessed to stop token flooding threats that result in denial-of-service circumstances or high usage expenses. The systems for detecting abuse are also discussed.

To make certain internal prompts, system messages, or debugging details are not apparent through API responses, someone checks them.

Agents, plugins, and tool integrations draw special focus. Attacks may use over-permitted tools to remotely access databases or carry out tasks employing artificial intelligence.

Testing for Model Poisoning and Data Integrity Risks

Model poisoning is among the most severe artificial intelligence threats since it rarely sets off usual security alerts. Attackers establish training data malpatterns that only under particular, hidden circumstances allow for long-term buried activity. These pipelines have to be regarded as essential infrastructure in an AI/ML security audit checklist to stop Shadow AI or unproven datasets from altering the fundamental premise of the company.

Practical Approach to Data Integrity:

- Check Data Origin: To make sure no unlawful modification has taken place, follow the digital lineage of every dataset with cryptographic hashes; check data origin.

- Implement Anomaly Detection: Statistical methods may be applied to find in the training set outlier data points that might indicate planned poisoning; this is anomaly detection.

- Review Versioning Controls: Always make certain that older known-good model versions are accessible for fast rollback should an audit reveal a backdoor via review versioning mechanisms.

- Sanitize External Data: External information has to be cleaned. Before including any information obtained from the internet or outside sources in the fine-tuning system, a thorough cleaning procedure has to be undergone.

Download our comprehensive penetration testing report to review detailed security findings, identify vulnerabilities, and understand how to strengthen your systems against potential threats.

Get a Free Sample Pentest Report

Identifying Prompt Injection and Jailbreak Vulnerabilities

The most often seen flaw in LLM-based systems now on the market is prompt injection. Attacks either reappropriate connected instruments for data exfiltration, turn off security logic, or alter inputs to get around system commands. Auditors apply the MITRE ATLAS framework to imitate actual aggressive strategies, including direct and indirect injections, which might occur when artificial intelligence gets access to a poisoned website or document.

Advanced testing methodologies include:

- Adversarial Red Teaming: Manually generating jailbreak cues to determine whether the model may be tricked into ignoring its system-level safeguards.

- Multi-Step Attacks: Multi-step threat modeling of relentless attackers slowly lowering the model’s safety filters across several conversations.

- Indirect Injection Testing: One confirms the sensitivity of the artificial intelligence to hazardous commands concealed in approved sources of external data using indirect injection testing.

Detecting AI Data Leakage and Privacy Issues

Systems of artificial intelligence can reveal data devoid of any conventional breach. Exactly crafted enemy questions let models inadvertently recall sensitive training situations or reveal personal data. Companies must adhere to the IBM Cost of a Data Breach Report 2025 results, which point to significantly higher breach costs brought on by ungoverned artificial intelligence (AI) systems. This is a nightmare for them.

| Leakage Type | Technical Cause | Mitigation Strategy |

| Training Data Extraction | The model memorizes specific PII samples. | Differential Privacy & Data Scrubbing. |

| System Prompt Leakage | Improper handling of “forget previous instruction” queries. | Instruction Isolation & Masking. |

| Membership Inference | Output variances reveal if a user was in the training set. | Noise Injection & Output Normalization. |

| Log Exposure | Sensitive prompts are cached in unencrypted text files. | Secure Logging & Automatic PII Masking. |

Securing AI APIs, LLMs, and Integrations

The most often used beginning for artificial intelligence abuse is APIs. Even the safest, well-trained models turn into hazardous ones when exposed via defective endpoints. To stop token flooding and denial of service (DoS) attacks, auditors verify authentication, permission, and rate restrictions. Moreover, the integration with automated agents and plugin calls for a Zero Trust strategy to prevent an attacker from using artificial intelligence to execute code within the business’s internal network.

- API Authentication Review: Regular rotations of keys prevent unauthorized access and guarantee protection of all endpoints, and strengthen LLM security.

- Token Rate Limiting: Strict quotas on API consumption prevent large cloud billing surges brought on by evil, recursive queries, that is, token rate limiting.

- Permissions for Plug-in Sandboxing: Restricting the ability of artificial intelligence tools so they can only get access to the information absolutely needed for their particular objective.

- Error Message Sanitization: Ensuring that external parties are not shown internal system cues or architecture details through API error codes.

AI Risk Assessment and Threat Modeling

AI risk assessment and threat modeling stand at the point where technical testing transforms from just a security activity into a business conversation. Because it helps to explain the value of a finding, this step is not elective in an artificial intelligence security audit certification process. Auditors see flaws as risks affecting income, compliance, safety, and trust instead of just listing them. This enables leadership organizations and regulators to clearly see how an artificial intelligence solution might not succeed in the real world.

Identifying Critical Assets

The first stage is identifying which needs protecting. Beyond servers and APIs, critical resources in artificial intelligence systems include:

- Model weights

- Embeddings

- Prompts

- System instructions

- Training material

- Feedback loops

- Decision results

All of which are under the auditor’s focus. Each of these assets could be aimed at or used inappropriately in several respects. For instance, damaged embeddings can somewhat change suggestions; poisoned training data can result in long-run bias that persists throughout model updates.

Data Flow and Trust Boundaries

Once assets are defined, auditors trace data flows from start to finish. This covers where information is kept, how it moves between components, how it enters the system, and where decisions are made. The bounds of trust are clearly defined. These are spots where data or control passes among users, systems, vendors, or programmable entities. At these borders, many artificial intelligence failures happen, including when third-party tools gain more background than anticipated or when user inputs cross into internal prompts.

Adapting Threat Modeling Frameworks for AI

Traditional threat modeling methods like STRIDE or DREAD are then adapted for artificial intelligence environments using well-known pentesting frameworks. Rather than only denial of service or comedy, auditors use these models to cover artificial intelligence-specific risks. This includes bias amplification, model manipulation, fast injection, overreliance on outputs, and unfair decision authority. The goal is to modify those frameworks such that they reflect how artificial intelligence systems actually behave rather than to force AI into ancient systems.

Compliance Considerations for AI Security Audits Checklist

Not just a suggestion, compliance is a necessary operational demand in 2026. To ensure security and openness, regulators demand confirmed evidence of autonomous artificial intelligence audits and risk management of artificial intelligence. Ignoring global standards can have terrible consequences, including forced closure of your artificial intelligence systems in major markets, including the European Union.

| Framework | Regulatory Body | Core Focus | Required Evidence |

| ISO/IEC 42001 | International (ISO) | AI Management & Governance | Proof of risk assessment and secure lifecycle. |

| EU AI Act | European Union | Safety & Fundamental Rights | Technical documentation and conformity audits. |

| NIST AI RMF | USA (NIST) | Trustworthiness & Robustness | Mapped risks and documented mitigation steps. |

| HIPAA/GDPR | Various | Data Privacy & PII Protection | Proof that AI doesn’t leak personal data. |

Obtaining an AI security audit certification and checklist using these methods gives the technical foundation needed to foster public trust. Companies frequently quote the NIST AI Risk Management Frameworkas their primary resource for planning a secure lifespan for their machine learning systems.

Don’t let your AI become a liability. Book an AI Security Consultation with Qualysec today to identify hidden risks before they impact your reputation!

Speak directly with Qualysec’s certified professionals to identify vulnerabilities before attackers do.

Tools and Techniques Used in AI Security Audits Checklist

Modern audits combine human expertise with specialized technologies intended to discover flaws that regular security systems miss. While automation is required for scanning large datasets, the creative nature of AI attacks, such as jailbreaks, still calls for human interaction. Experts use dynamic testing (interacting with the live model) together with static analysis (code review) to ensure complete coverage.

- Static Analysis of Pipelines: Evaluating ML code and infrastructure for vulnerabilities, including insecure deserialization or unencrypted data movement.

- Behavioral Fuzzing: Behavioral fuzzing is the bombardment of millions of random, unusual inputs on the model to see if it produces illegal or undesirable reactions.

- Specialized AI Scanners: Specialized artificial intelligence scanners are created in part by real-time model drift tracking and prompt injection detection employing technologies like Giskard, Lasso, or Mindgard.

- Manual Red Teaming: The gold standard of AI security, manual red teaming uses instinct and social engineering by human hackers to seek to breach the system.

Best Practices for Securing AI Models and Applications

Once the audit report is handed in, security does not cease; programs using artificial intelligence are always evolving. As they gather new information, models can alter; yesterday’s safe could be vulnerable today. Following these top standards assures long-term resiliency and helps to preserve the integrity of your AI security audit certification.

- Continuous Testing: Regular audits following any significant retraining or tweaking of your models will help you to find fresh weaknesses.

- Human-in-the-Loop (HITL): Before an artificial intelligence finalizes any high-stakes choice, make sure a human expert validates it.

- Strict access control: least privilege should be applied to all training data and prompt-management interfaces.

- Output Monitoring: Establish real-time filters that monitor and block sensitive or prohibited model outputs before they reach the end user.

- Zero Trust Architecture: Never trust an input merely because it originates internally; every prompt should be treated as potentially malicious.

Why Choose Qualysec for AI Security Audits

Selecting the right partner will define how well your audit performs. Based on actual attack simulation and sophisticated adversarial research, Qualysec fosters a security-first perspective built on AI for cyberattack prevention. Our staff are committed security researchers who know how to break and repair the most sophisticated artificial intelligence systems available; we are not only compliance inspectors.

We conduct manual artificial intelligence red teaming, use a strict artificial intelligence security audit checklist, and provide reports precisely suited to the high standards of NIST, ISO 42001, and the EU AI Act. Above all, we offer practical fixes. We help your developers to implement the solutions and fortify your infrastructure against tomorrow’s threats rather than just give you a list of problems. Most importantly, we offer practical correction advice; that is, we demonstrate precisely how to repair what has broken rather than merely say what is wrong.

- Specialized Expertise: Our auditors are taught the newest hostile machine learning methods and are therefore ahead of the jailbreak trends.

- Compliance-Ready Reports: We provide the necessary paperwork to pass outside audits and get an AI security audit certification.

- End-to-End Testing: From the training data to the final API response, end-to-end testing covers every inch of your AI attack surface.

Conclusion

AI is influencing the course of international business, yet that future depends on openness and security to maintain trust, a responsibility increasingly addressed by AI cybersecurity companies. Once a luxury only accessible to tech behemoths, an organized artificial intelligence security audit is now a basic, non-negotiable need for any company employing machine learning in 2026. The hazards of model poisoning and prompt injection cannot be disregarded as models grow more potent and intimately intertwined with important decisions.

Following a thorough AI security audit checklist and partnering with seasoned allies like Qualysec enables your company to innovate fearlessly rather than hesitantly. Data paves the way to AI-driven development, but it is secured with constant awareness. Begin your evaluation right away to guarantee your road to the future is a secure and resilient one.

Talk to Our AI Assistant for Expert AI Security Audit Insights and Risk Assessment Guidance.

Chat with our intelligent AI Assistant and get tailored insights in seconds.

Frequently Asked Questions

1. How do you audit an AI system?

An audit entails several stages: examining data pipelines for poisoning, stressing the model with hostile probes (red teaming), verifying privacy measures to avoid data leakage, and protecting the APIs and infrastructure hosting the model.

2. What is the ISO standard for AI security?

Focusing on Artificial Intelligence Management Systems (AIMS), ISO/IEC 42001 is the main benchmark. Usually deployed with ISO/IEC 27001 for more general information security administration,

3. Can an audit be done by AI?

Although an AI-assisted security audit checklist helps to scan large codebases or datasets, human skill is irreplaceable. Human red teamers need to appreciate the complicated, inventive, and logical hallucinations that cause artificial intelligence security problems.

4. What is the security assessment of AI?

It assesses how an artificial intelligence system could be abused, controlled, or used, and how those dangers affect compliance, safety, and privacy.

5. When should organizations conduct an AI security audit?

Before the first deployment, after any major model retraining or fine-tuning, and at least yearly to reflect model drift and new attack methods found in the field, the team ought to perform an audit.

6. How do AI security audits support regulatory compliance?

Proving to authorities that your artificial intelligence system is safe, transparent, and secure, they provide the documented technical proof, risk assessments, and mitigation proof demanded by laws like the EU AI Act.

0 Comments