Introduction

AI systems have emerged from the shadows, so to speak. Apart from being the first interaction point, the current chatbots derived from big language models (LLMs) are also the most potent tools running behind, performing tasks like resume analysis, pattern recognition, and assisting doctors in diagnosis, among others. The consequences are almost instant, and it may even be fast to correct any errors made, therefore showing a really significant impact over time. This is why AI Pentesting Tools are so necessary to make sure that these systems can safely, securely, and without any vulnerabilities, that makes it possible for them to be taken down.

An AI mistake might result in a range of bad repercussions, including preventing someone from seeking justice, revealing sensitive client data, generating immoral activities, or getting fined. Unlike typical software problems, which are often quickly noticeable, malfunctioning artificial intelligence can still function normally as the harm is done. This characteristic of artificial intelligence problems makes them both more dangerous and more challenging to detect.

Why Traditional Penetration Testing Fails With AI

From the outside, classic penetration testing looked at applications, servers, and online systems. It depended on expected patterns of thought and a set path of actions. It was never intended to assess the degree to which a model could be duped through discussion, its grasp of the vagueness of language, or its reasoning capacity.

Companies are adopting artificial intelligence much quicker than they can put in place security measures, hence increasing the gap between the two sides. Attacks had to be conducted through the infrastructure available in earlier times. They only drilled into the AI layer, not deep. Taking advantage of APIs tied to the AI or modifying prompts to such a degree that they bypass already in place conventional security mechanisms helped one to accomplish this.

The Shift From Infrastructure Security to AI Behavior Security

This is exactly why contemporary security teams lean toward including artificial intelligence (AI) penetration testing solutions in their arsenal. Rather than the installation difficulty, these instruments concentrate on the behavioral risk. The way the AI algorithms will behave under stress, how the prompts might trick the reasoning, and how the AI will engage with the sensitive systems at the backend are all under examination.

You will realize throughout this session that the corporate circumstances are the real results of the AI in the cybersecurity testing and AI-assisted penetration testing interaction. Before the attackers arrive at the artificial intelligence systems, the security teams are evaluating the threats and testing the methodologies, tools, and verification methods.

What Are AI Pentesting Tools?

Exclusively intended for AI models, LLM-based applications, and AI-empowered APIs are the artificial intelligence penetration testing tools. From the new breed of artificial intelligence, rather than from the conventional vulnerability scanners looking for recognized vulnerability fingerprints, comes the cutting-edge solution for penetration testing.

How AI Pentesting Tools Differ From Traditional Scanners

Unlike their more conventional automated equivalents, the new AI-powered penetration testing tools examine the client’s AI systems rather than relying on the user-defined system behavior. From the point of view of behavioral analysis, they are trying to comprehend the mind rather than looking for human-like errors.

Core Testing Methods Used in AI Pentesting

Among the methods employed in the security evaluation of artificial intelligence is volatility testing. Among the elements they examine are

- output consistency

- fast handling

- decision logic

- chains of thought

- situational memory.

Rather than checking whether the machine is accessible, they urge people to think like machines. This method works well for machine learning in cybersecurity, where behavior matters as much as configuration.

One of the most important components of this is the Bad Actor Simulation. These techniques imitate the real attacker’s strategies, which include indirect command overrides, prompt injection chains, role misplacement, and slowly losing security over extended meetings.

Such techniques enable the security teams to identify flaws that testing infrastructure would never uncover. Though technically secure, the AI is also logistically vulnerable.

Almost totally, artificial intelligence penetration testing solves difficulties that other technologies cannot. It thinks in ideas rather than in relationships. One of the reasons artificial intelligence model security testing has developed into its own security area is this.

Why This Level of Testing Is No Longer Optional

Companies utilizing artificial intelligence for manufacturing purposes run the danger of loss of confidentiality should they fail to meet this degree of security evaluation. While classical systems’ security is at the level of fundamental security, that of AI-based systems is up to the standard of pentesting.

Qualysec validates AI-driven API and plugin security across real production integrations!

Why AI Models and LLM-Powered Apps Need Security Testing?

The attack surfaces of artificial intelligence systems are entirely distinct from those of traditional software. LLMs are analyzing the language, determining the intent, and dynamically generating the answers. All such adaptability goes along with the possibility of abuse.

The operations of the artificial intelligence system produce probabilistic judgments, somewhat opposite to deterministic software. The result might be greatly affected by even a little alteration in context or language. Therefore, the attackers find it simpler to go undetected past existing security measures.

LLM-based applications are usually the ones that act like mediators between the sensitive systems and users. They are granted high-level permissions to communicate with live APIs, cloud services, databases, and even plugins. Thus, a compromised AI turns into a credible attack intermediary. As a result, the need and importance of AI/ML penetration testing continues to grow nowadays.

Another issue that raises eyebrows is that of data disclosure. The repeated use of such systems can lead to unintended, but sudden, disclosures of internal documents, personal data, and other types of information, like that used for training or system prompts. This is especially critical in the case of controlled sectors where the risk is already high, and the risk is multiplied several times.

In the process of security testing, not only technical defects but also verbal abuse have to be included. Therefore, AI pentesting tools are the ones that categorize the AI failure modes for further analysis.

Organizations that view artificial intelligence as low-risk software often find faults only after a leak. Proactive artificial intelligence penetration testing helps to prevent legislative, economic, and reputational damage.

AI Security Standards Landscape

| Framework | Focus Area |

| NIST AI RMF | AI risk governance |

| OWASP LLM Top 10 | LLM threats |

| ISO 23894 | AI risk management |

When To Schedule AI Pentesting

| Scenario | Recommended Action |

| New AI deployment | Full AI pentesting |

| Model retraining | Re test |

| Plugin added | API testing |

| Compliance audit | Manual review |

Ensure your AI models meet NIST AI RMF, OWASP LLM Top 10, and ISO 23894 requirements. Contact us to start your AI security assessment.

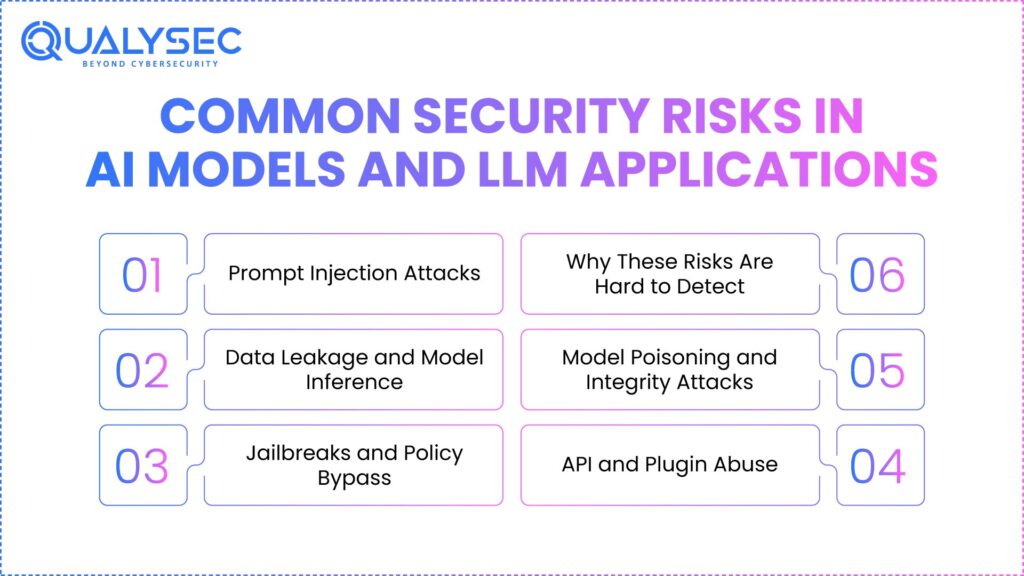

Common Security Risks in AI Models and LLM Applications

Applications based on LLMs and AI algorithms provide security flaws impossible to see by normal application testing. Rather than just from code errors, these risks come from the way artificial intelligence (AI) systems recall and react to language. Because artificial intelligence functions probabilistically, the same system might produce unsafe results one minute and safe ones the next.

Security teams occasionally miss these dangers since the surroundings of the artificial intelligence appear secure. It is feasible to set up firewalls, access restrictions, and authentication properly. Attacks still bypass everything by interacting directly with the AI interface.

Instead of technical misconfigurations, the most dangerous artificial intelligence threats hunt behavioral flaws. Only during real interactions spread over time do these imperfections become evident. This clarifies why AI pentesting tools focus on mimicking attacker conversations rather than studying endpoints.

LLMs also obliterate the limits of trust. Usually, they lie between users and sensitive systems. Once compromised, they act with authorized access, making attacks difficult to distinguish from typical behavior.

Below are the most important AI cybersecurity threats that AI pentesting, pentesting AI, and AI-powered penetration testing are supposed to expose.

1. Prompt Injection Attacks

Prompt injection uses how LLMs rate directions across developer prompts, system prompts, and user inputs. By sneaking malicious commands into user communications, attackers circumvent inner rules the artificial intelligence was never meant to reveal.

Unlike conventional injection strikes, rapid injection does not rely on apparent payloads or erroneous syntax. Natural language is reminiscent of the strike. Detection is therefore extremely difficult using traditional security methods.

Effective prompt injection may cause artificial intelligence systems to reveal system prompts, divulge secret data, circumvent content restrictions, or execute actions beyond their targeted scope. Some assaults enable hackers to affect artificial intelligence to contact high-permission internal APIs.

Regular injection is adaptive and steady. Over several interactions, attackers perfect their inputs, therefore gradually weakening safeguards. One-time testing is inadequate. Pentesting AI should mimic real conversations that vary over time.

Because they use logic instead of code, scanners are blind to these assaults. Only artificial intelligence penetration testing solutions utilizing multi-turn behavior can effectively reveal these.

2. Data Leakage and Model Inference

Data leaking in artificial intelligence systems rarely stems from one unambiguous response. Instead, it grows gradually via deduction. Models are always questioned by attackers who also search patterns and rebuild private data bit by bit.

LLMs trained on private, personal, or ruled data can unintentionally disclose bits of that data via carefully designed prompts. Attackers can increasingly infer internal papers, training records, or user-specific data.

This risk is notably great for industries regulated by financial regulations, GDPR, and HIPAA.

3. Jailbreaks and Policy Bypass

Jailbreak attacks alter the thinking process of an artificial intelligence model so as to circumvent internal restrictions. Instead of asking for banned material directly, the attacks guide the model via imagined, role-play, or abstract thought paths.

Static rules and keyword filters battle quickly against jailbreak approaches. Attacks keep adjusting their prompts till the model produces bounded results.

These attacks are especially dangerous in consumer-facing chatbots, copilots, and artificial intelligence assistants, where customers rely on outputs. One Jailbreak attack can cause a regulatory investigation or reputational damage.

Continuous artificial intelligence penetration testing is vital, given that jailbreak techniques are always changing. Once-off validation is not consistent with real attacker behavior.

Security teams must examine whether models preserve policy limitations throughout prolonged discussions, emotional control, and implicit thought scenarios.

4. API and Plugin Abuse

Usually linked with APIs, plugins, and underlying services, apps driven by LLMs inspire others. These connections raise risk together with growing roles.

Through authentic-looking requests, attackers may launch illicit API calls, access restricted resources, or exfiltrate data by changing artificial intelligence signals.

Often, artificial intelligence’s permissions go further than those of end users. If used, it becomes a privileged assault proxy working inside trustworthy systems.

During traditional API security testing, pathways reached via artificial intelligence are ignored. Artificial intelligence-driven penetration testing has to assess how AI commands generate backend operations.

This includes unneeded permissions testing, missed authorization verifications, and dangerous plugin setting analysis.

5. Model Poisoning and Integrity Attacks

Model poison strikes emphasize training and pipeline adjustment. Assaults can bring harmful data that gradually alters model behavior over time if the data sources are not protected.

Unlike open attacks, poisoning is almost unnoticeable. Though the model still functions, decisions gradually travel in a way advantageous for the attacker.

Particularly hazardous here are fraud detection, risk scoring, and recommendation systems, where minor changes can have major effects.

Using pentest artificial intelligence model methods, integrity testing determines if findings remain steady, neutral, and consistent with aims.

6. Why These Risks Are Hard to Detect

One characteristic is common to the dangers given. They depend on acts rather than structures.

Standard scanners count what is visible. The manner in which systems function is a benefit for artificial intelligence attacks. This makes clear why artificial intelligence pentesting techniques arrange categories depending on violent contact rather than defect signatures.

Today Download our sample penetration testing report to understand real-world security risks in AI models and LLM applications.

How Security Teams Perform AI Pentesting

Artificial intelligence pen testing is a mix of adversarial testing, behavioral analysis, and commercial risk verification for security teams. Finding faults is not the only objective; rather, it is also to learn how artificial intelligence systems fail as a result of actual attacker circumstances.

AI Asset Discovery and Mapping

Starting with artificial intelligence asset mapping, it is known as under review. Teams examine every model, LLM, API, plugin, vector store, embedding pipeline, and data source. Even in the lack of clear visibility, testing gaps exist.

The following is threat modeling. Including quick manipulation, data inference, hallucination abuse, and API exploitation, teams define abuse scenarios relevant to LLM security. This guarantees that testing accurately mirrors real risks rather than imagined ones.

Pentesting artificial intelligence depends on difficult adversarial questions. By intentionally pushing models over suitable limits in several contexts, testers replicate hostile performers.

The investigation explores model behavior under stress. Results that violate policies, are erratic, or are dangerous expose exploitable flaws.

Data exposure validation determines whether sensitive information may be retrieved from cross-session interaction or frequent queries.

Professional manual assessment connects technical results with commercial relevance. Human testers find contextual mistakes robots miss.

Assess AI risks in cloud environments, get in touch with Qualysec!

AI Pentesting Tools for Model Security Testing

Model-based penetration testing methods for artificial intelligence aim to assess AI systems apart from the underlying infrastructure or applications. Rather than first checking APIs, servers, or access limits, these tools emphasize how the artificial intelligence model acts when faced with unfriendly environments. Given that several artificial intelligence faults still happen even with a formally safe application layer, this is especially important.

Adversarial input and logic probing

Attackers do not need conventional exploits at the model level. Frequent contact, language manipulation, and inference probing make up their foundation. Testing of the Pentest AI model reveals how it thinks, if it often adheres to instruction hierarchy, and if, under duress, it generates dangerous or erratic results.

- Antagonistic input creation is among the main approaches these instruments use. This covers malformed prompts, conflicting instructions, edge case scenarios, and vague language. These sources hope to expose hidden flaws in logic revealed by daily usage.

- One of the most important components of artificial intelligence penetration testing is model robustness testing. It checks if minor changes in input result in disproportionate swings in output. High variance typically reveals routes with exploitable logic where attackers purposefully affect replies.

- Honesty and bias checks extend beyond just reasonable arguments. Security testing evaluates whether particular linguistic patterns or data lead to erratic or hazardous activity. Models showing aberrant behavior under particular triggers are simpler to misuse.

Exposure testing of training data guarantees models do not learn and duplicate weak inputs. Under GDPR or HIPAA, even partial memorization might cause legal infractions.

AI Pentesting Tools for Prompt Injection Testing

One of the most serious and widespread modern artificial intelligence hazards is the subject of methods of prompt injection testing. Prompt injection avoids abusing program flaws. Traditional security systems miss it because language models assess instructions and assign weight.

These technologies produce actual, several-turn attack arguments instead of just single inputs. Rarely do attackers succeed on only one incorrect prompt. Over a duration of time, they look at, alter, and link instructions. Good artificial intelligence penetration testing needs to match this behavior.

How attackers manipulate instructions over time

By means of contextual manipulation, system prompt leakage testing tries to uncover hidden commands. Small leakage can expose guardrails, internal logic, or elaborate process details that attackers may eventually abuse.

Instruction override testing helps to see if user-supplied indicators might replace system-level restrictions. A model overruling internal policies in favor of user instructions would render safety precautions pointless, a known risk highlighted in AI threat intelligence research.

Role and identity manipulation

Role ambiguity tests models’ ability to be tricked into altering their identities or forgetting constraints. Usually, this occurs when cues comprise nested roles or fictitious circumstances that question educational hierarchy.

Multiple-step chaining enables one to determine whether defenses deteriorate across lengthy chats. Many LLM-driven systems first take off admirably, but as the scenario evolves, they slowly vanish. Rapid injection testing is required for chatbots, copilots, and internal assistants interacting with delicate systems or data, as well as customer service robots.

Discuss your AI security-related concerns or get step-by-step insights on AI application security with our AI chatbot.

AI Pentesting Tools for Data Leakage Detection

Focusing on one of the most underappreciated threats in contemporary artificial intelligence systems, unintentional information disclosure, AI pentesting tools for finding data leaks help. Unlike conventional applications, artificial intelligence models go beyond simple data retrieval. Based on patterns gleaned from instruction and interactions, they deduce, recreate, and generate replies. Data leakage appears innocuous, persistent, and challenging to spot without thorough AI pen testing.

What these tools actively look for

Rather than merely inputs, these technologies study findings from artificial intelligence. Along with personally identifiable information, they search for answers for financial record disclosure, internal papers, system cues, or private training materials. Usually covering multiple dialogues rather than just one answer, leakage calls AI-driven penetration testing to simulate constant questioning and conversational persistence.

One excellent ability is memorizing test training data. Some models unconsciously save sensitive information included in instructional material. By looking for exact reconstruction or verbatim recall, data leakage testing tools assist organizations in evaluating whether their pentest artificial intelligence model findings indicate an intolerable memorization risk.

Training data memorization risks

Another major priority is inferential attacks. Though they do not directly acquire raw data, attackers’ conclusions about sensitive traits derive from deliberately created questions. Through outputs across several responses, these tools assess implicit information leakage in models.

Cross-user data leakage is also taken into consideration. Multi-tenant artificial intelligence systems have tools that examine if one user may get access to another user’s data via conversation history ambiguity, session overlap, or embedded reuse.

Regulatory and compliance impact

This testing fits from a compliance perspective with governmental requirements exactly. Among other systems, GDPA, HIPAA, and SOC 2 demand robust defenses against unwanted data exposure, including AI-generated outputs.

Data leakage AI penetration testing is now required for businesses using artificial intelligence in regulated or customer-facing situations.

You might also like to know about What Is AI Threat Detection? How ML Identifies Cyber Threats in Real-Time.

AI Pentesting Tools for API and Plugin Security

Apps driven by contemporary LLMs seldom operate alone. By calling APIs, plugins, databases, and internal services depending on user commands, they function as orchestral levels. For artificial intelligence pentesting tools, this elevates API and plugin security to the first rank.

Whether artificial intelligence-mediated API requests abide by permission, authentication, and corporate logic restrictions is decided by these technologies. Attacks often include the creation of triggers that cause the model to mistakenly access sensitive APIs, hence influencing artificial intelligence systems. Classic API security solutions ignore this since the API call seems to be authentic.

Another important risk is privileged plugin access. Many artificial intelligence tools make operations easier by plugging in with broad permissions. AI pentesting technologies evaluate if those authorizations may be used through prompt change, hence leading to unauthorized system activities or data exfiltration.

Data exfiltration testing determines if sensitive backend information may be indirectly accessed through tool calls. Artificial intelligence reasoning can organize calls in methods that developers never imagined, even if APIs are protected separately.

Tools confirm whether AI-mediated requests support suitable identity boundaries or if session confusion enables privilege escalation.

These tests are definitely required for businesses using artificial intelligence with CRMs, payment systems, internal dashboards, or cloud services. AI becomes a reliable proxy that attackers may abuse silently without pentesting it. Businesses integrating artificial intelligence should see this level as critical infrastructure.

Schedule a comprehensive security assessment to ensure your APIs and plugins are fully protected.

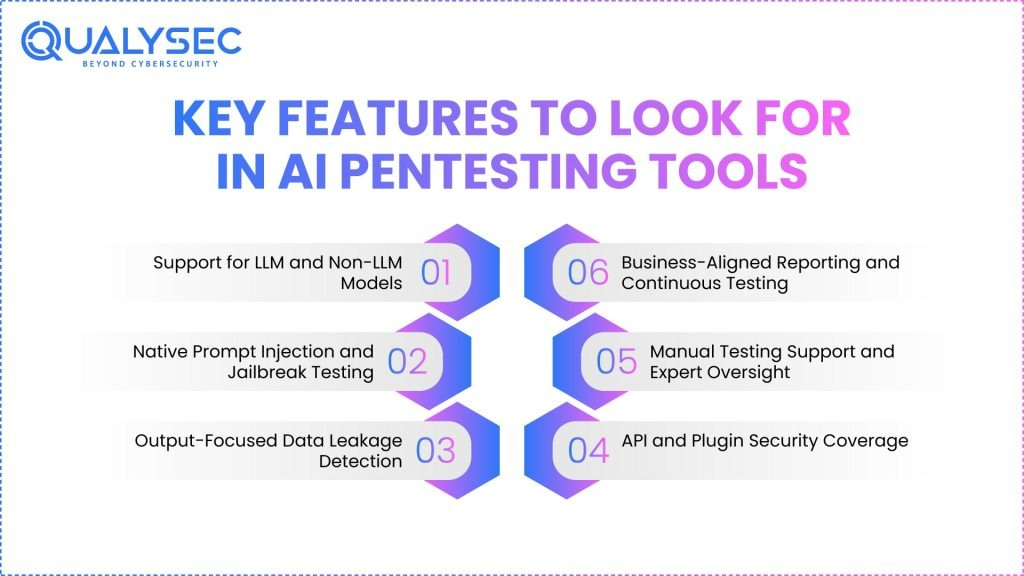

Key Features to Look for in AI Pentesting Tools

The depth of testing should be the priority for security teams reviewing an AI pentesting tool collection, not the number of automated pentesting tools tests a tool might run. Lack of advanced techniques in artificial intelligence systems provides a mistaken feeling of safety via superficial scanning. Good artificial intelligence penetration testing systems discover behavioral hazards as opposed to just surface misconfigurations.

I. Support for LLM and Non-LLM Models

Good artificial intelligence penetration testing tools must support non-LLM models as well as LLMs. Many companies depend on chat-based services, suggestion engines, ranking models, and classification systems. Limiting testing just to LLMs causes significant data gaps in decision-making artificial intelligence systems.

Given unfavorable inputs, non-LLM models can still leak data, show bias, or act erratically. Tools have to evaluate inference logic, feature modification, and model response limitations for a number of artificial intelligence systems. In financial, healthcare, and insurance systems, this is especially important.

Learn also about Cybersecurity for AI in Fintech: Key Risks & Controls

II. Native Prompt Injection and Jailbreak Testing

Bolt-on capability is not a jailbreak or instant injection assault. Every good artificial intelligence penetration testing solution must have these inherent qualities. Static laws are ineffective; these assaults are constantly evolving.

Excellent tools enable direct commands, actual multi-turn conversations, and simulated role conflict situations. They point out how defenses diminish over time, in addition to verifying a single demand. Teams miss the most common artificial intelligence attack vector without depth.

III. Output-Focused Data Leakage Detection

Output analysis is required for AI security; conventional technologies examine inputs. Efficient artificial intelligence pentesting techniques analyze model responses for PII, financial information, internal documents, and artifacts of training data.

This includes memory testing, inference leaking, and cross-session data exposure. GDPR, HIPAA, and SOC 2 compliance depend on output analysis, whereby unintentional disclosure continues to violate.

IV. API and Plugin Security Coverage

Applications based on LLMs rarely work on their own. They call APIs, activate plugins, and interface with internal systems. The AI pentesting tools list has to assess whether conversational interaction could enable the exploitation of these interfaces.

This comprises the above privileged plugins, defective authentication, and hazardous tool call logic testing. Artificial intelligence turns an unmonitored entrance into a fine system devoid of this.

V. Manual Testing Support and Expert Oversight

Automation by itself comes up short. The most effective AI-powered penetration testing approaches let human testers create unique adversarial situations. Tools ought to help with manual prompt creation, behavioral analysis, and attack replays.

Human control is necessary to validate actual company impact and differentiate dreams from exploitable conduct.

VI. Business-Aligned Reporting and Continuous Testing

Reports have to convert results into business risk as opposed to only technical output. Decision makers should be familiar with the cure requirements, chance, and consequence.

Artificial intelligence models sometimes evolve. Continuous pen testing guarantees that security matches timely updates, fine-tuning, and retraining.

Read case studies on AI security to see real-world examples of how organizations protect their AI systems.

Challenges in Testing AI Models and LLM Applications

Testing artificial intelligence systems differs significantly from testing traditional programs. The first major challenge is non-deterministic conduct. Verifying is challenging since one input can result in several different outcomes.

Updates for models cause ongoing transformation. Overnight behavior is changed by retraining, fine-tuning, and swift adjustments. Annual retesting of security staff, not constant, is necessary.

Hallucinations complicate analysis. Every erroneous outcome is not necessarily a bug. Differentiating exploitable conduct from benign delusion requires skilled discretion.

There are no global artificial intelligence security norms. Artificial intelligence security guidelines are still in development, unlike the OWASP Top 10 for web apps. Teams are driven by this to come up with original test plans.

Used alone, automated pentesting tools generate false positives. They could miss subtle logical abuse and mark anticipated volatility as danger.

Especially in healthcare and finance, legal and ethical limitations limit coverage even more.

This is why AI-powered penetration testing calls for tools combined with experienced human testers who have extensive knowledge of AI behavior.

Explore more about OWASP Top 10 LLM Vulnerabilities.

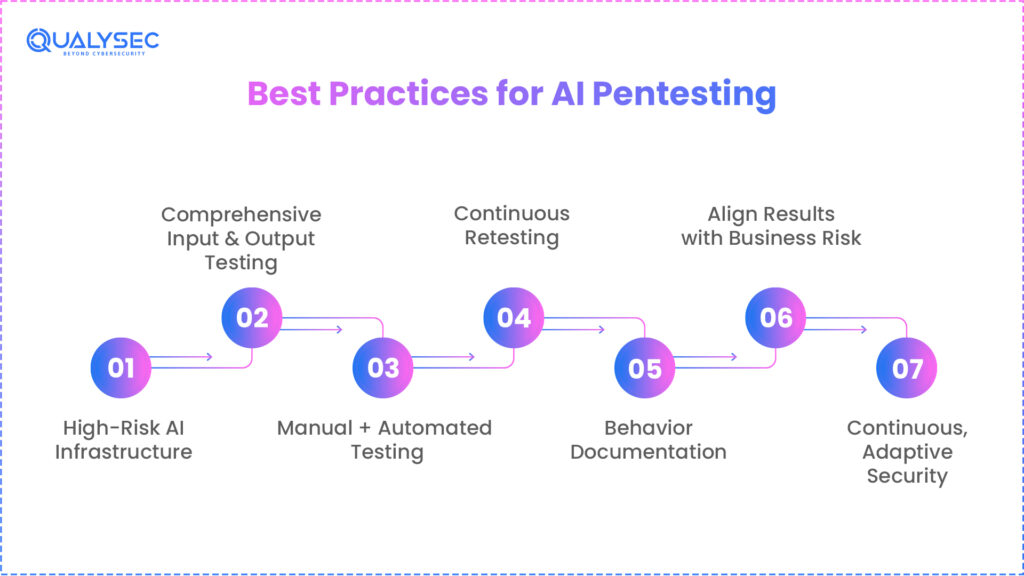

Best Practices for AI Pentesting

Companies should regard artificial intelligence systems as high-risk infrastructure rather than as experimental traits. Users, data, and compliance are directly affected by artificial intelligence choices.

Testing has to include both inputs and outputs. Input validation alone overlooks the dangers of leakage, inference, and hallucination.

Manual adversarial testing should be used with automation. Scale emerges from instruments; people contribute knowledge.

Every model upgrade, retraining cycle, or prompt change necessitates retesting. AI safety is constantly changing, and AI risk assessment must evolve alongside it.

One must properly note both acceptable and unacceptable behaviors. This creates a basis for testing and administration.

Results have to match corporate influence eventually. The top priority for security teams should be problems based on operational, financial, and regulatory risk.

Constant, adaptable, and behavior-driven artificial intelligence security is always on.

Why Choose Qualysec for AI Pentesting Services

Rarely are artificial intelligence security flaws coming from one defect. They arise from intricate interactions between incentives, model behavior, APIs, and data flows. Because of this complexity, checkbox testing falls flat. Good artificial intelligence penetration testing calls for hostile human logic.

Qualysec sees artificial intelligence pentesting from an attacker’s point of view as more than just a tooling technique. Their approach starts with artificial intelligence threat modeling designed for actual corporate operations, thus emphasizing areas where artificial intelligence choices cross sensitive data or important activities.

They conduct thorough penetration testing of artificial intelligence models to assess integrity, robustness, inference risk, and quick manipulation. By mirroring the complexity, terminology, and context that actual attackers use, this goes beyond automated inspections.

Not in isolation, LLM-powered APIs and plugins are evaluated in the whole workflow context. This reveals linked paths of abuse that automated pentesting tools overlook.

Manual adversarial testing is vital. Human testers investigate extreme scenarios, long-form debates, and changing attack paths that AI-powered penetration testing tools cannot completely automate.

Reporting emphasizes business effects instead of only technical discoveries. Financial loss, operational disruption, and compliance exposure correlate with risks.

Discover why organizations trust Qualysec for AI security. Check out our client testimonials.

Conclusion

Artificial intelligence has fundamentally altered both the functioning of the systems and the mode in which attackers act. Built for static applications, security designs fall somewhat short of probabilistic, language-driven systems.

Using experience-led testing together with artificial intelligence penetration testing techniques, businesses may see how artificial intelligence systems fail before attackers exploit them. Rapid handling, data exposure, and AI-powered connections help security teams regain control by validating model behavior.

Your security plan must include artificial intelligence penetration testing if your company employs it.

Speak directly with Qualysec AI security specialists to assess your AI risk posture!

FAQs

1. What are AI pentesting tools and how are they used in security testing?

Designed to assess how well LLM-powered applications and artificial intelligence models perform under harsh circumstances, AI pentesting tools are security testing solutions. Instead of just physical infrastructure, they stress rational errors, quick manipulation, data leakage, and AI-driven API misuse.

2. How effective are AI pentesting tools compared to manual penetration testing?

No. Pentesting AI and adversarial testing to find context-based flaws, logical abuse, and multi-step exploitation pathways meet the need.

3. What types of AI systems can be tested using AI pentesting tools?

AI pentesting tools can examine LLMs, chatbots, copilots, decision engines, AI APIs, AI-integrated business applications, and recommendation systems.

4. Can AI pentesting tools detect prompt injection and data leakage risks?

AI pentesting tools can examine LLMs, chatbots, copilots, decision engines, AI APIs, AI-integrated business applications, and recommendation systems.

5. How should organizations choose the right AI pentesting tools?

Companies should all give top priority to depth, behavioral testing, manual validation support, and reporting that links results to commercial risk.

6. What are the limitations of AI in pentesting?

Fast model development, hallucinations, and false positives call for constant testing and expert examination rather than a one-time assessment.

0 Comments