In 2025, the average cost of a data breach in the United States reached $10.22 million, an all-time high. Recent IBM research attributes the 9% increase to steeper regulatory penalties and the rising sophistication of AI-driven attacks. With almost one in six breaches now involving attackers who use artificial intelligence for phishing or deepfakes, the era of moving fast and breaking things in development is over. Nearly 63% of breached organizations lack any formal AI governance policy to prevent these leaks, and many are now hurrying to close the gap before regulators intervene with severe penalties. ISO/IEC 42001 provides the structured framework your business needs to build a safe and ethical Artificial Intelligence Management System (AIMS).

The standard aims to bring the non-deterministic nature of artificial intelligence under control, where the same input does not always produce the same output. Traditional security frameworks such as ISO 27001 protect static data well, but they leave modern threats like model poisoning and membership inference attacks unaddressed. These attacks let adversaries corrupt your model's behavior or extract information about the data it was trained on. A complete AI audit checklist helps you find these hidden defects before they turn into headline-grabbing disasters. In the 2026 global market, firms without ISO AI certification are increasingly excluded from government and enterprise contracts. This guide walks you through these high-stakes waters so you can keep your competitive edge.

What Is ISO 42001 and Why Does an AI Security Checklist Matter?

ISO/IEC 42001 is the first international standard for establishing and continually improving an Artificial Intelligence Management System (AIMS). Whereas ISO 27001 focuses on general information security, ISO 42001 targets the black box of AI logic and its unique lifecycle. Through a consistent and verifiable process, it ensures that a company manages artificial intelligence rather than merely deploying it. The standard is especially important in 2026, as autonomous agentic AI begins to execute real transactions without a human in the loop. Because AI poses threats that conventional security measures cannot identify, an AI audit checklist is essential.

1. Transparency and Explainability

Can you walk a regulator through an AI decision? This is a core expectation of Explainable AI (XAI) and a major requirement of the EU AI Act. Transparency lets stakeholders understand a model's limits and risks. Without it, your company risks black box failures that are impossible to justify in court.

2. Accountability and Responsibility

Who takes responsibility when an automated system makes an expensive error? ISO 42001 requires you to define specific roles and responsibilities. This prevents blame games between managers and developers. Clear accountability is also how you show auditors that you take AI safety seriously.

3. Data Integrity and Quality

Is your training data representative, lawfully obtained, and clean? Poor data produces biased or failing results. An ISO/IEC 42001 compliance checklist calls for rigorous data management, including checks for PII leaks. High-quality data is the fuel that keeps your AI from becoming a legal catastrophe.

4. Establishment of Long-Term Trust

In an age of deepfakes, a certified AIMS is your best tool for building loyalty. Trust comes from concrete evidence rather than claims alone. Certification tells the market that you follow global best practices. Trust will be the main currency of corporate success in 2026.

Who Needs an ISO/IEC 42001 Compliance Checklist?

ISO/IEC 42001 is a voluntary standard, but in the current legal environment every company that relies on machine learning has good reason to align with ISO AI guidelines. Although it is often associated with tech giants, any company where artificial intelligence touches essential data can benefit from it. The standard is designed to be sector-agnostic, so it applies to a 10-person firm as much as to a global bank. If your company uses predictive analytics, generative chatbots, or automated decision-making, you are most likely within its scope.

It is particularly relevant for:

- AI Providers: Businesses that develop and sell LLMs or neural networks and need to show their products are safe by design to win enterprise contracts.

- AI Users/Deployers: Businesses that integrate tools such as Microsoft 365 Copilot into core processes and therefore need to manage Shadow AI risks.

- Regulated Industries: Financial and healthcare organizations, where AI decisions have immediate legal and life-changing effects.

- Global Exporters: Companies that need to comply with the EU AI Act, whose prohibitions on unacceptable-risk AI practices have applied since February 2025.

- Public Sector Contractors: Vendors bidding for government work in 2026, where agencies give first consideration to contractors that can show an ISO 42001 AI management system.

Why Does AI Security Need ISO 42001 Compliance?

Conventional cybersecurity safeguards data in transit and at rest; AI security must also protect the model's reasoning itself. ISO 42001 is vital because it addresses a threat landscape that conventional firewalls overlook. For instance, instead of stealing your database, an attacker might poison your model so that it starts giving your customers incorrect advice. This is why, in 2026, AI compliance consulting has grown into a multi-billion-dollar business.

- Adversarial Attacks: It establishes safeguards against data poisoning and prompt injection, either of which could turn a chatbot into a security vulnerability.

- Algorithmic Bias: It requires testing to ensure your AI does not generate biased results that cause public uproar.

- Model Reliability: It helps ensure your AI stays accurate and does not drift as the environment around it evolves.

- Stakeholder Trust: Investors gain assurance that your AI is governed by international standards and backed by a recognized certification.

- Resource Efficiency: Standardizing AI processes helps businesses streamline their development pipelines and cut unnecessary compute costs.

Recent Breakthroughs in AI Security Standards (2020–2026)

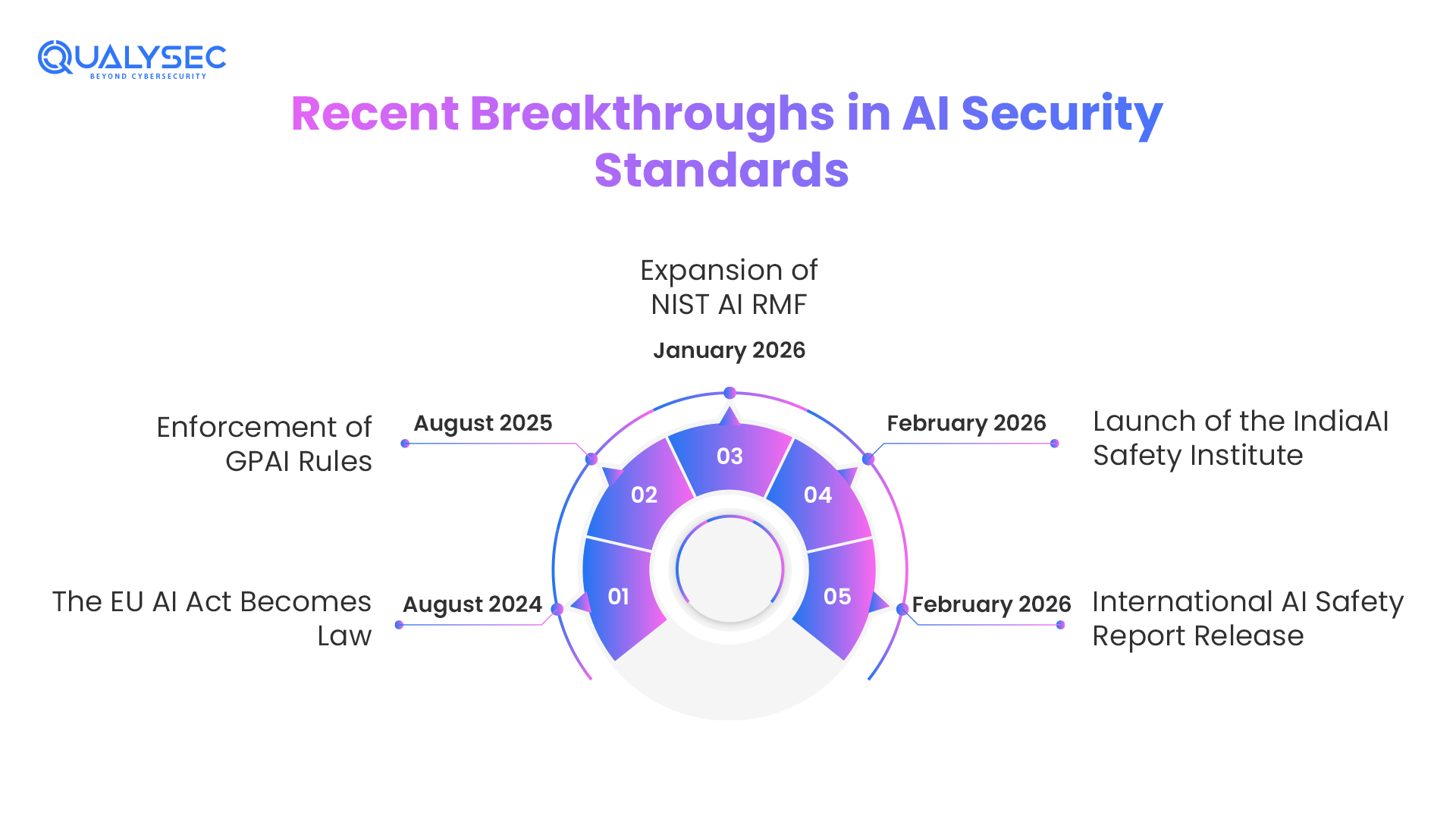

In recent years, the landscape of ISO AI standards and global regulation has changed quickly. Here are five notable developments:

- August 2024: The EU AI Act Enters into Force. This landmark law classifies AI systems by risk level and established the world's first comprehensive legal framework for artificial intelligence. In practice, it turned ISO 42001 into a how-to handbook for meeting those legal obligations.

- August 2025: GPAI Rules Take Effect. The European Union's transparency and governance obligations for General-Purpose AI (GPAI) models began to apply. Providers now have to document the sources of their training material more precisely than ever before.

- January 2026: Expansion of NIST AI RMF. Revised recommendations (v2.0) from the U.S. National Institute of Standards and Technology for handling generative AI hazards now cross-reference ISO/IEC 42001 for worldwide interoperability.

- February 2026: Launch of the IndiaAI Safety Institute. A significant national program under the India AI Mission, created to address safety issues and reduce bias in line with international ISO AI standards.

- February 2026: International AI Safety Report Release. The second edition of this worldwide report, released on February 3, 2026, highlights that 23% of high-performing AI tools still lack fundamental safeguards, a finding that is accelerating AI security audits in the corporate world.

A Real-World AI Governance Challenge: The HR Bias Case

Consider a global HR technology company that used an AI model to screen thousands of job applicants. Within months, the model began to favor candidates from particular demographic groups as historical bias hidden in the training data surfaced. This was a governance failure, not a hack. The company faced millions in potential penalties and a PR catastrophe that threatened its survival.

Using an ISO/IEC 42001 compliance checklist, the company was able to:

- Find the problem during a mandatory AI System Impact Assessment (Clause 6.1.4).

- Use Data Quality Controls (Annex A.7) to rebalance training sets and remove unfair features.

- Set up Human Oversight (Annex A.9) to review and override important hiring recommendations.

This proactive strategy helped the company avoid a large class-action lawsuit and repair its brand image. Rather than just patching the model, it built a permanent AI management system that catches these mistakes in real time.
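One way a team might surface this kind of skew is to compare shortlisting rates across groups. The sketch below is illustrative only: the group labels, the sample data, and the 0.8 review threshold are assumptions made for the example, not values taken from ISO/IEC 42001.

```python
from collections import defaultdict

def selection_rates(decisions):
    """Return the share of shortlisted candidates per group.

    `decisions` is an iterable of (group, shortlisted) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, shortlisted in decisions:
        totals[group] += 1
        if shortlisted:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}

def impact_ratio(rates):
    """Ratio of the lowest to the highest selection rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so there is no gap to measure
    return min(rates.values()) / highest

# Hypothetical screening outcomes, invented for illustration only.
sample = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)

REVIEW_THRESHOLD = 0.8  # assumed internal policy value, not an ISO requirement

rates = selection_rates(sample)
ratio = impact_ratio(rates)
needs_human_review = ratio < REVIEW_THRESHOLD
print(rates, round(ratio, 2), needs_human_review)
```

A ratio well below the chosen threshold would be a reasonable trigger for the human oversight step described above, and the threshold itself belongs in the documented risk criteria.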

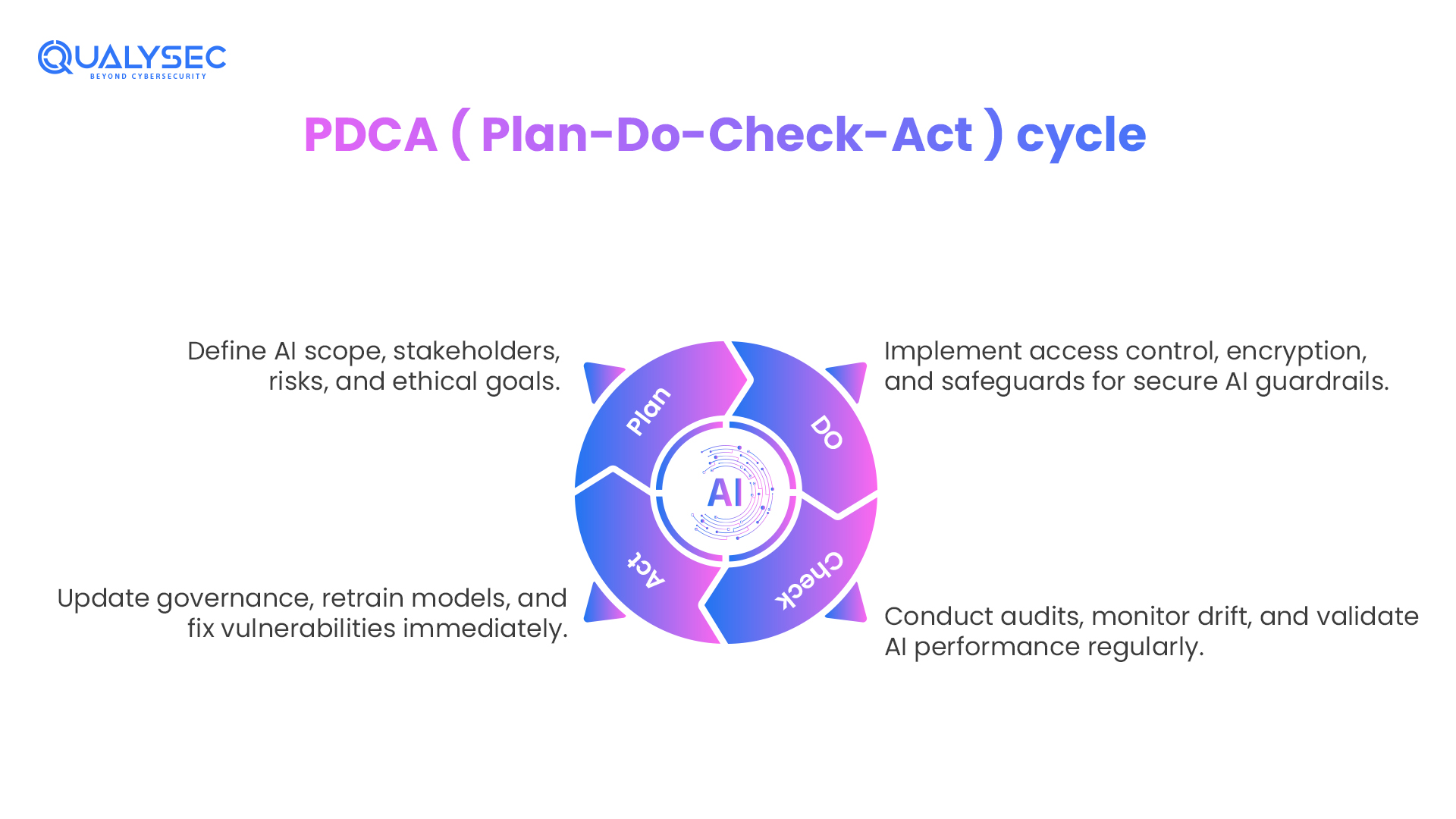

Operational Mode: The Plan-Do-Check-Act Approach (PDCA)

The ISO 42001 AI management system follows the PDCA cycle, making it a continuous process rather than a one-time initiative. This keeps your security in step with the pace at which your AI learns. When your model changes, your audit has to change with it. This constant loop is what makes the drifting nature of machine learning models manageable.

- Plan: Define your AI scope, identify stakeholders, and set ethical goals for your models. This starts with an AI model risk assessment.

- Do: Implement technical safeguards such as strict access control and encryption for training data. This is where your developers build the guardrails.

- Check: Conduct regular AI security audits and use monitoring systems to catch model drift early. You have to confirm that the AI is actually behaving as intended.

- Act: Continuously update your governance rules and retrain your models based on audit results. When you discover a vulnerability, you fix it right away.
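For the Check step, drift monitoring can start with something as simple as comparing the distribution of model scores at launch with the distribution seen in production. The sketch below uses the Population Stability Index (PSI), one common drift statistic among several. The bin edges, the sample scores, and the 0.2 alert threshold are assumptions for illustration, and the function expects non-empty samples with scores between 0 and 1.

```python
import math

def bin_shares(values, edges):
    """Fraction of values in each [edges[i], edges[i+1]) bin.

    The last bin also includes its upper edge.
    """
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break
    # A small floor avoids log(0) and division by zero for empty bins.
    return [max(c / len(values), 1e-6) for c in counts]

def psi(baseline, live, edges):
    """Population Stability Index between a baseline and a live sample."""
    b = bin_shares(baseline, edges)
    l = bin_shares(live, edges)
    return sum((li - bi) * math.log(li / bi) for bi, li in zip(b, l))

EDGES = [0.0, 0.25, 0.5, 0.75, 1.0]
DRIFT_THRESHOLD = 0.2  # assumed rule-of-thumb alert level, tune per model

# Hypothetical model scores, invented for illustration only.
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
live_scores = [0.6, 0.7, 0.8, 0.9, 0.8, 0.9, 0.95, 0.85]

drift = psi(baseline_scores, live_scores, EDGES)
if drift > DRIFT_THRESHOLD:
    print(f"PSI {drift:.2f} exceeds threshold: open an audit finding")
```

A breach of the threshold would feed the Act step: the finding is logged, the root cause is investigated, and the model is retrained or rolled back as the governance rules dictate.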

| Evidence Category | Document/Record Needed | ISO 42001 Clause |
| --- | --- | --- |
| Governance | AIMS Scope & AI Policy | Clauses 4.3 & 5.2 |
| Risk | AI model risk assessment | Clause 6.1.2 |
| Operations | Data Provenance & Quality Logs | Annex A.7 |
| Evaluation | Internal Audit Reports | Clause 9.2 |

Key Documents and Evidence Required for Audit

Auditors rely on cold, hard evidence rather than explanations. To pass an ISO 42001 audit, you need a single, well-organized repository of documents. Without it, certification will be refused no matter how secure your code is.

- Governance Documents: You need an official AI policy signed by top management. It must define the AI scope, including exactly which models the Artificial Intelligence Management System covers.

- Risk Management Records: These include the results of the AI model risk assessment. You have to show a risk treatment plan that addresses model inversion, evasion attacks, and other threats.

- Data and Model Control: You need documentation of where your training data came from (provenance). You also need Model Validation Records showing that someone tested the AI against its original specifications before release.

- Monitoring and Review: You need logs from your performance monitoring tools. If an AI incident occurs, such as a hallucination that gives harmful advice, you must have an incident record documenting how you responded.
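A unified repository is easier to keep audit-ready if gaps are checked automatically. The following is a minimal sketch, assuming the evidence items and clause references from the table earlier in this article. The dictionary layout and the file paths are hypothetical and only illustrate the idea.

```python
# Required evidence mapped to the clause it supports (taken from the table above).
REQUIRED_EVIDENCE = {
    "AI policy signed by top management": "Clause 5.2",
    "AIMS scope statement": "Clause 4.3",
    "AI model risk assessment": "Clause 6.1.2",
    "Training data provenance records": "Annex A.7",
    "Internal audit report": "Clause 9.2",
}

def missing_evidence(repository):
    """Return (item, clause) pairs for required items with no document on file."""
    return [
        (item, clause)
        for item, clause in REQUIRED_EVIDENCE.items()
        if not repository.get(item)
    ]

# Hypothetical repository index: evidence item -> list of stored documents.
repository = {
    "AI policy signed by top management": ["policies/ai-policy-v3.pdf"],
    "AIMS scope statement": ["policies/aims-scope.md"],
    "AI model risk assessment": [],
    "Training data provenance records": ["data/provenance-2026-01.csv"],
}

gaps = missing_evidence(repository)
for item, clause in gaps:
    print(f"Missing: {item} ({clause})")
```

Running a check like this before each internal audit gives the team a short, concrete list of documents to produce instead of a last-minute scramble.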

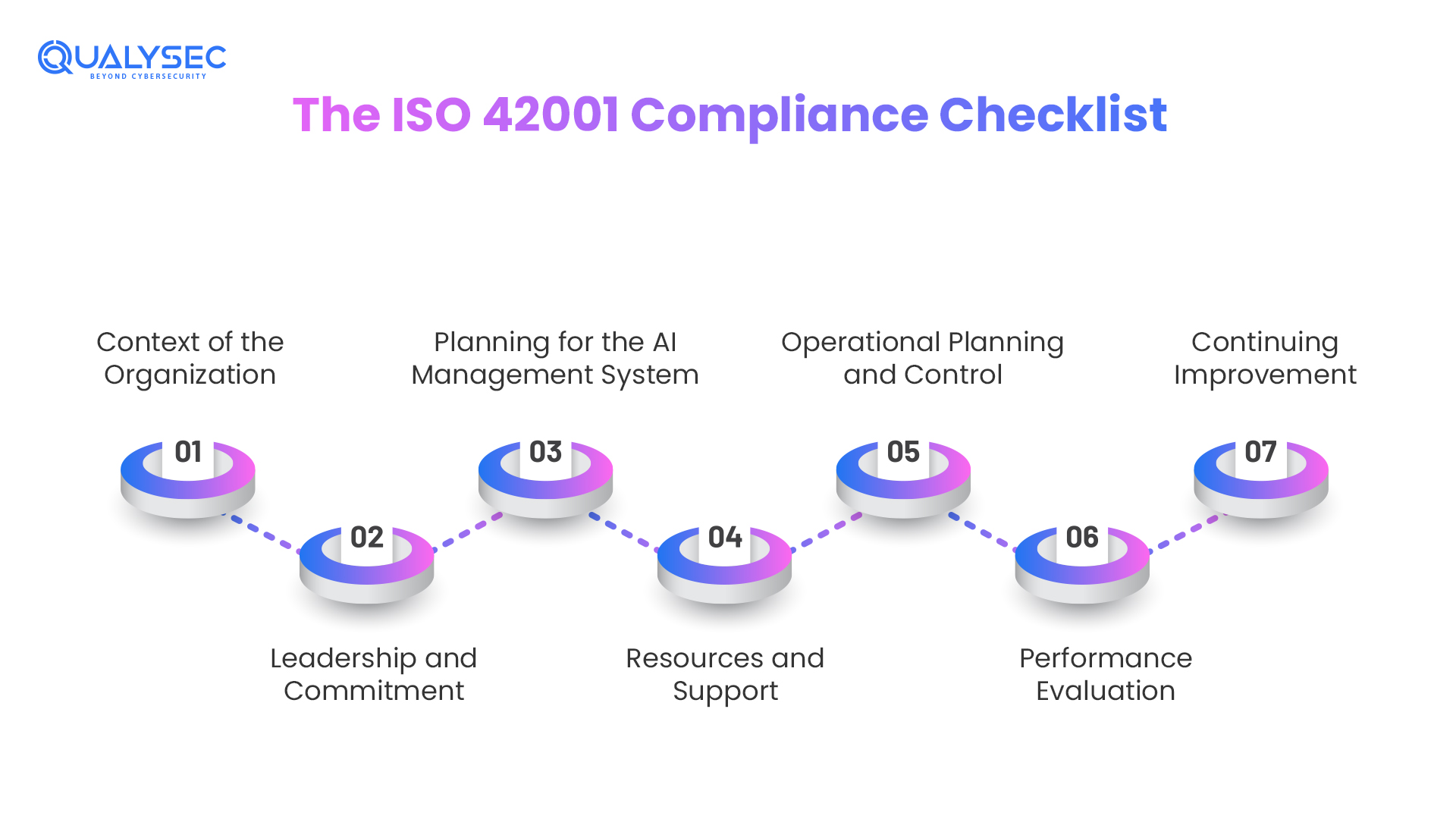

The ISO 42001 Compliance Checklist: Key Audit Questions

During internal AI security audits, make sure your team can answer these high-priority questions from the ISO/IEC 42001 compliance checklist confidently and with supporting documentation.

Context of the Organization (Clause 4)

The most serious mistake companies make is trying to hide their messy tooling. Auditors value honesty above all. If your employees are drafting sensitive emails with some random chatbot, the auditor will find out. You must show that you have taken a thorough look under the hood of your own company.

- The Paper Trail: Build a company-wide AI inventory. List every tool you use. If you have decided that a certain tool is safe to use without supervision, write down how you reached that conclusion.

- Who Gets Hurt?: Don’t just say “the customers.” Be specific. Show how your tech may affect a first-time home buyer differently from a job seeker.

- A Story from the Field: One company collapsed in 2025 because of negligence in its marketing department. The team had been entering confidential client data into a free online generative tool. The rule is not complicated: once your data goes into a tool, it is subject to that tool's terms.

Leadership and Commitment (Clause 5)

You cannot simply hire a smart developer and tell them to take care of the ethics. An auditor wants to see that the people in the expensive suits are paying attention.

- The Paper Trail: Show me board meeting minutes in which people disagreed. When everybody simply signs a paper nobody has read, it is a rubber-stamp job, and auditors can read between the lines.

- The Veto Power: Who can stop the music? There must be someone whose job description clearly states that they have the authority to halt a launch if the tech is unsafe.

- A Story from the Field: An audit turns into a disaster when the company's only answer to a question about ethics is “we hire the best people.” Auditors do not want to hear that you hire good people; they want to know exactly who is accountable for the tech.

Planning for the AI Management System (Clause 6)

A generic risk assessment is a waste of time. You must think like a bad actor who is trying to break your own system.

- The Paper Trail: Show me your Attack Register. Have you tried to trick your own system into revealing secrets? Have you tried to push it toward biased output? Record every attempt.

- Real Numbers: Stop saying “we want it to be fair.” Define what “fair” looks like. Is a 90 percent accuracy rate across all age groups a pass? Put a number on it.

- Treatment of the Flaws: When your technology begins to hallucinate (make things up out of thin air), show what software guardrail you have built to stop the output from reaching users.
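Putting a number on fairness can be as direct as computing accuracy per group and comparing each against a documented minimum. The sketch below assumes the 90 percent figure from the example above as the pass mark. The age bands and records are invented for illustration, and accuracy is only one of several metrics a team might choose.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: iterable of (group, predicted, actual) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}

def failing_groups(records, minimum):
    """Return the groups whose accuracy falls below the documented minimum."""
    scores = accuracy_by_group(records)
    return sorted(group for group, score in scores.items() if score < minimum)

MINIMUM_ACCURACY = 0.90  # assumed pass mark; document the justification for it

# Hypothetical evaluation records, invented for illustration only.
records = (
    [("18-29", 1, 1)] * 9 + [("18-29", 1, 0)] * 1
    + [("50+", 1, 1)] * 8 + [("50+", 0, 1)] * 2
)

failures = failing_groups(records, MINIMUM_ACCURACY)
print(failures)
```

Whatever metric is chosen, the point for the audit is that the threshold is written down in advance and every group is tested against it, so a failure becomes a recorded finding rather than an opinion.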

Resources and Support (Clause 7)

Auditors do not merely ask whether your team is smart; they ask whether it is competent, particularly in AI ethics and security.

- Evidence Item 1: The Competency Matrix. Build a table showing the specific AI safety competencies each role (data scientist, engineer, etc.) requires.

- Evidence Item 2: Training Logs. Move beyond the read-and-sign PDF. Auditors would rather see quiz results or certificates from real AI safety workshops.

- Evidence Item 3: The “Spot Check” Interview. Be prepared: an auditor may take a senior developer aside and ask, “How does our AI Policy change the way you write code?” If they draw a blank, you are facing a nonconformity.

Operational Planning and Control (Clause 8)

This is where the rubber meets the road. It is about making sure your technology does not drift or become dangerous once you release it.

- The Paper Trail: Who confirmed that the tech was fine each time it was upgraded? Record every version change and note the person who authorized the update.

- Vetting Your Partners: You cannot simply take a big name, such as Microsoft or OpenAI, on trust. You must have a record showing that you did your own research on their security procedures before connecting them to your business.

Performance Evaluation (Clause 9)

Regulators and auditors detest stale systems. Launching a system six months ago and never revisiting it is a failure.

- The Paper Trail: I should see monthly “Health Checks.” Is the technology as accurate today as it was on Day 1? If not, show me the report that says so.

- The Warts-and-All Audit: Conduct your own internal audit first. And keep this in mind: find something that is wrong. A lesson from the field: when your internal report states that everything is 100 percent perfect, the auditor will stay twice as long because they will not believe you. Disclose your weaknesses as early as you can.

Continuing Improvement (Clause 10)

Do corrections actually happen? When audits, monitoring, or incidents uncover issues, take corrective action that addresses root causes rather than symptoms, and verify that it was effective.

I) AI Lifecycle Management (Annex A.6)

Annex A.6 requires a rigorous structure for the whole AI lifecycle, so that rapid innovation stays in line with operational safety and accountability. Organizations must establish formal decommissioning procedures to safely retire models that show performance drift or security flaws, and maintain precise version control to enable technical rollbacks. This disciplined approach reduces shadow AI risks and keeps your ISO AI management system robust and defensible under regulatory scrutiny.

II) Data Governance (Annex A.7)

To prevent inadvertent data breaches, Annex A.7 calls for AI training data that is high-quality, ethically sourced, and free of personally identifiable information (PII). To reduce historical bias and meet auditors' expectations for thorough documentation of data sources, businesses must demonstrate repeatable processes for checking datasets for bias. Strict data integrity management protects you from data poisoning attacks and supports compliance with international legislation, including the GDPR and the EU AI Act.
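A repeatable PII check on training text can begin with simple pattern matching before heavier tooling is introduced. The sketch below is a rough illustration: the two regular expressions are deliberately simplistic assumptions that will miss many real-world formats, and the sample rows are invented.

```python
import re

# Simplistic illustrative patterns; real PII detection needs broader coverage.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

def find_pii(rows):
    """Return (row_index, pattern_label) for every row that matches a pattern."""
    hits = []
    for index, text in enumerate(rows):
        for label, pattern in PATTERNS.items():
            if pattern.search(text):
                hits.append((index, label))
    return hits

# Hypothetical training rows, invented for illustration only.
rows = [
    "Great product, would buy again.",
    "Contact me at jane.doe@example.com",
    "SSN on file: 123-45-6789",
]

hits = find_pii(rows)
for index, label in hits:
    print(f"Row {index}: possible {label}")
```

Logging the scan results alongside the dataset version gives auditors the documented, repeatable evidence of data handling that this annex is looking for.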

III) Transparency and Explainability (Annex A.8)

By requiring clear Transparency Notes that describe model logic and operational limitations to end users, Annex A.8 addresses the black box nature of artificial intelligence. To build public trust and prevent deception, organizations should clearly indicate when consumers are interacting with a bot rather than a human. This emphasis on Explainable AI (XAI) goes beyond technical correctness: it creates real corporate accountability by making every AI-driven decision auditable and justifiable.

| Requirement | Mandatory Evidence |
| --- | --- |
| AIMS Scope (Clause 4.3) | Documented list of all AI models and systems included in the audit. |
| AI Policy (Clause 5.2) | An official statement of commitment to ethical and secure AI. |
| Risk Assessment (Clause 6.1.2) | A formal AI model risk assessment report covering bias and security. |
| Impact Assessment (Annex A.5) | Documentation of how AI affects individuals and society. |
| Data Lineage (Annex A.7) | Records showing where training data came from and how it was processed. |

The ISO/IEC 42001 Certification Process

I. Certification Timeline

Achieving certification under ISO/IEC 42001 usually takes six to eighteen months, depending on the size and complexity of the organization.

II. Gap Analysis

Gap analysis compares your current AI practices against ISO 42001 requirements. It involves a methodical review of every clause to determine whether the necessary policies, procedures, and controls are in place. The analysis is performed by internal staff with the relevant experience or by outside AI compliance consultants. The output shows what exists, what is missing, and which areas need improvement.

III. Implementation

Implementation closes the identified gaps by developing technical controls, processes, and policies. This usually means several workstreams running in parallel: one team creates training programs, another implements technical security measures, and a third drafts governance documents. Effective program management keeps these workstreams synchronized and on track.

IV. Internal Audit

Before outside auditors arrive, the internal audit confirms that your ISO AI management system and the related data security compliance controls are functioning effectively. Internal audits act as dress rehearsals for certification audits, letting you find and fix issues in a low-stakes setting. Companies that skip internal audits often receive poor formal audit findings that expose significant problems and delay certification.

V. Stage 1 Audit

External auditors examine the design and documentation of your ISO 42001 AI management system during the Stage 1 audit. This involves determining whether policies and procedures cover all required clauses, checking that the stated scope is appropriate, and verifying that control objectives and risk assessments are well documented. Stage 1 is mostly documentation-based, with a few interviews as needed.

VI. Stage 2 Audit

The Stage 2 audit is the main certification assessment. Auditors assess whether the AI management system works well under real conditions. This phase emphasizes implementation over design. Auditors verify that controls work as stated by examining evidence, interviewing people, observing processes, and testing controls.

VII. Certification

Certification is granted when the Stage 2 audit finds no major nonconformities. Certificates are typically valid for three years and require annual surveillance audits. Certification shows that an organization has adopted a systematic, repeatable approach to AI governance that meets ISO compliance expectations.

How Qualysec Supports Your ISO/IEC 42001 AI Compliance Journey

Navigating AI compliance can seem difficult, particularly for companies that lack internal AI governance expertise. Qualysec helps by translating ISO/IEC 42001 requirements into verifiable, usable controls. Rather than focusing on paperwork alone, this approach puts technical validation of AI systems and governance procedures first.

How Qualysec strengthens your artificial intelligence compliance:

Adversarial AI Red Teaming

Qualysec finds flaws such as prompt injection, abuse scenarios, and model manipulation by simulating real attacks. This testing strengthens the credibility of the organization's AI audit checklist and improves readiness for AI security audits.

Automatic Evidence Gathering

Systems are configured to log activity automatically, trace training data lineage, and record monitored outputs. This keeps the ISO/IEC 42001 compliance checklist up to date, supports ongoing compliance, and reduces manual audit preparation work.

Monitoring of Drift and Bias

Performance fluctuations and bias signals are watched for in AI systems over time. Early detection lowers operational and reputational risk and helps companies satisfy AI model risk assessment criteria.

AIMS Framework Design

Qualysec helps you build an ISO 42001 AI management system tailored to industry-specific risks and legal requirements, and aligned with global AI governance expectations.

Real World:

The Reality Check: We close the gap between documentation and evidence by turning an AI application penetration test into a risk artifact that conforms to Clause 6.1.2. We discover AI-specific attack paths (LLM/RAG/agents/APIs), validate them through exploitation, and convert them into an AI Risk Register with likelihood and consequence scoring plus a prioritized remediation roadmap that you can present in an audit.

The Relevance: We also help you build the paper trail auditors want to see: clear risk criteria, threshold justification, ownership, and traceable decisions, so that your AI risk assessment is repeatable, reviewable, and certification-ready.

Expert Insights: This directly implements the ISO 42001 provisions on AI risk assessment, AI impact assessment, and AI risk treatment by providing technical evidence and formalized risk treatment guidance, not just tooling.
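To make the idea of a scored risk register concrete, here is a minimal sketch. It assumes a 1-to-5 scale for both likelihood and consequence and a multiplicative score, which is one common convention rather than something ISO/IEC 42001 or Qualysec prescribes. The entries, scores, and owner names are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int   # assumed scale: 1 (rare) to 5 (almost certain)
    consequence: int  # assumed scale: 1 (minor) to 5 (severe)
    owner: str

    @property
    def score(self):
        # Multiplicative scoring is an assumption; some teams use a lookup matrix.
        return self.likelihood * self.consequence

def prioritize(risks):
    """Order risks from highest to lowest score for the remediation roadmap."""
    return sorted(risks, key=lambda risk: risk.score, reverse=True)

# Hypothetical register entries, invented for illustration only.
register = [
    Risk("Model inversion", likelihood=2, consequence=5, owner="ML lead"),
    Risk("Prompt injection", likelihood=4, consequence=4, owner="App security"),
    Risk("Training data poisoning", likelihood=3, consequence=5, owner="Data lead"),
]

roadmap = prioritize(register)
for risk in roadmap:
    print(f"{risk.score:>2}  {risk.name}  (owner: {risk.owner})")
```

Recording the scale definitions and the owner for each entry is what makes the register repeatable and reviewable, since another assessor can then arrive at the same ranking from the same evidence.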

Conclusion

Achieving ISO 42001 compliance is a reliable way to show that your AI is ready for the world stage. By following this AI audit checklist, you turn risky experiments into regulated, transparent assets that the market can rely on. The winners of 2026 will be the firms with the most trusted AI, not merely the fastest. Compliance provides the foundation on which that trust is built and maintained for decades to come.

It is about more than avoiding fines; it is about making sure your technology supports your business objectives without creating hidden liabilities. With a certified ISO 42001 AI management system, your firm stays ethical, resilient, and ready for whatever the future holds. You need a firm base to stand on as artificial intelligence evolves at a breakneck pace. Qualysec aims to make your certification path quick, technically rigorous, and secure.

Book a demo or contact our experts to manage your AI security and compliance in one place.

References:

1. ISO/IEC 42001:2023 – AI management systems

2. NIST – AI Risk Management Framework (AI RMF)

FAQs

1. What is ISO 42001 compliance?

It is the process of meeting the requirements of the international standard for managing AI systems responsibly, with a focus on risk, ethics, and security.

2. What is ISO IEC 42001 for AI?

ISO/IEC 42001:2023 is the international standard that specifies how to establish, implement, maintain, and continually improve an Artificial Intelligence Management System (AIMS).

3. What is the difference between ISO 27001 and ISO 42001?

ISO 27001 protects information assets in general; ISO 42001 specifically governs the particular risks and lifecycle of artificial intelligence, including bias and hallucinations.

4. What is ISO 42001’s applicability?

It pertains to any business, large or small, in any sector, creating, delivering, or using AI-based goods or services.

5. Who needs ISO/IEC 42001 certification?

Companies that want to align with international regulations, build stakeholder trust, or secure their AI supply chain.

6. What are the key requirements of ISO/IEC 42001?

Core requirements include human oversight, data quality management, a complete artificial intelligence model risk analysis, and a documented artificial intelligence policy.

7. What are the benefits of ISO/IEC 42001 compliance?

Better model accuracy, less legal liability, a competitive edge in B2B deals, and readiness for 2026 regulatory changes.
