Introduction

From an experimental technology, artificial intelligence has quickly evolved into a base layer of contemporary cybersecurity. Organizations today rely on artificial intelligence in cybersecurity to analyze enormous data volumes, find outliers, automate incident response, and lower human error. Though it has also brought a hazardous reality with growing AI cybersecurity threats, this reliance has produced efficiency. AI systems that fail fail at scale.

The Rise of AI in Cybersecurity and Its Hidden Risk

AI cybersecurity threats are moving more quickly in 2026 than classic countermeasures can keep pace. Attacks against AI models, training pipelines, and inference logic have increased dramatically, according to OWASP and the Cloud Security Alliance. These are not flaws of the theory. Pathways attackers often use to influence outcomes without directly violating infrastructure are actively exploited.

AI systems work like black boxes, which presents difficulties. Often trusting results without insight into decision-making processes, security teams’ attackers break this confidence by contaminating models, adding hostile prompts, and extracting private information via many interactions. These dangers directly affect artificial intelligence in cybersecurity installations in finance, healthcare, SaaS, and government systems.

How the AI Attack Surface Has Shifted

The attack surface broadens past networks and endpoints into intelligence itself as cybersecurity and artificial intelligence keep coalescing. Neither signature-based tools nor conventional firewalls can spot a model quietly learning incorrect behavior. That is the reason learning AI-specific threats is now a fundamental requirement rather than an advanced skill.

This tutorial outlines the most serious AI security hazards in 2026, how they operate, and why they are so challenging to recognize. It also presents realistic defensive techniques based on real-world testing and risk analysis, not theoretical.

Organizations wishing to correctly and safely use artificial intelligence together with cybersecurity should view AI solutions as valuable assets needing regular validation. Those who avoid long-term loss of trust, operational interruption, and regulatory fines.

What Are AI Cybersecurity Threats?

Attacks intended to take advantage of how artificial intelligence systems learn, manage inputs, and produce outputs are known as AI cybersecurity threats. These threats directly control intelligence behavior rather than traditional cyber attacks that target software flaws or misconfigurations. While making hazardous decisions, the system may seem to work.

Attacks seek to influence results rather than interrupt availability in settings where artificial intelligence in cyber defense is used for detection or automation. Often more valuable than a crashed system is a compromised model that encourages destructive activity or hides notifications.

These risks exist throughout the artificial intelligence life cycle. Attackers can add prejudiced or harmful samples during data collection. Poisoned data distorts learning during training. Prompt manipulation changes behavior during deployment; frequent inquiries retrieve sensitive data during runtime.

One cause of the success of these assaults is overdependence on automation. AI-generated alerts, categories, and recommendations have the confidence of security teams. Malicious behavior mixes in with daily activities when attackers subtly affect these outcomes. Compared to rule-based systems, this exposes AI in cybersecurity.

Scale presents yet another difficulty. Attackers may reproduce a flaw in a model design or deployment approach across companies with comparable tools once they spot one. This causes systematic risk among businesses using comparable artificial intelligence systems.

Defending against these threats requires visibility into model behavior, not just infrastructural security, as adoption of AI cybersecurity solutions and AI-driven platforms grows internationally.

Key AI Cybersecurity Threats And Impact

| Threat Type | Primary Target | Business Impact |

| Model Poisoning | Training pipelines | Missed attacks, false trust |

| Prompt Injection | AI interfaces | Data leaks, unauthorized actions |

| AI Data Leakage | Models and APIs | Compliance violations |

| Model Abuse | AI endpoints | Operational disruption |

Don’t Let AI Cyber Threats Compromise Your Business. Contact Us Today to Learn How Our Experts Can Help You Detect, Prevent, and Respond to Emerging AI Security Risks Effectively.

Understanding Model Poisoning Attacks in AI & How It Impacts AI Systems

Because it targets the intelligence layer instead of infrastructure, model poisoning is viewed as among the most serious AI cybersecurity risks. When training data is changed by attackers, the artificial intelligence algorithm picks up wrong patterns that forever influence decision-making. Unlike conventional breaches, poisoned models are still running typically but yielding hazardous effects. This makes detection very challenging and lasting.

Model poisoning fundamentally results from the inclusion of hostile, prejudiced, or strategically modified data into training datasets or feedback loops. Attackers use open datasets, third-party data providers, compromised sensors, user-created inputs, and automated retraining systems. Because machine learning learns patterns during training, even a little amount of malicious data can distort model behavior.

Why Poisoned Models Fail Quietly

Usually, in artificial intelligence used for cyber defense, poisoned models pass quietly instead of violently. Instead of crashing, they gradually lower security performance. A fraud detection model would, for example, approve high-risk transactions; an intrusion detection system would prevent horizontal movement. These irritants are small, ubiquitous, and rather pricey.

Targeted and Precision-Based Poisoning

Targeted model poisoning seeks to alter certain outcomes. Data poisoning by an attacker could cause monitoring systems to reject particular IP ranges or malware always classified as safe. A study of the MITRE ATLAS reveals that model correctness varies greatly, with only five percent of the training data changed. The effectiveness of attacks attracts them to poisoning.

A rather dangerous variety is backdoor poisoning. Here, attackers conceal triggers inside training models. The model behaves maliciously when certain patterns—a certain symbol, word, or pixel configuration emerge. These backdoors only begin to function when the attacker instructs them; otherwise, they remain latent throughout testing and security audits.

Beyond technical damage, the long-run effects of model poisoning continue. Systems driven by artificial intelligence expose companies to blind spots, compliance violations, financial losses, and trust erosion. Recovery can cost millions and take months, usually involving full dataset audits, thorough retraining, model redeployment, and validation.

Traditional Security vs AI Security Focus

| Area | Traditional Security | AI Security |

| Attack Vector | Software flaws | Model behavior |

| Detection | Signature based | Behavior based |

| Testing | Infrastructure | Intelligence validation |

| Risk | Localized | Systemic |

Read also: What Is AI Threat Detection? How ML Identifies CyberThreats in Real-Time.

What Is Prompt Injection in AI Models?

Among the most rapidly developing artificial intelligence security problems impacting PCs powered by enormous language models is prompt injection. It does not take advantage of programming flaws like past cons do. Rather, it alters how artificial intelligence approaches context and language. This is especially dangerous in spoken and automated contexts.

How Prompt Injection Manipulates AI Behavior

Among what looks to be conventional user inputs, including harmful instructions that encourage injection procedures. The gaps that have been opened up in the process of development are usually discovered by the use of Automated Security Testing Tools, which are intended for this purpose.

These principles trump development boundaries, security filters, or system messages. Because language models forecast reactions based on context rather than expected confirmation, they sometimes find difficulty in distinguishing between attacker-controlled instructions and trustworthy guidelines.

Fast injection in cybersecurity applications may cause models to show sensitive information, find interior system logic, or perform unauthorized acts. This group includes

- deactivation of the security systems of artificial intelligence agents

- phishing material creation

- circumvention of authorization protocols

- credential leakage

Natural language is used by attackers to deliberately hide really bad clues. Role-playing scenarios, summaries, or clear demands help instructors to show norms. Because there is no fixed payload or signature, traditional input validation and WAF regulations cannot correctly detect these attacks.

Linking artificial intelligence systems to databases, CI pipelines, APIs, or cloud automation tools greatly raises the risk. One amazing prompt injection can cause data exfiltration, set change, or harm operations over several unmonitored systems.

Why Prompt Injection Is Now a Top Enterprise Risk

Early injection is now seen by OWASP as one of the most detrimental for large language models. This implies a shift from thinking to systems use. Once generative artificial intelligence is embraced, prompt injection will turn into a major assault vector aimed at ecosystems for cybersecurity and artificial intelligence.

Prompt injection simulation, AI logic abuse testing, and LLM security validation are included in Qualysec’s advanced API and application security testing! Read Case studies.

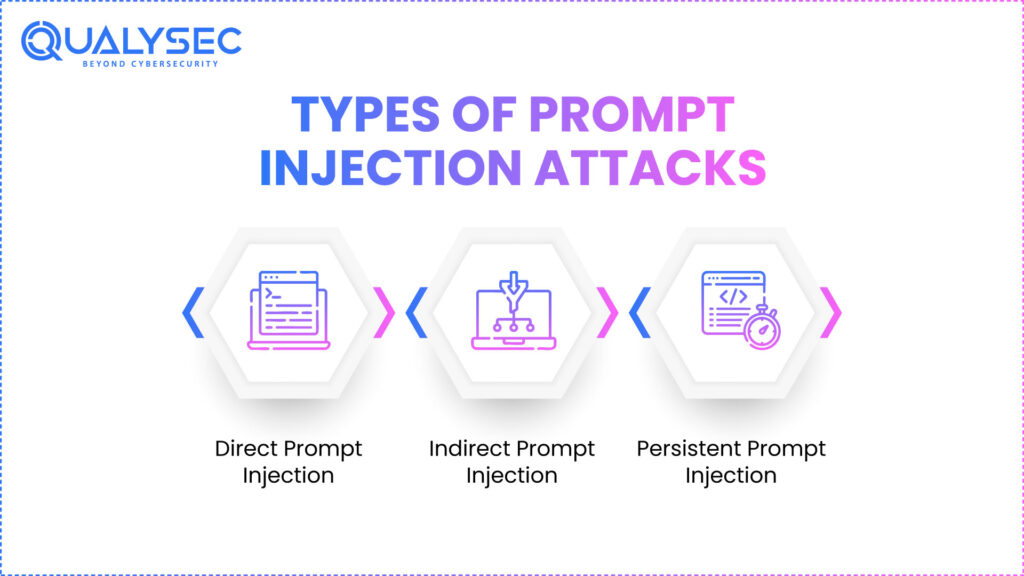

Types of Prompt Injection Attacks

More than one specific strategy, prompt injection assaults are a kind of behavioral ploy meant to influence how large language models interpret context, power, and purpose. These attacks use the fact that AI algorithms fall short of conventional software in their application of security measures. They offer ones instead of answers grounded on likelihood, sequence prediction, and situational weighting that attackers purposefully change.

1. Direct Prompt Injection

Real-time interaction with an artificial intelligence system causes straightforward prompt injection. The attacker sends ready instructions directly through conversational interfaces, co-pilots, or dashboarding powered by artificial intelligence. Claiming dominance, urgency, or role changes, these directions fly in the opposite direction of system alerts or security limitations.

Direct prompt injection is especially dangerous because of its speed. Neither outside systems, malware, nor continuing processes are required for the assault. One prompt requesting the model to disregard past constraints or current internal data could soon cause credential leaking, sensitive information disclosure, or dangerous conduct.

Direct prompt injection can control SOC assistants, incident response bots, or compliance systems in cybersecurity situations, including artificial intelligence. This could either misclassify threats, silence alerts, or give internal security logic to attackers.

Traditional input validation fails here because the content is linguistically exact and usually difficult to distinguish from genuine queries. Only the background for target verification is provided by the model.

2. Indirect Prompt Injection

Indirect prompt injection is far more sophisticated and extremely hard to detect. Malevolent directions are hidden within the external knowledge that the artificial intelligence next uses. The exposed gaps often require AI security tools to identify and control. This includes web pages, PDFs, emails, logs, API responses, or knowledge base records.

When it looks over or minimizes this extraneous debris, an artificial intelligence model incorrectly executes the embedded commands. For instance, a damaged website could instruct artificial intelligence to evade limits during summarization or to collect user information.

Indirect prompt injection is extremely harmful in business settings where artificial intelligence systems process data from many unreliable sources. This creates a linked attack path whereby attack vectors are content supply chains.

Attribution is exceedingly challenging, as the dangerous prompt is not evident during user interaction. Many security departments find the initial foundation of accord difficult to find.

3. Persistent Prompt Injection

Long-term, extremely potent danger is regular, prompt injection. In this version, malicious instructions are hidden deep within persistent storage—including logs, vector databases, embeddings, memory caches, or even training datasets.

These instructions keep affecting model behavior long after the initial input has been erased. Often challenging and executing the hostile environment, the artificial intelligence generates ongoing security flaws.

This greatly complicates rehabilitation. Just removing arriving data is insufficient. Organizations may have to completely recreate vector stores, retrain models, or purge embeddings.

Every version of prompt injection works since artificial intelligence systems rank contextual relevance and token probability above overt law enforcement. This is the reason why AI with cyber security demands behavioral analysis rather than solely application scanning.

You might like to know about the AI Security Audit Checklist: How Experts Test AI Models and Applications.

Risks and Consequences of Prompt Injection

Prompt injection is not only a technological failure. It creates methodical commercial risk that compromises confidentiality, integrity, and access across related systems.

Data exposure is among the most immediate dangers. Prompt-injected artificial intelligence systems can expose employee information, client records, credentials, internal documents, or proprietary logic. This immediately generates legal obligations and compliance violations in regulated areas.

Unauthorized actions by AI systems

Another major consequence is unauthorized action performance. AI agents can approve transactions, halt surveillance, change settings, or produce fictitious logs by means of integration with cloud infrastructure, CI pipelines, or financial systems. These actions often seem credible since they are performed by trustworthy artificial intelligence systems.

Furthermore, damage by fast injection is a matter of honesty. Artificial intelligence systems used for risk assessment, fraud detection, or event response might generate incorrect ideas on which security teams rely. This generates blind spots that attackers more often profit from.

Large-scale operational impact

Since artificial intelligence systems gradually operate autonomously, their blast radius from quick injection is much greater than that from human error. One defective model might influence tens of thousands of procedures all at once.

From a governance perspective, these strikes show weaknesses in cybersecurity and AI control since responsibility for decisions made by artificial intelligence is unclear. This increases the risk of operational and compliance issues.

Worried about prompt injection risks? Contact us today for a comprehensive security assessment to identify vulnerabilities and protect your AI systems.

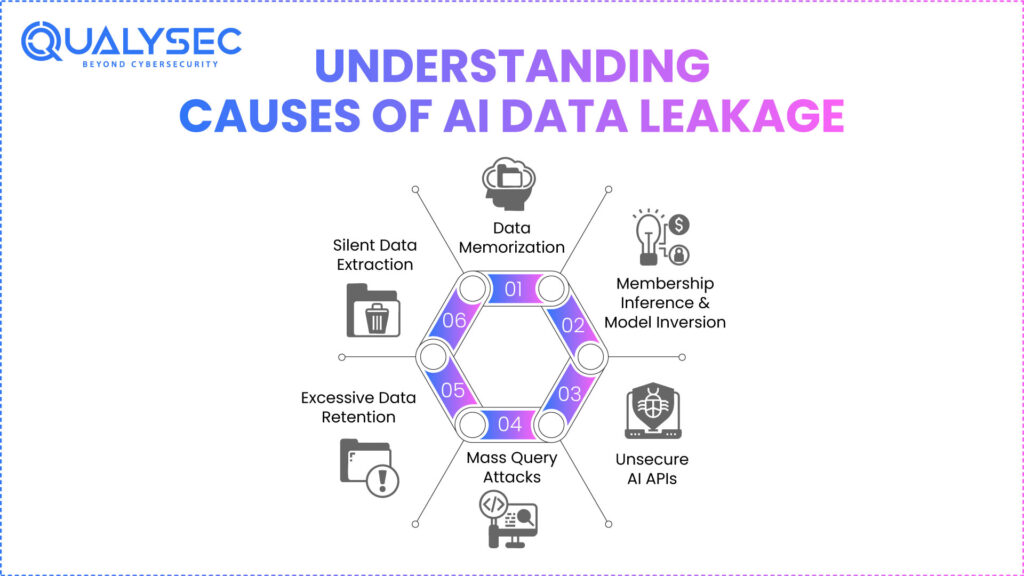

Understanding AI Data Leakage & Common Causes of Data Leakage in AI Systems

Sensitive information unintentionally revealed through artificial intelligence outputs, inference patterns, logs, embeddings, or memory systems causes AI data leakage, often caused by underlying AI security vulnerabilities. Unlike traditional breaches, leakage typically happens quietly without setting security alarms.

Data memorizing is the major motivator. Large language models trained on unmasked data can recall personal, financial, or proprietary data. Attackers gradually collect sensitive information by means of repeated, organized queries.

Two more major factors are membership inference and model inversion. Attackers could employ these techniques to locate regions of the training dataset or determine whether specific information was utilized during training. This reveals personal data and intellectual property.

Uncertain AI APIs raise the chance of leakage by a considerable degree. Utilizing openly accessible endpoints, attackers may automatically send tens of thousands of queries to investigate the model’s reactions till subtle patterns surface.

Too much prompt and response retention aggravates the problem. Logs, vector databases, and analytical pipelines often record sensitive interactions devoid of adequate retention restrictions or access limitations.

These imperfections turn artificial intelligence systems inside AI cyberthreat situations into quiet data extraction channels.

Explore more about Data Security Services in Cybersecurity: Protecting Sensitive Information in the Digital Age.

Compliance and Privacy Risks of AI Data Leakage

Direct and serious legal repercussions result from artificial intelligence data leaks. Increasingly, regulators see artificial intelligence outputs as controlled data processing operations rather than as exempt experimental behavior.

Violations of HIPAA, CCPA, GDPR, and industry-specific regulations cause penalties, required breach notifications, contract-based penalties, and reputation harm. Fines can total four percent of worldwide income in some areas.

Delayed detection is one of the most hazardous factors. Many companies only find leakage after models have been in manufacturing for months, thereby increasing exposure.

Leaked information frequently drives secondary attacks, including identity theft, fraud, and social engineering, therefore broadening the effects far beyond the original event.

This makes deliberate artificial intelligence security governance a must rather than something that is optional.

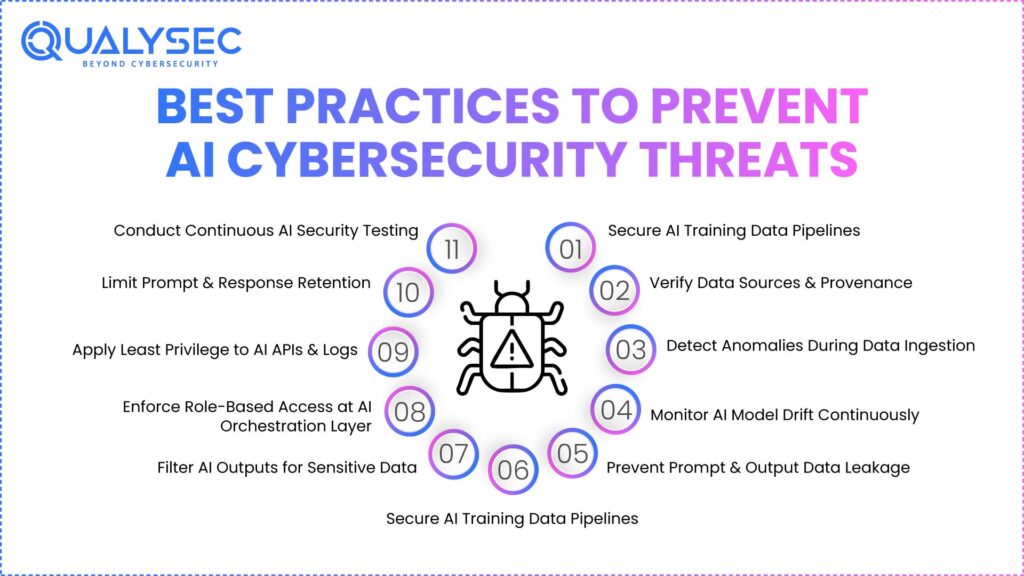

Best Practices to Prevent AI Cybersecurity Threats

Preventing artificial intelligence in cyber security threats calls for controls developed expressly for artificial intelligence behavior rather than for changes in existing security systems.

By verifying data sources, conducting provenance inspections, and spotting anomalies during ingestion, groups must ensure their training channels. Constant monitoring of model drift is vital for detecting poisoning or behavioral deterioration.

Rapid strengthening is absolutely necessary. To stop sensitive data exposure, system prompts must be segregated, inputs cleaned contextually, and outputs filtered. At the AI orchestra layer, role separation and access limits have to be observed.

Least privilege ideas should guide access to AI APIs, embeddings, and logs. Retention rules have to restrict the duration of responses, and prompts are kept.

Most importantly, artificial intelligence technologies demand ongoing security testing. One-time audits are inadequate since attackers adjust, interfaces alter, and models grow.

See how AI security risks are identified and mitigated. Download a sample pentest report today.

Role of AI Security Testing and Penetration Testing

Because it assesses behavior, not merely setup or code, artificial intelligence security testing is fundamentally different from conventional security testing. AI security testing looks at how models react when purposely distorted rather than at whether libraries are outdated or ports are open. This includes seeing how an artificial intelligence system responds to adversarial inputs, malformed prompts, poisoned data, or ongoing probing attempts over time.

What AI Security Testing Actually Simulates

Simulating actual hostile scenarios is a main goal of AI security testing. This entails consciously reproducing attacks, including model poisoning, quick injection, model evasion, data extraction, and AI-driven automated misbehavior. These simulations show how artificial intelligence systems fall quietly, usually without setting exceptions or triggering notifications. Because the system technically runs, conventional scanners miss these problems.

Why AI Penetration Testing Goes Beyond Traditional VAPT

Unlike traditional penetration testing, AI penetration testing covers the whole artificial intelligence life cycle. To find poisoning hazards, testers look at data ingestion channels, evaluate training processes for integrity checks, and confirm inference logic for tampering flaws. This whole perspective is essential since faults in artificial intelligence often start far above where the harm becomes evident.

Testing at the inference level has an especially significant importance. Attackers seldom need training line access. Instead of that, they take advantage of openly accessible artificial intelligence interfaces, APIs, chatbots, and automation agents. AI penetration testing determines whether these interfaces can be forced to disclose sensitive information, conduct unauthorized activities, or circumvent safeguards by means of ongoing contextual manipulation.

Risks Inside AI Memory and Embeddings

One more major research field is the storage of memory and embeddings. Long-term memory systems and vector databases frequently hold sensitive contextual data. AI security testing assesses if embeddings may be poisoned, be subject to reconstruction attacks, or consistently influence future outcomes. This is a major blind spot in most AI deployments.

AI security testing also assesses orchestration layers, where AI systems interact with cloud services, CI/CD pipelines, databases, and third-party tools. A compromised AI agent with elevated permissions can cause cascading failures across environments. Testing these integrations reveals risks that do not exist in standalone applications.

Why Specialized AI Security Teams Matter

This is where experienced AI cyber security companies provide measurable value. They combine adversarial machine learning techniques, manual reasoning, and threat modeling to uncover risks that automated tools miss entirely. Without this expertise, organizations often deploy AI systems that appear secure but are fundamentally unsafe under real attack conditions.

Assess AI risks in cloud environments,consult with Qulaysec now!

Importance of AI Risk Assessment And Future of AI Cybersecurity

The procedure of turning technical flaws in AI systems into commercial, legal, and operational effects is known as AI risk assessment. Beyond vulnerability identification, it asks a more crucial question: what happens if this artificial intelligence system malfunctions, is tampered with, or behaves inappropriately? As AI security systems acquire decision-making power, this change in attitude is crucial.

Model Sensitivity and Business Impact

A good AI risk analysis assesses model sensitivity, therefore determining how much harm wrong results may generate. For instance, an artificial intelligence system approving medical choices, security alarms, or financial transactions carries much less risk than a recommendation engine. Knowing this sensitivity lets businesses properly rank protection initiatives.

Automation significance is yet another crucial aspect. AI systems that function independently without human review pose significantly more risk. AI risk assessments pinpoint areas without human monitoring and those where it is feeble or bypassed. Because faults propagate swiftly and at large, these targets frequently come first for attackers.

How AI Authority Levels Change Risk

Central to risk evaluation is decision-making power. While some artificial intelligence systems act directly, others counsel humans. The results of compromise worsen greatly when artificial intelligence is given permission to approve access, execute code, or start reactions. These authority levels are mapped to threat likelihood and probable impact via risk assessment.

Equally crucial is exposure analysis. Higher attack likelihood exists for AI systems tied to external APIs, public interfaces, or unverified data sources. AI risk analyses locate where manipulation is most practical by means of data source, trust borders, and interactive surfaces. All of these are supported by AI threat intelligence to identify emerging risks and attack patterns.

Why AI Risk Assessment Must Be Continuous

Looking forward, ongoing validation rather than static controls will drive artificial intelligence in cyber defense. Through retraining, updates, and new integrations, artificial intelligence solutions develop. Thus, risk analyses have to be continuous, not yearly or one-time events.

Organizations that don’t use ordered artificial intelligence risk assessment will more and more find that their AI-driven security systems turn into liabilities rather than protections. By contrast, individuals who include risk assessment in artificial intelligence governance will be able to confidently and securely scale AI adoption.

Speak with AI security experts about AI cybersecurity threats. Contact us now!

Conclusion

Along with growing AI adoption comes accelerating AI cybersecurity threats. Model poisoning distorts education, rapid injection paralyzes decision-making, and data leakage reveals confidential data at large. AI cyber security companies, as a major security asset, use continuous testing and have solid governance, will confidently, rather than anxiously, lead the direction of artificial intelligence in cyber security.

Learn more about AI cybersecurity threats. Schedule a meeting with our expertsto tackle model poisoning, prompt injection, and data leakage.

FAQs

1. How will cyber security be affected by AI?

Though it introduces new attack vectors like model tampering, prompt injection, and data leaks, artificial intelligence increases detection pace and scope. Rather than simply safeguarding infrastructure, cybersecurity will become increasingly oriented toward verifying artificial intelligence behavior.

2. What is AI in cyber threat intelligence?

AI in cyber threat intelligence helps to identify patterns, forecast assaults, rank hazards, and process huge datasets using machine learning. Still, these systems need to be protected against tampering.

3. What are the most dangerous AI cybersecurity threats today?

Among the most serious threats endangering AI systems are model poisoning, quick injection, data leakage, model inversion, and AI-driven evasion assaults.

4. What is an AI cyber attack?

An artificial intelligence (AI) cyberattack targets AI systems or employs AI to improve deepfake phishing, automated evasion, or clever malware that is, classic attacks.

5. What are the risks of AI in cybersecurity?

If AI behavior is not regularly verified, risks include silent model failure, data exposure, automation abuse, prejudiced decisions, and legislative breaches.

6. Why do traditional security tools fail against AI threats?

Classic instruments emphasize code, signatures, and infrastructure. Behavior, learning, and context, which demand different testing and monitoring techniques, are targeted by AI threats.

0 Comments