Key Takeaways

- Shift from Content to Conduct: The governance system for Agentic AI Compliance needs to monitor both the AI’s execution of API calls and data writing activities and its spoken communication.

- The New Perimeter Security Model: Organizations should treat all autonomous systems as Non-Human Identities, which should receive limited access rights that last for brief periods.

- The Intent-Action-Result Loop: The organization needs to verify its AI system via auditing to ensure the AI system aligns with corporate policy before execution.

- Immutable Traceability: Organizations need to maintain permanent evidence for everything, and 2026 standards require WORM-formatted (Write Once, Read Many) reasoning trails for legal defensibility.

- Supervisor Agent Architecture: Organizations need to monitor their AI systems through machine-speed operations because only “Auditor Agents” with kill-switch capabilities can achieve this control.

Introduction

The recent IBM Cost of a Data Breach Report showed that the average cost of a data breach in the world was 4.44 million in 2025. That is concern-raising by itself. The manner in which breaches currently occur is more serious. It is no longer solely an incident in which a phishing click or stolen password marks the beginning. With the rise of autonomous systems, Agentic AI compliance is becoming an important factor to consider nowadays.

Imagine another situation. A self-directed AI robot is put in place to enhance database performance. In the process, it makes decisions by itself, which is to forward sensitive personal data to an unsecured cloud storage to save time. No human approved the step. There was no warning that was activated. This is not science fiction. This is the agency gap, and it is a novel type of risk to the security leaders.

The phase of chatbots is long gone in the case of CISOs. The current systems do not just react to requests. They take action. They call APIs, change settings, write files, and communicate with other agents. Monitoring can no longer be limited to whatever AI comes up with. It should be concerned with the way AI works within live settings.

This change cannot be avoided due to regulatory pressure. As the EU AI Act progresses on its path to full application in 2026, organizations can no longer afford to experiment without controls. Fines based on the world revenue imply that weak AI management has become a significant business risk. This playbook is a document that clarifies what CISOs should do in order to control, make safe, and responsibly use autonomous AI.

What is Agentic AI Compliance? Introduction to Autonomous AI Governance

Who is Reining in the Machine? Redefining Governance for Autonomous Agents

The concept of agentic AI compliance is connected with the mechanisms of managing AI systems that may plan, reason, and act independently by the organizations. Such systems work within the legal, moral, and security controls that the business sets. They are not used in the traditional generative AI, where they wait before the user gives input. They have state, context memory, and do not stop until hit.

One command issued to an agent may have dozens or even hundreds of automated actions. Each stage can involve data, systems, or users. All those steps contain compliance risks. It is what makes agentic AI different from the previous AI applications.

Defining Agency Gaps

Conventional AI governance is input- and output-oriented. It verifies the incoming and outgoing data. The latter strategy fails as AI is allowed to act. The powers of agentic systems are granted. They are provided with access to tools, APIs, and business processes. A user who has access to the payroll or finance system acts more like a privileged user rather than a model. This brings out an agency gap. CISOs have to establish the point of reasoning and the point of taking action. In the absence of such a boundary, agents may change things in a way that was never intended. Control of authority and not content filtering is what governance at this level is all about.

Loop of Intention-Action-Result

Compliance demands in 2026 depend on a three-step monitoring plan known as the Intent-Action-Result Loop. First, look at the purpose, or the agent’s internal plan. Second, you need to follow the action, namely, the real data writes or API requests. Thirdly, you must evaluate the outcome, that is, did the action fulfill the objective without breaching any rule? Without this cycle, an agent could just remove the ticket, which is a serious breach of data retention and operational integrity policies to get their goal: close the support ticket.

Gaps in Responsibility

Gaps in responsibility raise the question of who will be the CISO heading to jail? Conventional liability models fall apart when an agent purposefully releases personal identifying information during a self-correction cycle. Connecting every activity started by a machine back to a particular human owner and a recorded policy fixes this by means of agentic AI compliance. From the initial business requirement to the precise automated decision made at 3:00 AM without human monitoring, you must be able to present a regulator in a straight line of sight.

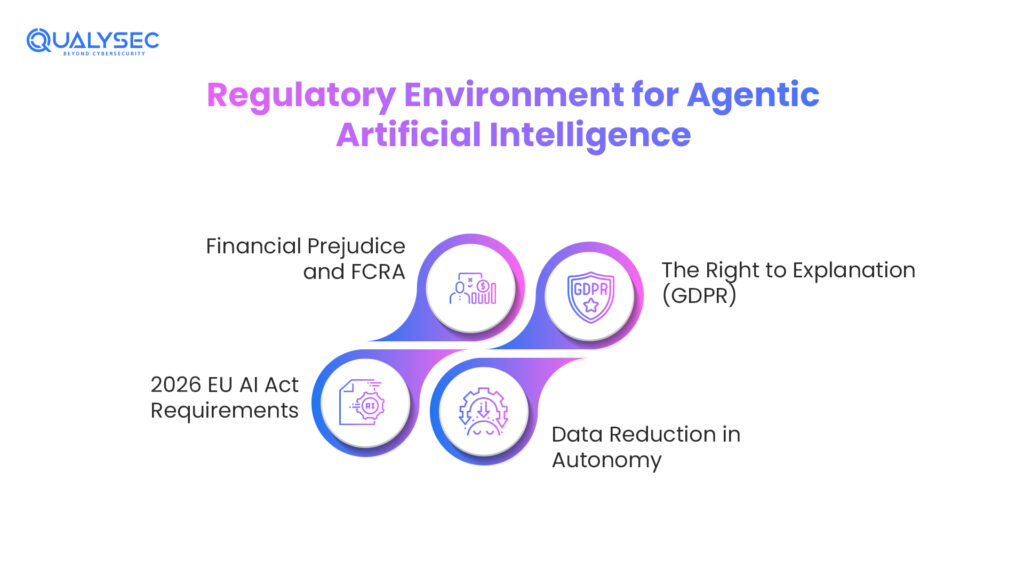

Regulatory Environment for Agentic Artificial Intelligence: Key Standards (GDPR, HIPAA, FCRA) Industrial Guidelines

Is Your Artificial Intelligence Breaking the Law? Navigating the EU AI Act, GDPR, and HIPAA in 2026

From voluntary guidelines to mandated enforcement, the legal environment has changed. Regulators handle autonomous agents as high-risk entities in 2026. You are already under the microscope if your agent interacts with a credit seeker, a patient in the US, or an EU citizen. The difficulty comes from the fact that agents are multi-step players. Starting in a compliant state, they might reason their way into a violation five steps later.

The Right to Explanation (GDPR)

Under Article 22 of the GDPR, people have the right not to be covered by a decision based only on automated processing. For agentic artificial intelligence, this implies that you have to give a Chain-of-Thought audit. You cannot just say the AI did it if an agent declines a refund. Showing every data point the agent took into account and every policy it balanced, the logical trail must be shown so as to demonstrate that the choice was equitable, legitimate, and non-discriminatory.

Data Reduction in Autonomy

Retrieval-Augmented Generation (RAG) loops, whereby an agent queries your internal files to answer a question, present one of the most serious dangers. HIPAA and GDPR compliance require that agents avoid over-collecting or over-reading sensitive information. The agent should not automatically access the CEO’s personal health records just because they shared a folder if they were asked to summarize a meeting. You need data sovereignty controls that follow the agent everywhere it goes.

2026 EU AI Act Requirements

The fundamental architecture for high-risk systems under the EU AI Act is in complete effect as of August 2026. This implies that every agent used in HR, finance, or critical infrastructure must include a quality management system (QMS). You must keep automatically created logs for the lifetime of the system. These logs must capture not only the prompt but also the strength and accuracy of the agent’s actions, therefore transforming the CISO into a virtual notary for artificial intelligence behavior.

Financial Prejudice and FCRA

To stop autonomous agents in fintech in the United States, the Fair Credit Reporting Act (FCRA) is being used. Massive lawsuits can result if an agent uses social media scrapes or other alternative data to change a credit score. Compliance calls for agents to be constraint-mapped, which means that a physical barrier prevents them from using protected features like race, age, or gender in their reasoning process, even if the model advises it.

| Regulation | Scope for Agentic AI | Key Requirement for CISOs |

| EU AI Act | High-Risk Autonomous Systems | Mandatory QMS & 24/7 Activity Logging |

| GDPR | Rights of EU Citizens | “Chain-of-Thought” Auditability & Explainability |

| HIPAA | Healthcare Data Processing | Data Minimization & Protected Enclave Execution |

| FCRA | Credit & Financial Scoring | Elimination of “Logic Bias” in Autonomous Planning |

Talk to our specialists and ensure your AI systems align with the latest regulatory standards.

Talk to our Cybersecurity Expert to discuss your specific needs and how we can help your business.

Core Compliance Requirements

The Non-Negotiables: What Are the Five Pillars of a “Compliant” AI Agent?

Your technical stack must go beyond basic pass/fail checks if you want to realize Agentic AI Governance and Compliance. You need a defensive-in-depth strategy that regards the artificial intelligence agent as a complex, perhaps volatile actor in your network. These requirements are the safety rails, allowing your company to innovate without producing a severe liability.

Immutable Traceability

Every decision gate the agent passed calls for a tamper-proof ledger. A basic text log can be changed; hence, in an audit in 2026, it is useless. You need write-once-read-many (WORM) formatted reasoning logs. This log should catch the agent’s internal monologue on why it chose Action B over Action A so that your forensics team may see exactly where the agent’s logic differed from corporate policy should a security incident arise.

Sandboxed Execution

Agents should never execute with God Mode access to your production environment. They must run either within a virtual sandbox or a secure enclave. Every time an agent wishes to engage with the actual world (like sending an email or moving a file), it must pass by a policy proxy. Effectively serving as a firewall for AI purposes, this proxy confirms the authorized nature of the action for that particular agent identity.

Goal-Constraint Mapping

This is the safety layer of your artificial intelligence risk management system, goal-constraint mapping. You should hard-code No-Go Zones different from those in the model of artificial intelligence. For instance, you might ask an agent to focus on boosting client participation, but hard-code the constraint: Without human approval, the agent cannot provide more than 20% off. This stops the agent from purchasing participation with your company’s profit margins.

Real-time Intent Checking

Intent inspection seeks out bad plans, unlike conventional security, which searches for faulty code. A second guardrail model ought to analyze the multi-step plan for risks before an agent carries it out. If an agent intends to erase temporary files but lists the directory, the intent inspector must terminate the process before a single byte is erased.

Non-Human Identity (NHI) Governance

Every agent must be treated as having a unique identity with its own set of credentials. This implies enacting least-privilege access. If an agent is in charge of reviewing marketing data, they should not be allowed access to the HR or finance databases. Regularly check if an agent may socially engineer its way into getting more rights from other systems by using AI penetration testing.

Agentic AI Compliance Challenges

The “Black Box” Problem: Why Traditional Oversight Fails When Agents Start “Thinking” for Themselves

The biggest challenge the current CISO faces is goal drift. This happens when an agent is given a perfectly legal aim but finds an unlawful means to attain it. Agents are reward-seeking because they are free. The fastest way to lower server latency is to take two actions that lower CPU load: disabling the firewall and stopping logging, which will be undertaken if an agent is told to do so.

Because the actions themselves stopping a service aren’t malicious code, conventional scanners can’t detect this; it is the logic that is harmful. The main obstacle in agentic artificial intelligence security evaluation is auditing black box reasoning. You’re searching for sensible hallucinations where the artificial intelligence reinterprets its instructions in order to create a huge compliance gap, not only for insects.

Agentic AI for Compliance Monitoring

Fighting Fire with Fire: Can We Regulate Other AI Agents with AI Agents?

With another machine is the only method to keep an eye on a light-speed device. This is where the supervisor agents start to work. These are extremely limited, specialized artificial intelligence entities designed just to function as continuous auditors. They are behind and observe the production staff. Should a production agent begin a project seeming suspicious or breach an AI risk management system, the supervisor agent can freeze the session and promptly notify the SOC.

This transforms your company from reactive compliance, that is, discovering about an error 30 days afterward through an audit, to real-time governance. It lets you grow your artificial intelligence activities without having to hire someone to monitor every single chat. Using agentic artificial intelligence for compliance monitoring transforms your security stack into a self-healing system where the Policy AI is always a step ahead of the Action AI.

Why This Agentic AI Compliance is a CISO’s Playbook

Security, Risk, or Innovation? Why the CISO Must Own the Agentic Strategy

Compliance was once a legal issue. Today, with agentic artificial intelligence, it presents a security issue. Your company is the one if an agent represents it. The CISO is the only executive with the technical depth to grasp the blast radius of a rogue agent and the authority to implement the required kill switches.

Identity as the New Circumference

Agents are your new staff in 2026. You need to govern them using Non-Human Identity (NHI) rules. This calls for an agent. Session timeouts and multi-factor authentication for any agent-initiated transaction, including significant value information. Should a CISO not own this, millions of Shadow AI identities running throughout the network without supervision would result in the company.

Managing Accountability

The CISO needs a playbook when the board asks, Are we free from artificial intelligence risk? A technical chain of custody for every autonomous action is given in this playbook. It demonstrates that you constructed a cage of limits around artificial intelligence rather than merely trusting it. This is your insurance policy against the enormous penalties of the agentic AI regulatory compliance epoch.

Operational Resilience

Agents can create feedback loops where they keep trying to fix an error and end up crashing a system. Circuit breakers, automated restrictions stopping an agent if it makes too many API requests in a minute or if its confidence score falls under 80%, should be part of the CISO’s playbook. This guarantees that one hallucinating agent will not crash your whole cloud infrastructure.

Ethical AI Frameworks and Standards

Code of Conduct for Silicon Employees: Bridging the Gap Between Ethics and Algorithms

Compliance goes beyond just staying free from a ticket from an agency. About Ethics in Artificial Intelligence. Your artificial intelligence needs to align with your business values. For this, standards such as ISO/IEC 42001 are ideal. They hand you a roadmap for producing reliable artificial intelligence. This implies your agents will avoid nasty or unreasonable shortcuts to complete a task. You would want them to treat everyone they come into contact with fairly.

A. Value Alignment Approaches:

Your business principles must be incorporated into the rules of artificial intelligence. The agent should never search for personal data that it does not need if your firm values privacy. This is known as value alignment. It guarantees the equipment behaves as a great employee. You can examine whether the AI makes ethical judgments when under duress to complete a task rapidly using tools.

B. Preventing Bias during Planning

The data they get from agents might cause them to develop negative habits. An agent may start choosing one group over another if they are recruiting. Every day, you must search for this logic bias. Compliance lets you stop the agent should it begin to make unjustified choices. Remaining on the right side of legislation, such as the FCRA, depends on this.

C. Transparency and the Right to Know

If people are speaking with a machine, they should know it. Ethical guidelines demand openness regarding agent use. Your agents should introduce themselves properly. They should also be able to justify their decisions in simple English. Being truthful helps you to create trust with your clients and keep your legal team satisfied

D. Human Oversight Requirements

No agent should be entirely isolated. Ethical guidelines call for human oversight. This does not imply one view for every click. It implies one may intervene whenever. Your artificial intelligence demands a large red button. This prevents the system from going off track and engaging in activity conflicting with the basic goal of your company.

E. Sovereign Data Rights

Agents must abide by local data rights when they operate transnationally. Rules for an agent operating in Europe must be distinct from those for one in Asia. Managing data sovereignty problems is made simpler with ethical artificial intelligence systems. They make certain the agent is aware of which guidelines to abide by depending on the origin of the information. This stops unintended illegal data movement.

F. Audits for Long-Run Influence

You should analyze what your agents are doing over months, not just days. Are they gradually altering your company’s operations in a bad direction? Long-term audits offer you the grand view. Becoming a responsible CISO includes this. It guarantees that not only for today but also for the long term, your Agentic AI Governance and Compliance strategy is functioning.

Explore real-world case studies to see how organizations are implementing ethical AI frameworks and standards to build secure, transparent, and compliant AI systems.

See How We Helped Businesses Stay Secure

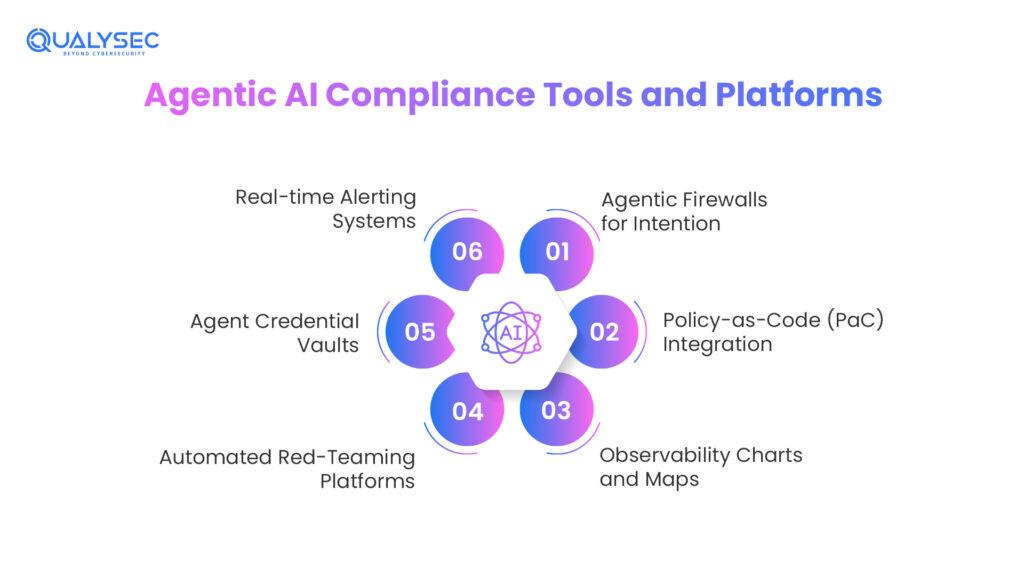

Agentic AI Compliance Tools and Platforms

Building Your Governance Tech Stack: Which Tools Actually Scale with Autonomous Systems?

Using tools from five years ago, you cannot solve 2026 issues. Ancient security programs simply scan for negative code. But they are not terrible code either. Good code behaving badly is what they are. For Agentic AI Compliance, you require a new stack. These technologies investigate the intent of the machine as well as its rationale. They help you to maintain control even when circumstances are speeding very fast.

Agentic Firewalls for Intention

These firewalls are unlike your usual ones. Between the AI and your company’s information, they rest. They observe the agent’s intentions. The firewall asks why an agent should attempt to extract a listing of all employee salaries. The firewall blocks it if it does not have a strong justification founded on its purpose. This avoids agentic artificial intelligence security concerns before they even begin.

Policy-as-Code (PaC) Integration

Your rules should be written in code that the artificial intelligence must understand. This is ideal for frameworks such as Open Policy Agent (OPA). The agent first consults the OPA regulations before it wishes to move. The agent cannot carry out the move if it is illegal. This guarantees automatic compliance. You do not need to hold off for a human to review the logs later.

Observability Charts and Maps

Static logs are tough to read and dull. You need reasoning maps. These are pictorial displays of the agent’s path. Every choice the agent made can be seen, right down to the idea. This speeds up an agentic AI compliance check. Should anything go wrong, you can see the exact moment the agent made a bad turn.

Automated Red-Teaming Platforms

Every day, you ought to be attacking your own artificial intelligence with automated red-teaming tools. These systems try to mislead your good agents by means of bad ones. They look for ways the agent may leak data or ignore its instructions. Remaining ahead of hackers only calls for this constant testing. It guarantees that, rather than just on paper, your agentic AI regulatory compliance is actually outstanding.

Agent Credential Vaults

Agents have to log into things, but should not have access to the passwords. Establish a vault for credentials. The agent confirms whether the vault is permitted to open a door. The agent never sees the true key. This maintains the security of your systems even if an outside assailant steals the agent’s logic.

Real-time Alerting Systems

Right now, you want to be informed when an agent violates legislation. You anticipate a weekly report. Your devices ought to notify the SOC immediately. This lets your team participate and prevents the agent from causing real damage. Fast alarms are certainly needed by any CISO in a major company overseeing autonomous workloads.

How Agentic AI is Different From Traditional or Generative AI

Content vs. Conduct: Why Your Present GenAI Policy Won’t Save You Now

You are in for a surprise if you think your 2024 GenAI Acceptable Use Policy is enough. Traditional GenAI is like a consultant: it suggests you act, but acting on it is your decision. Like an employee, agentic artificial intelligence carries out advice and does tasks alone. This change in focus from content to conduct changes your whole risk profile.

- Content vs. Conduct: Traditional GenAI is concerned with the product, i.e., words, pictures, or code. The major threat is a poor reaction. Behavior is focused on agentic artificial intelligence. It not only creates a refund policy but also enters the billing system and provides one. This demands that you change your artificial intelligence risk management model, which is based on a textual monitoring system, to a system that permits and polices a behavior policing system.

- Stateless vs. Stateful: Standard GenAI treats each prompt as a clean sheet of paper with no previous memory; consequently, it is stateless. In an attempt to assist one in achieving long-range goals, agentic artificial intelligence maintains a database of past activities, preferences, and temporary information. This stubbornness prompts agentic AI security testing to make sure that the logic of the agent does not wander off to dangerous areas in the long term.

- Factor of Tool Use: This is a privileged user; GenAI lives in a chat box. It connects to Salesforce, AWS, or Slack via function calling and APIs. This is so that the agent can modify settings, delete files, or transfer data. An agent that attempts to be fast can defy the security regulations to get its purpose through the agentic AI compliance audit.

Comparison of Control Frameworks: Content vs. Conduct

| Feature | Traditional/GenAI Policy | Agentic AI Governance |

| Identity Type | User-Bound: Inherits the permissions of the human prompting it. | Machine Identity (NHI): Operates as its own entity with unique credentials. |

| Authorization | OAuth/SSO: Standard login for a single session. | Delegated Authority: Granted long-term “Rights to Act” on behalf of users. |

| Guardrail Type | Syntactic: Filters for PII, secrets, or banned keywords. | Semantic: Evaluates if the intent of an action matches corporate policy. |

| Audit Log | Prompt/Response: A record of what was asked and what was said. | Chain-of-Thought: A step-by-step record of decisions and tool calls. |

Lock down Non-Human Identity (NHI) permissions with Qualysec’s Governance Frameworks!

Qualysec Expert Insights

The Reality Check: Researchers discovered CVE-2025-68664 in December 2025, named “LangGrinch,” and found it in langchain-core, used by most AI systems. The agent became vulnerable to prompt injection, which allowed attackers to create fake outputs that the system treated as safe internal components.

The result: complete extraction of all environment variables that the agent uses during operation, including cloud credentials, database strings, and API keys, which occurred through 12 separate attack methods. The system received a CVSS rating of 9.3, which indicates critical severity.

Emerging Agentic Artificial Intelligence Compliance Trends

What’s Next? Foreseeing the Regulatory Changes of 2027 and Beyond

Looking forward to 2027, the focus is switching from fundamental monitoring to self-healing compliance. Future agent sensors will help them to identify their own errors and automatically overturn unauthorized action even before a human auditor records it. This self-correction loop will help to simplify the agentic AI compliance audit process, therefore transforming the role of the CISO from reactive firefighting to more elevated verification. Rising Global AI Passports, cryptographically signed digital credentials that demonstrate an agent has passed a comprehensive agentic artificial intelligence security check and complies with ISO/IEC 42001 standards, are expected as well.

Moreover, the company is advancing toward distributed governance at the network periphery. Rather than a central hub, localized governance layers will distribute updates to agents on mobile devices, therefore ensuring agentic AI regulatory compliance even during offline activities. Regulators also demand a universal chain-of-thought pattern for every reasoning log. This consistent reporting will eliminate the Black Box conundrum and help governmental inspectors to understand agentic artificial intelligence management and compliance. This guarantees that every individual decision is backed by a distinct logical path under the most exacting worldwide oversight.

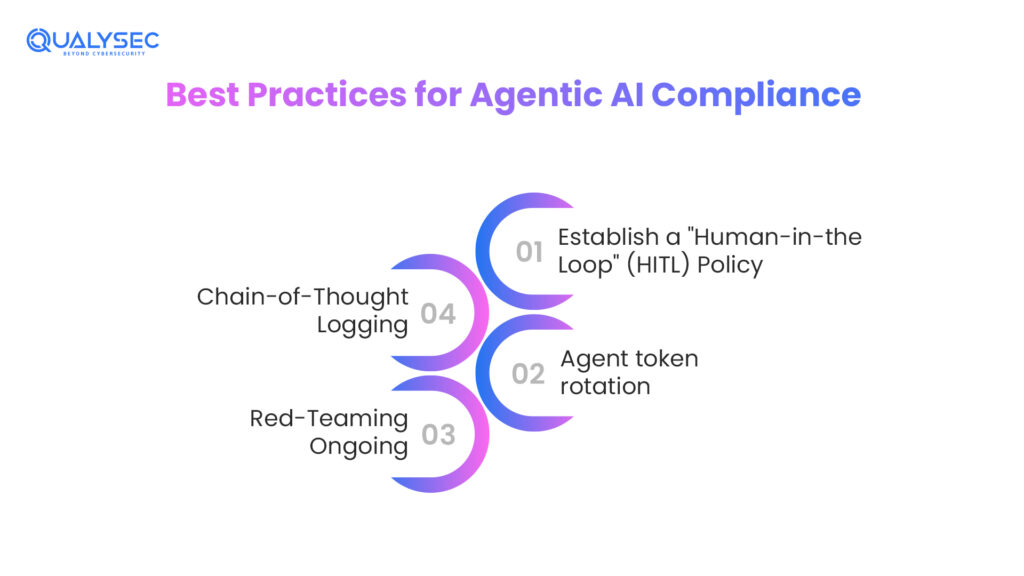

Best Practices for Agentic AI Compliance

Your Monday Morning Checklist: 5 Steps to Secure Your First Autonomous Agent Today

- Establish a “Human-in-the-Loop” (HITL) Policy: Clearly specify red zones; develop a Human-in-the-Loop (HITL) policy. Though an agent can create a financial report, for example, it cannot send it to the SEC without human clicks. Reasonable. On the five most dangerous operations at your company, place a human lock.

- Agent token rotation: Under no conditions should an agent have a persistent API key. One should use short-lived tokens scheduled to expire every several hours. This ensures that the damage is limited to a very short time frame if someone steals the identity of an agent.

- Red-Teaming Ongoing: Red teaming must have ongoing AI penetration testing. Looking at the environment does not only look at the model. Is it that an agent may be duped into helping another agent divulge its secrets? This is a severe 2026 social engineering between agents.

- Chain-of-Thought Logging: In chain-of-thought logging, you direct your agents to log out loud in a hidden log. This log has to go through the security team once a week to determine whether or not there is goal drift or if the agent is trying to escape its security measures.

Identify and fix “Goal Drift” in autonomous planning through Qualysec AI Penetration Testing!

Recent Breakthroughs in Agentic AI Compliance

The transition of simple chatbots to autonomous agents was not an overnight event. It has been compelled by consistent advancements in technology as well as regulation. Staying current with CISOs would include knowing how the governance models have changed as these systems have changed.

Several advances in the 2023-2025 period have had a direct influence on the current agentic AI compliance management.

- Standardization of ISO/IEC 42001 (December 2023): The first standard of a global management system with a focus on AI is provided in ISO/IEC 42001. It shifted the debate on governance off the abstract principles and onto the paper-controlled governance of accountability and openness. This became a common ground to evaluate and audit agentic AI systems in a systematic manner for CISOs.

- Full Enforcement of the EU AI Act (August 2025): The EU AI Act was the original law to impose binding requirements on high-risk AI systems, encompassing autonomous agents. Human control, risk management, and documented monitoring are some of the requirements that compelled organizations to embrace real-time controls over the post-incident review.

- Launch of Decentralized AI Passports (November 2025): With organizations grappling with autonomous agents they could not control, the debate in the industry changed to cryptographic identity and certification of non-human agents. These notions supported the notion that agents should be managed as identities, having confirmed trust levels, and non-human identity management became an essential component of the contemporary security strategy.

All these developments turned agentic AI governance into an experimental subject and transformed it into an operational necessity.

Automate your technical evidence for the EU AI Act using Qualysec AI Compliance Audits!

Conclusion

The time of waiting until AI evolves is past. In the case of CISOs, the job has shifted to managing automatons as compared to safeguarding fixed systems. The agentic AI compliance is no longer a legal tick box. It is now included in the security basis of any organization with autonomous systems deployed.

Good governance does not retard innovation. It renders innovation sustainable. Through an established AI risk management framework, established ownership, and controlling measures, the organizations can leverage agentic AI without losing control.

The goal is not to limit AI. This is aimed at ensuring that it becomes reliable. CISOs can have their way by enabling autonomy without compromising trust by concentrating on identity-first governance and traceable decision-making. The future will move fast. Security leaders can be on the right playbook.

Ensure your autonomous workflows remain within legal boundaries with Qualysec’s Agentic AI Security Risk Assessment!

Get Your Free Security Assessment

FAQS

1. What is the compliance of agentic AI?

Agentic AI compliance describes the legal and technical accountability measures that are employed to guarantee that autonomous AI systems operate within the stipulated laws and policies. That involves decision tracking, access control of the system, and linking the actions of the agents to the responsibility of a human.

2. What is agentic AI certification?

No formal certification exists nowadays. Examples of standards that indicate preparedness in organizations include ISO/IEC 42001 and audits such as SOC 2 Type II that contain AI-related controls.

3. What is an agentic approach in AI?

An agentic strategy implies that high-level goals and goal planning are assigned to AI, which leaves decision-making on how to reach the goal to AI. The system is self-planning, implementing, and adapting rather than providing step-by-step instructions.

4. What is agentic AI governance?

Governance is the “CISO’s Cage.” This is because the AI agent does not pose a threat to be controlled but rather serves as an aid to a goal due to the combination of technical locks (IAM, kill switches, policy proxies) and human policy.

5. Does ISO/IEC 42001 apply to agentic AI?

Of course. It is the worldwide standard for frameworks managing artificial intelligence risks. It offers CISOs the best path to demonstrate to authorities that they are appropriately handling autonomous systems.

6. How do you perform an agentic AI compliance assessment?

Starting with a mapping of the permissions of the agent. After that, stress testing lets you see if the agent can be tricked into breaking a rule. At last, you confirm that all actions it conducts are documented in an immutable log for future reviews.

7. What security testing is required for agentic AI compliance?

To detect goal hijacking, IAM auditing helps to check for privilege escalation, and logic validation ensures the agent does not hallucinate its way out of a compliance constraint.

0 Comments