Introduction

Artificial intelligence is now more than simply a lab experiment. Nowadays, it is included in medical diagnostic systems, fraud detection engines, loan approvals, trading platforms, and customer service robots. Each choice these systems make directly impacts compliance, human lives, and income. Most businesses, meanwhile, still safeguard artificial intelligence like they would a regular web app without conducting a dedicated AI vulnerability assessment. Such a strategy is incredibly outdated.

An AI vulnerability analysis goes beyond APIs, firewalls, and servers. At every stage of the AI lifecycle, it assesses how data, models, and automated decisions may be exploited. Unlike conventional software, artificial intelligence systems don’t just crash when under attack. Far more hazardous than mistakes distributed over thousands or millions of users before anyone notices, they silently start making bad decisions.

According to IBM, the average price of a data breach in 2025 hit USD 4.44 million, and AI-driven systems are now becoming more and more engaged in those breaches. Models trained on sensitive data can be abused through attacks like model inversion and membership inference, which allow attackers to reconstitute training data from outcomes. IBM’s security research and MITRE’s AI attack taxonomy will let you check this risk.

This is the reason why top-level company risk now includes AI vulnerabilities. Leaked sensitive customer information, avoidance of compliance restrictions, or machine speed production of bogus approvals can all result from a poisoned training set or a changed prompt.

Organizations can use an AI security evaluation framework to discover where their models, pipelines, and automation are vulnerable before attackers strike. Furthermore, it supplies the evidence partners and officials need.

Why AI Systems Require Specialized Vulnerability Assessments

Traditional VAPT was developed for deterministic systems. Sending the same request twice to a regular application elicits the same answer. AI functions differently. Models are stochastic. Depending on context, background, and hidden inner conditions, they grow, change, and react differently.

An exposed API or missed patch could be found by a rudimentary AI vulnerability scanner. It won’t spot model drift, training data poisoning, or prompt injection attacks, modifying the decision-making process. These are not programming errors. They are failures in intellect.

Black boxes are also artificial intelligence models. Developers sometimes struggle to fully justify why a model arrived at a particular conclusion. This implies that attackers can use hidden correlations, prejudices, and edge cases without ever physically interacting with the code.

Using methods recorded by MITRE ATLAS and OpenAI, red teaming studies adversarial inputs, embedding modification, and instruction override attacks, among others, threat actors now specifically target AI.

This is why AI vulnerability management calls for ongoing testing rather than yearly audits. As data changes, models change as well. As user behavior alters, risk does too.

You might like to know more about the AI Security Audit Checklist

Understanding The AI Pipeline And Its Attack Surface

Each level of the multi-stage path, known as the artificial intelligence life cycle, presents a different, fresh attack surface. Securing an artificial intelligence system requires examining the whole pipeline from the gathering of data to the point where a prediction is presented to a user.

| AI Pipeline Layer | What Can Be Attacked | Business Impact |

| Data ingestion | Poisoned or biased data | Wrong model behavior |

| Model training | Backdoors, bias | Corrupt decisions |

| Storage | Model theft | IP loss |

| APIs | Prompt abuse | Data leakage |

| Feedback loops | Drift | Silent degradation |

Assess AI risks in cloud environments, get in touch with Qualysec!

1. Data Sources and Ingestion Layer Risks

Data forms the lifeblood of artificial intelligence, but also its major vulnerability. An attacker can precisely plan the model’s future behavior if they can impact the information coming into your training or fine-tuning pipeline. This is called data poisoning. Giving a fraud detection dataset just a little percentage of malicious samples, for instance, can produce a bypass that enables certain fraudulent transactions to go undetected.

Additionally, many businesses depend on web-scraped data or outside labeling services for high-risk entry points for manipulated information. You are constructing your intelligence on sand unless a thorough artificial intelligence security assessment system validates the integrity of your data. Once a model discovers a harmful pattern, it is almost impossible to erase without a complete and expensive retraining program.

2. Model Training and Validation Vulnerabilities

Usually, utilizing great computing power from shared cloud GPUs, the training stage shapes your AI’s brain. These environments often lack good isolation, hence making them potential targets for infrastructure hijacking or side-channel attacks. Should an attacker acquire entry to the training environment, they may covertly modify hyperparameters to impose bias or set a trigger that triggers a particular, destructive reaction just when a given keyword is entered.

Validation is also very important. Data leakage can happen and give a false sense of security if the data used to test the model’s accuracy is not rigorously separated from the training data. Treating the training environment as a high-security area, a professional AI vulnerability assessment guarantees that the model created is precisely what the developers meant, devoid of unwanted changes that could jeopardize its integrity in production.

3. Model Storage and Version Control Weaknesses

Big binary files, artifacts, store the weights and biases acquired throughout training by artificial intelligence models. The pride of your intellectual property is these files. Should a model repository be inadequately protected, an attacker can conduct model swapping, replacing a certified, safe model with a compromised version, including a hidden backdoor, in addition to stealing your own logic.

For artificial intelligence, version control is usually not as developed as it is for conventional software. Tracking the introduction of a flaw becomes challenging in the absence of precise ancestry and cryptographic signing of model versions. To stop unauthorized tampering or exfiltration, modern AI vulnerability management calls for the tracking, hashing, and encrypted storage of every model artifact with strong Role-Based Access Control (RBAC).

4. Deployment, APIs, and Inference Layer Risks

Usually, using an API, the inference layer is where the model confronts the real world. The most frequently attacked place is this one. To get around security filters, threat actors employ adversarial input data carefully meant to deceive the model. With LLMs, this sometimes manifests as prompt injection, wherein a user provides directions that fool the artificial intelligence into disregarding its safety restrictions to uncover sensitive system information or consumer data.

Because the harmful intent is hidden in the language’s semantics rather than in a distinct code pattern like an SQL injection, conventional Web application firewalls (WAFs) are mostly useless against these kinds of attacks. For this reason, behavioral testing of the live API simulating thousands of hostile questions to find the precise point where the logic of the model breaks down must be included in AI for cybersecurity vulnerability assessment.

5. Monitoring, Logging, and Feedback Loop Vulnerabilities

Many contemporary artificial intelligence systems use active learning loops, whereby the model keeps learning from user feedback following deployment. Attackers can take advantage of this to gradually skew the model’s behavior over time, therefore producing a hazardous feedback loop. An attacker can move the decision borders of the model until it no longer performs its intended purpose efficiently by bombarding the system with deliberately created, biased input.

Another big danger is subpar logging. You cannot conduct a post-mortem on a security event if your system does not log the precise inputs that produced a peculiar outcome. A strong AI security assessment methodology guarantees you possess the telemetry necessary to detect model drift, a gradual decrease in accuracy usually indicating a complex, long-term poisoning attack or a basic alteration in the data environment the model was not built to manage.

Concerned about vulnerabilities in your AI pipeline? Contact us today to secure your AI systems against evolving threats.

Protect Your AI From Emerging Threats

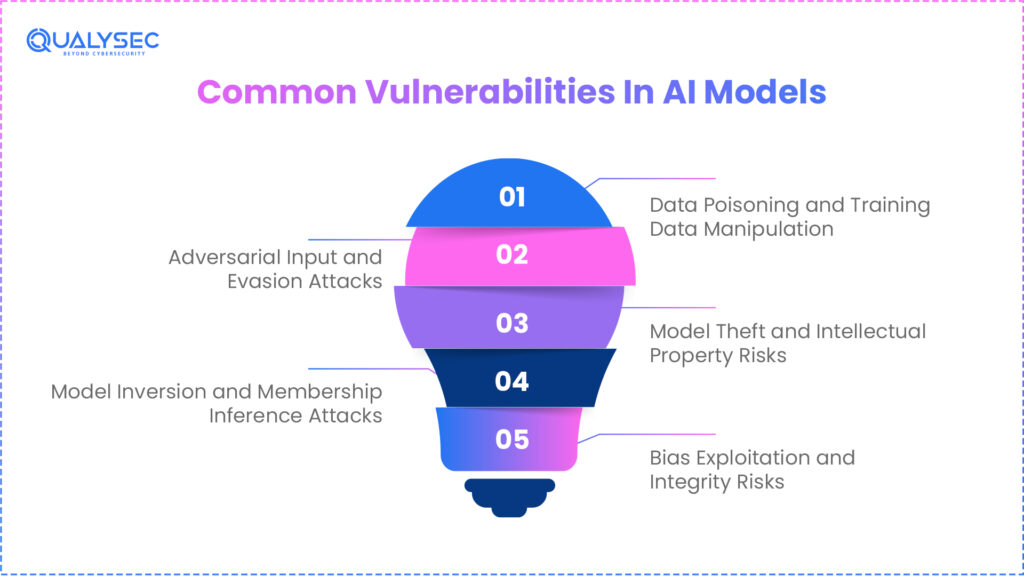

Common Vulnerabilities In AI Models

Programs assessing contemporary artificial intelligence regularly find a fundamental set of model-level hazards. These are not speculative. In modern production, artificial intelligence systems are aggressively used. MITRE ATLAS, Google Brain, OpenAI, and academic institutions’ studies show that attackers now focus on model behavior as much as network infrastructure.

Unlike software, artificial intelligence algorithms do not fail. A model that is compromised keeps working yet produces false, skewed, or risky outcomes. That renders artificial intelligence flaws far more devastating as they spread quietly throughout thousands of judgments.

This is why model-specific security testing must be included in every AI security evaluation plan. To capture intelligence-level flaws, one cannot depend on firewall rules or AI vulnerability scanners.

Most AI security assessment tools and red teams use the basic core vulnerability matrix shown below.

| Vulnerability | What It Does | Why It Is Dangerous |

| Data poisoning | Alters what the model learns | Permanent logic corruption |

| Adversarial inputs | Tricks models into wrong output | Fraud and bypass |

| Model inversion | Extracts training data | Privacy and compliance failures |

| Model theft | Copies decision logic | IP and revenue loss |

| Bias exploitation | Forces discriminatory outputs | Legal and regulatory risk |

1. Data Poisoning and Training Data Manipulation

Data poisoning happens when hostile or biased data is added to the training or fine-tuning process. Because AI algorithms find patterns in data, even a little bit of poisoned input can generate hidden backdoors that cause inappropriate action later. Detecting these backdoors is extremely challenging since they may stay dormant until certain criteria are met.

Attackers sometimes poison public image repositories, user-generated feedback, or web-scraped databases. Studies from Google and OpenAI have revealed that poisoned samples can live through several training sessions, provided the fundamental data lake is not cleaned.

This means that testing can make a model look correct, but in production, it acts maliciously. Long-term risk is generated by financial approvals, medical diagnosis, and fraud detection.

A thorough AI vulnerability analysis confirms data lineage, ingestion restrictions, and retraining methods as part of complete AI security testing. Attacking the data pipeline itself rather than only the model, Qualysec performs these checks.

2. Adversarial Input and Evasion Attacks

Mathematical flaws in machine learning models are exploited by adversarial attacks. Attackers somewhat change inputs so that people perceive them to be natural, but cause the model to incorrectly classify them. This is how harmful material avoids moderation or how fraudulent transactions get noticed.

Attackers, for instance, could deceive the model by modifying a few pixels in an image or lightly modifying a transaction pattern. Carnegie Mellon and MIT have well-documented these threats.

This results in undetected fraud in financial systems. It can lead to misdiagnosis in medicine. In content moderation, it lets damaging material sneak by.

This is the need for adversarial testing and model fuzzing in AI vulnerability management. Qualysec systematically conducts these tests to expose actual-world evasion danger.

3. Model Inversion and Membership Inference Attacks

By means of repeated inquiries of a model, model inversion attacks let attackers recreate training data. Membership inference attacks show whether a certain record or individual was included in the training set.

This goes straight against privacy rules, including GDPR, HIPAA, and financial data protection laws. About this threat, warnings have been issued by both IBM and NIST.

For instance, from badly secured artificial intelligence APIs, attackers have acquired financial information, voice recordings, and medical images.

Modern AI security evaluation tools thus examine how far model outputs allow one to deduce sensitive data. Qualysec includes these assessments in its approach to AI vulnerabilities.

4. Model Theft and Intellectual Property Risks

Artificial intelligence models are intellectual property worth a lot. Attackers steal them either by obtaining access to storage systems or by repeatedly interrogating public APIs and creating the model.

This lets attackers sell your artificial intelligence on black markets or to rivals to duplicate your product. Large-scale model extraction attempts have been noted by Microsoft and OpenAI alike.

A correct artificial intelligence vulnerability assessment assesses model repository access restrictions, output leakage, and API rate limiting. Qualysec pays great attention to this since model theft has a direct influence on the company’s worth.

5. Bias Exploitation and Integrity Risks

Hidden prejudices abound in artificial intelligence models from their training material. Attackers can deliberately set off these biases to produce prejudiced outcomes.

This might result in rejected loans, prejudiced medical advice, or arbitrary hiring choices. These errors lead to lawsuits, legal penalties, and reputational harm.

Currently, regulators consider prejudiced artificial intelligence judgments as compliance breaches. Not only is this an ethical concern, but this also makes biased exploitation a security risk.

This is the reason bias and integrity testing, which Qualysec conducts as part of its AI security evaluations, must be included in AI vulnerability management.

Don’t let hidden AI vulnerabilities put your business at risk. Schedule a meeting with our AI expertsfor a complete security review.

Speak directly with Qualysec’s certified professionals to identify vulnerabilities before attackers do.

Vulnerabilities In AI Infrastructure And Pipelines

Artificial intelligence systems do not work alone. They depend on cloud platforms, data lakes, GPUs, CI/CD channels, orchestrating systems, and APIs. Each of these layers produces exposure that may be used to change artificial intelligence behavior. Almost all successful attacks on the infrastructure cause model-level compromise.

Consequently, a modern AI vulnerability assessment must view infrastructure and pipelines as components of the AI system, not apart from it. Should a hacker have access to your cloud storage, they can poison training data. Malicious models can be deployed if they violate your CI/CD pipeline. Should they abuse your API, they might extract information or steal your model.

Government agencies and security communities understand this now. CISA has clearly cautioned that machine learning supply networks are presently vulnerable targets. OWASP has also published AI-focused threat models demonstrating how infrastructure flaws allow model compromise.

This is the reason why AI security evaluation instruments have to scan far more than just endpoints. They have to verify dependency chains, data stores, MLOps pipelines, and cloud permissions.

Qualysec examines this entire stack as part of every artificial intelligence vulnerability assessment, therefore enabling businesses to identify hazards before attackers weaponize them.

Read more about: AI Threat Intelligence.

1. Cloud Misconfigurations in AI Environments

Cloud services are very important for artificial intelligence workloads. Object storage houses training data. Models operate across GPU clusters. Experiments are logged in dashboards and notebooks. Attackers get inside the core of the artificial intelligence system when any one of its parts is wrongly configured.

Attackers may read or substitute available buckets for training datasets. Open GPU clusters let attackers run harmful training operations. Notebooks that are open expose secrets and model code. AWS and Google Cloud both list these failures among the most often cited causes of AI violations.

This is where AI cloud security becomes essential. It protects AI infrastructure in the cloud by securing data, controlling access, and continuously monitoring configurations to prevent unauthorized access or manipulation. Attackers can covertly insert poisonous data or replace authorized models once they have cloud access. The artificial intelligence keeps running, yet its behavior is now guided by the attacker.

Strong AI security assessment frameworks, therefore, always include cloud setup audits for AI workloads. As part of its artificial intelligence vulnerability analysis, Qualysec checks model deployment environments, GPU isolation, and storage permissions.

2. Insecure MLOps Pipelines and CI CD Risks

MLOps pipelines automatically deploy, train, and test models. These pipelines control what gets produced. An attacker does not need to attack the model directly if they compromise this pipeline. They simply make a poisonous version ready for market.

Except that the target is AI, this risk is somewhat similar to the SolarWinds supply chain threat. An impaired CI/CD pipeline might install a backdoored model that passes basic tests but exhibits malevolent behavior in particular situations.

Studies from GitHub and Snyk reveal that ML pipelines sometimes lack the security measures present in software CI/CD.

3. Weak Authentication and Authorization Controls

Many artificial intelligence systems reveal models via APIs that employ weak authentication, no role division, or shared keys. This lets attackers extract delicate patterns, misuse paid AI services, or interrogate models at scale.

Attackers can conduct model extraction without rate restriction. Any user can access sensitive features without role-based access. This opens a tremendous risk for IP theft and data leaks.

A good AI security assessment framework validates identity management, access scopes, and API misuse prevention among AI services. As part of its API and artificial intelligence vulnerability analysis, Qualysec undertakes these tests.

4. Third-Party and Open-Source Dependency Risks

Most artificial intelligence systems depend on open-source libraries, pretrained models, and external plugins. Your artificial intelligence inherits the vulnerability should any one of these elements be damaged.

Attacks have already introduced harmful code into well-known ML datasets and libraries. Hidden backdoors within production AI systems are created in this fashion. CISA and NIST now suggest monitoring ML BOMs just as they do software SBO. Ms.

Every dependency’s integrity and security are guaranteed by a correct AI vulnerability management system. Among its artificial intelligence security testing, Qualysec incorporates supply chain risk analysis.

See how we secured AI infrastructure for real-world clients. Explore our case studies to learn more.

See How We Helped Businesses Stay Secure

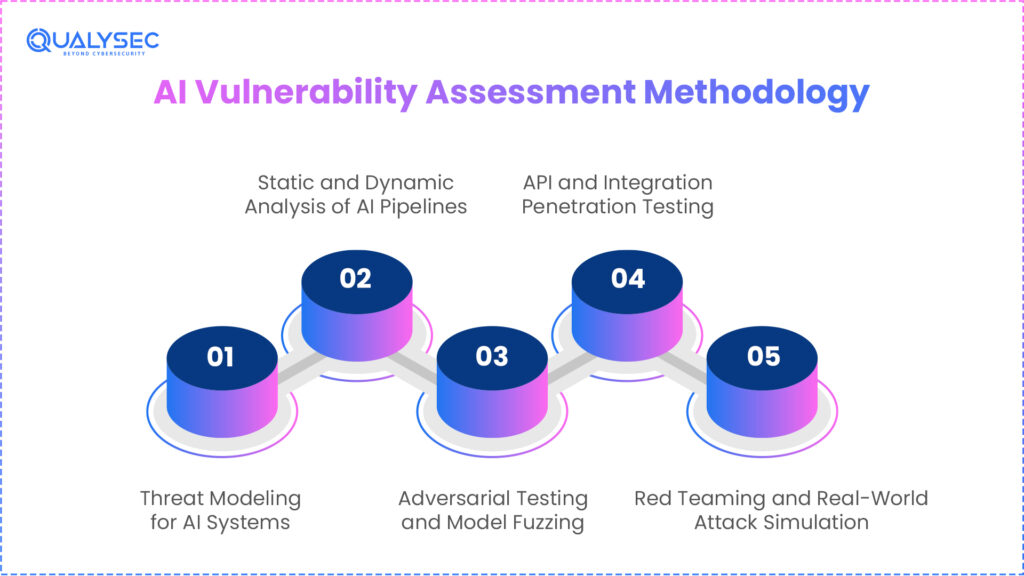

AI Vulnerability Assessment Methodology

A professional artificial intelligence vulnerability assessment uses a multi-layered approach far beyond basic scanning. Unlike software, artificial intelligence systems include data, models, training pipelines, APIs, and automatic decision flows. If any one of these is compromised, the whole intelligence layer becomes unreliable.

Modern AI security assessment approaches, therefore, employ a five-stage strategy testing behavior, honesty, and infrastructure concurrently. It is, along with dedicated API security testing to secure integrations and data exchanges. This approach conforms to international standards like the NIST AI Risk Management Framework and MITRE ATLAS.

Finding flaws is not the only aim; rather, it is also about grasping how a threat could turn minor weaknesses into business-level failures.

Qualysec does artificial intelligence vulnerability evaluations for businesses using LLMs, fraud detection systems, and decision automation exactly this way.

1. Threat Modeling for AI Systems

Every AI vulnerability evaluation starts with threat modeling. It shows where data comes in, how it moves across models, and where decisions are taken. This enables security professionals to find abuse routes that attackers could abuse.

Unlike conventional threat models, artificial intelligence systems exhibit nondeterministic behavior; hence, the same input may not always result in the same result. This offers fresh failure modes that have to be recorded.

A model for fraud detection, for instance, could seem secure at the API level but still be open to adversarial inputs bypassing its decision logic.

Moreover, maps decision authority inside threat modeling. It specifies where automated approval, rejection, recommendation, or action implementation of AI is permitted. Nodes with high authority become targets of priority.

This stage fits MITRE ATLAS threat mapping, which classifies artificial intelligence attacks according to their impact on confidentiality, availability, and integrity.

2. Static and Dynamic Analysis of AI Pipelines

Static analysis looks at AI code, access controls, training scripts, and infrastructure setups. It exposes inadequate permissions, exposed storage, misconfigured APIs, and hazardous data pipelines as part of a comprehensive AI pentesting approach.

Dynamic analysis goes further still. It examines how real traffic, production data, and user interactions affect the system’s reaction. This shows weaknesses that were never revealed in code review.

Dynamic testing may, for instance, show that a model behaves safely under usual inputs but leaks sensitive data when particular prompt structures are employed.

This combination is essential in AI vulnerability management, as AI threats are mostly behavioral rather than grammatical.

Detects silent failures using this dual technique by companies following NIST and ISO AI security guidelines.

3. Adversarial Testing and Model Fuzzing

Adversarial testing targets the mathematical reasoning of the model. Rather than looking for missing patches, testers submit precisely created inputs meant to fool the model.

Sending thousands of altered inputs, malformed prompts, and edge-case data points, model fuzzing automates this. This reveals areas where the model makes unlawful, prejudiced, or hazardous choices.

MIT, OpenAI, and Google Brain all study these methods extensively. Identifying prompt injection, jailbreaks, hallucinations, failures, and evasion attempts requires this phase.

4. API and Integration Penetration Testing

Rarely do artificial intelligence systems work by themselves. Through APIs and plugins, they link automation engines, CRMs, medical records, and payment systems.

These links produce effective routes of threat. A damaged prompt could cause background actions, retrieve internal data, or run workflows.

For this reason, API and plugin testing must be included in artificial intelligence for vulnerability assessment in cybersecurity. In its OWASP Top 10 LLM Vulnerabilities, OWASP has noted this danger.

5. Red Teaming and Real-World Attack Simulation

Red teams behave much like actual attackers. Checklists are not followed by them. They link vulnerabilities.

A tester could, for instance, access a dataset by abusing a cloud misconfiguration, poison it, remodel the model, and then abuse the new behavior through the API.

True breaches occur this way, and it’s the only means to verify actual AI vulnerability assessment readiness. EU Artificial Intelligence Act compliance and NIST AI governance standards call for red teaming.

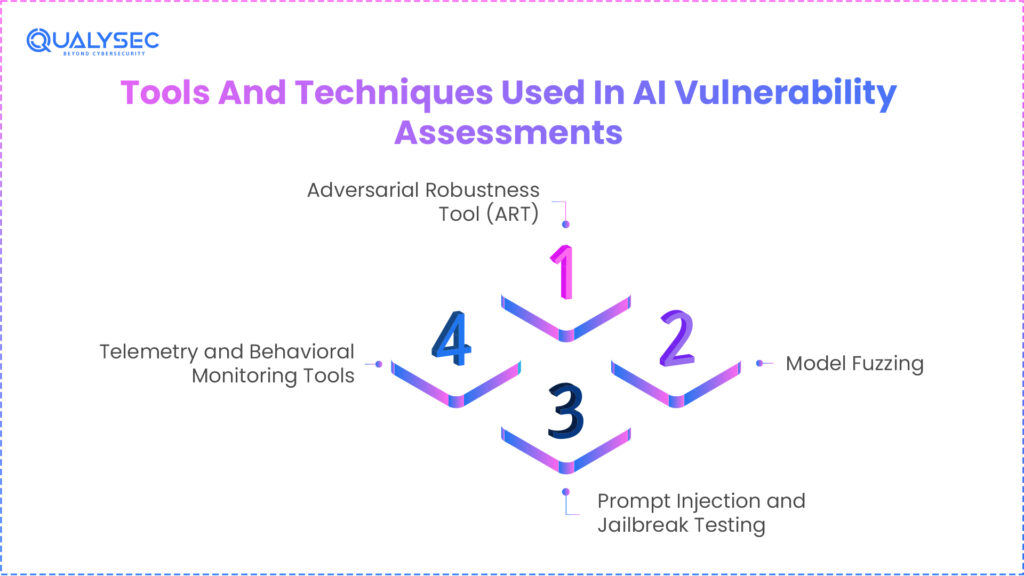

Tools And Techniques Used In AI Vulnerability Assessments

Modern artificial intelligence vulnerability analysis depends on far more than just basic AI vulnerability scanners. AI systems do not collapse just on account of poor code. Their mistakes result from hidden flaws in decision logic, malicious data tampering, and data corruption. Effective AI security assessment tools, therefore, have to assess the model’s software as well as its intelligence layer.

Leading research organizations like MIT, OpenAI, and NIST have verified that AI systems need adversarial testing, behavioral analysis, and robustness validation.

This is the reason companies entering artificial intelligence production today use layered AI vulnerability management techniques, blending human-driven testing with automation.

– Adversarial Robustness Tool (ART)

One of the most often used open frameworks for testing artificial intelligence models against evasion and poisoning threats is the Adversarial Robustness Toolbox. It lets security teams create malicious input patterns meant to deceive or influence the model.

ART evaluates how steady its decision boundaries are by feeding a model thousands of artificial attack examples. The model is regarded as vulnerable and exploitable if a slight modification in input results in a significant shift in output.

Identifying fraudulent bypass, image manipulation, and content moderation failures depends on this kind of resilience testing. Both IBM and Google have published studies confirming ART-based testing.

Read more about Top AI Security Tools to Protect Your Organisation in 2026

– Model Fuzzing

Model fuzzing uses the same idea as software fuzzing, but for AI decision logic. It introduces malformed inputs, combinations of characteristics, and randomized prompts into the model rather than arbitrary bytes.

This shows where the model acts unpredictably, gives risky results, or breaks policy limitations. These are the exact circumstances attackers abuse in production AI systems.

This is especially important for artificial intelligence systems that make financial or medical decisions; fuzzing also reveals uncommon edge situations that regular testing never encounters.

Explore more about Cybersecurity for AI in Fintech: Key Risks & Controls

– Prompt Injection and Jailbreak Testing

Especially prone to prompt injection are big language models. Attacks use precisely written commands to bypass safety precautions, expose internal system prompts, or recover secret information.

By trying to circumvent content filters, access hidden context, and manipulate downstream devices, prompt injection testing mimics these attacks.

– Telemetry and Behavioral Monitoring Tools

Behavioral telemetry is also used in modern AI vulnerability management. These instruments follow output drift, unusual patterns, and decision distribution changes.

Usually, the first indication is a little deviation in model results when attackers poison data or change prompts. Real-time sensors on telemetry systems spot these changes.

Curious about how AI vulnerabilities are documented and remediated? Download a sample AI pentesting report today.

Get a Free Sample Pentest Report

Differences Between AI Vulnerability Assessment And Traditional Vapt

Originally developed for a world of static, deterministic code, classic Vulnerability Assessment and Penetration Testing (VAPT). A certain input in that context always produces a particular output, and security is concerned with locating errors such as SQL injections or buffer overflows. AI systems, nonetheless, are probabilistic. They run on statistical chances; therefore, their actions might vary even when given the same input twice. Though a conventional vulnerability scanner could find an unpatched server, it is essentially unable to identify a backdoor buried inside the millions of parameters of a neural network.

Traditional VAPT uses signature-based scanning; an AI vulnerability assessment demands a behavioral study. In an AI setting, the exploit is a deliberate alteration of the model’s training data or inference logic rather than a fractured piece of code. For instance, although conventional penetration testing could protect the API endpoint, it won’t prevent a prompt injection attack that deceives the model into revealing its own system instructions. Specialized AI security assessment tools are thus required to stress-test the actual mathematical decision boundaries of the model.

Finally, the cleaning method varies greatly. In conventional IT, you apply a patch, and the problem is resolved. In artificial intelligence, if your model has picked up a malicious pattern through data poisoning, you might have to completely retrain the model or use sophisticated input-filtering levels. This provides the evaluation procedure with far more integration with the MLOps lifecycle than conventional security.

Comparison Table: Traditional VAPT vs. AI Vulnerability Assessment

| Feature | Traditional VAPT | AI Vulnerability Assessment |

| Primary Target | Known software bugs (CVEs/OWASP Top 10) | Data integrity and Model logic (MITRE ATLAS) |

| Testing Style | Deterministic (Pass/Fail) | Probabilistic (Risk Scoring & Robustness) |

| Persistence | Fixed via code patches/updates | Requires retraining or data/input filtering |

| Threat Vector | Network, APIs, and OS flaws | Training data, Model weights, and Inference |

| Outcome focus | System uptime and data confidentiality | Decision integrity and model reliability |

Qualysec validates AI-driven API and plugin securityacross real production integrations!

Regulatory, Compliance, And AI Governance Considerations

The EU AI Act will be fully applicable by August 2, 2026, therefore ushering in a world turn in AI control. High-risk systems such as those used in healthcare, finance, or critical infrastructure must now undergo compulsory AI security assessment processes to show their resilience. Failing to comply might lead to fines up to 7% of worldwide yearly revenue and major legal responsibility if a model leaks personal consumer information via membership inference threat. This rule compels businesses to change from a fast-moving, shattering mindset to a secure-by-design approach.

The worldwide benchmark for reliable AI governance has also become the NIST AI Risk Management Framework (RMF) 1.0. It stresses four essential activities: govern, map, measure, and manage. Today’s companies are required to keep written evidence of model explainability, bias audits, and adversarial testing. Many companies will discover they cannot pass security checks or cooperate with other governed entities in the worldwide market without a formal AI vulnerability management program in place.

Emerging, too, are industry-specific standards like ISO/IEC 42001, which offers a certifiable management system for artificial intelligence. These models call for openness in how models are verified and how data is gathered. In the age of autonomous systems, an AI vulnerability assessment has evolved from a security duty to be a prerequisite for legal operation and insurance eligibility.

Summary of AI Regulatory Landscape 2026

| Regulation | Region | Mandate for AI Security |

| EU AI Act | European Union | Mandatory adversarial robustness & conformity assessments. |

| NIST AI RMF | USA / Global | Governance of trustworthiness, safety, and security. |

| DPDP Act | India | Strict data provenance for training sets & PII protection. |

| ISO/IEC 42001 | International | Certification for AI Management Systems (AIMS). |

Hear directly from businesses about how they protect their AI governance with our support. View our testimonials.

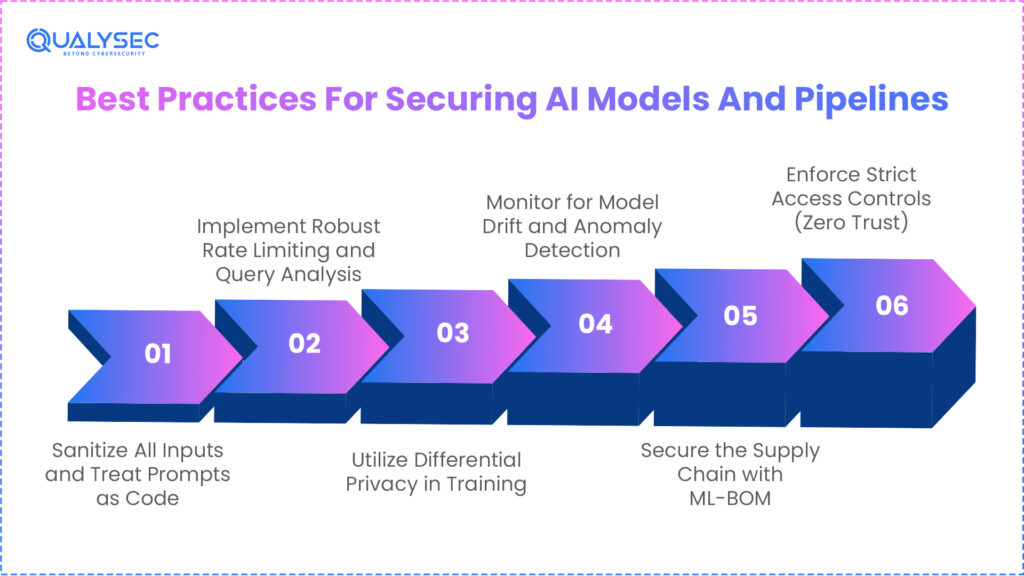

Best Practices For Securing AI Models And Pipelines

- Sanitize All Inputs and Treat Prompts as Code: Treat every user request or outside data point as potentially harmful by cleaning all inputs and treating prompts as code. Prompts are the new code in the world of LLMs; neglecting to clean them results in OWASP LLM01.

- Implement Robust Rate Limiting and Query Analysis: Use strong rate limiting and query analysis to restrict the volume and frequency of inquiries a single user may make, therefore averting model theft and extraction attacks. This stops attackers from scraping the reasoning of your model to create a rival shadow model.

- Utilize Differential Privacy in Training: Mask individual data points in your training set to prevent model inversion threats. This guarantees that even if a hacker regularly questions the model, they cannot recreate the private PII of your customers.

- Monitor for Model Drift and Anomaly Detection: Establish real-time alerts for changes in your model’s accuracy or output distribution to detect model drift and anomaly detection. Often, the first indication of a quiet poisoning or an evasion attempt is an abrupt behavior change.

- Secure the Supply Chain with ML-BOM: Maintain a Machine Learning Bill of Materials (ML-BOM) to track the provenance of every library, pre-trained model (like those on Hugging Face), and dataset used in your development lifecycle.

- Enforce Strict Access Controls (Zero Trust): IBM claims that 97% of AI-related breaches come about because of subpar access limitations. Make sure model weights and training logs are encrypted and accessible only by MFA-secured least-privilege accounts.

How Penetration Testing Companies Perform AI Vulnerability Assessments

Elite companies do not simply use a scanner and give a generic report. They use deep, hands-on investigation into linked exploits, combined with high-speed automated artificial intelligence security assessment technology. A normal engagement starts with Adversarial Red Teaming, in which testers imitate a cunning opponent. A tester, for instance, could discover a little network flaw granting access to a non-sensitive dataset, then use that access to poison the data, which ultimately reaches the training pipeline and produces a backdoor in the ultimate product model.

Model fuzzing is the process of sending millions of random, boundary-pushing inputs to locate the areas where the model’s neurons start to fire erroneously. For generative artificial intelligence, businesses apply jailbreak testing to determine if the model may be forced to disregard its security rules. Finally, the analysis checks the MLOps Pipeline to make sure the Model Registry is secure, and no one can replace a genuine model with a harmful one during the deployment stage. This all-encompassing perspective is the only approach to genuinely protecting an intelligent system.

Why Choose Qualysec For AI Vulnerability Assessment Services?

We at Qualysec cross boundaries to make sure your invention stays a competitive edge, not a liability; we don’t only check boxes. Particularly suited for the complexities of the 2026 threat environment, where conventional security is insufficient, our AI vulnerability assessment tools have been developed. In the age of artificial intelligence, one flaw could destroy your whole brand reputation.

- Hybrid Testing Model: To guarantee zero false positives and a 100% detection rate of logical flaws, we combine deep-dive manual penetration testing with high-speed automated scanning.

- End-to-End Pipeline Security: Offering a 360-degree barrier for your artificial intelligence ecosystem, we secure your data ingestion, MLOps, and cloud infrastructure rather than only evaluating the model.

- Expert Red Teaming: Our team mimics complex hostile attacks like context-window poisoning and membership inferencing that common instruments just miss.

- Developer-Friendly, Actionable Reports: You receive a prioritized roadmap with explicit, technical remediation measures that help your team toughen your artificial intelligence against actual hackers without compromising development.

Don’t let your AI become a liability. Book an AI Security Consultation with Qualysectoday to identify hidden risks before they impact your reputation!

Conclusion

Although it has produced a fresh category of dangers that conventional security just cannot address, the change toward AI-driven business models is the most important technical development of our decade. When your most valuable asset, your data and intelligence, is on the line, a conventional security posture is inadequate. The argument of innovation versus security is finished as laws like the EU AI Act go completely into effect; today, one has to have security before they can innovate.

By doing a strict AI vulnerability assessment, you are safeguarding not only code but also your company’s future, reputation, and competitive advantage in an increasingly automated world. Secure your pipeline today to make sure your artificial intelligence remains an asset for expansion instead of a backdoor for opponents.

Partner with us to protect your AI systems, reduce risk, and build secure, trustworthy AI solutions.

Find Your Perfect Security Partner

Frequently Asked Questions

1. What is an AI vulnerability?

It refers to a flaw in an artificial intelligence model, its training data, or its deployment pipeline that lets an attacker modify results, steal confidential information, or influence automated decisions.

2. What is the security assessment of AI?

Concentrating on dangers like data poisoning, adversarial evasion, and prompt injection, this is an exhaustive approach to finding and reducing risks throughout the AI lifecycle.

3. What are the 5 steps of vulnerability management?

The regular framework comprises 1. Discovery of assets; 2. Prioritization of risks; 3. Assessment of vulnerabilities; 4. Remediation of flaws; and 5. Verification by retesting.

4. How is AI used in vulnerability management?

AI examines massive volumes of security telemetry to give important vulnerabilities priority and project possible attack routes across hybrid-cloud environments with sophisticated setups.

5. How often should organizations perform AI vulnerability assessments?

Ideally, quarterly or anytime there is a major model’s training data, base architecture, or outside integration change, assessments should be conducted.

6. What is the difference between AI vulnerability assessment and AI penetration testing?

While penetration testing involves actively exploiting those vulnerabilities to gauge real-world business impact (the stress test), an AI vulnerability assessment finds and catalogues possible flaws (the check-up).

0 Comments