Introduction

When hospitals depend on artificial intelligence for diagnostics, monitoring, and medical devices, how safe is patient data? AI cybersecurity in healthcare has become a growing concern as cyber attackers are not lagging while healthcare systems start employing artificial intelligence at scale. Usually quicker, they are changing just as fast. The most sensitive data on the earth is already handled by the healthcare industry, and artificial intelligence has significantly improved its visibility and worth.

With a worldwide average healthcare data breach cost of $7.42 million in 2025, healthcare was once again the most costly sector for breaches for the fourteenth straight year (IBM Cost of a Data Breach Report). The attack surface has grown beyond conventional IT systems as artificial intelligence is included in diagnostics, radiology, patient triage, and connected medical devices.

Modern AI cybersecurity healthcare transcends network or database protection. It is about finding real-time learning systems that grow, make decisions, and affect patient outcomes. Attacks use artificial intelligence to automate reconnaissance, abuse logical faults, and circumvent static barriers.

Conventional cyber security healthcare approaches find difficulty in identifying these machine-speed threats. Static regulations, signature-based detection, and frequent audits cannot match opponents who constantly change.

This manual examines actual AI-driven attack scenarios, AI cybersecurity healthcare threats, and how healthcare institutions can safeguard patient information while promoting innovation. It also clarifies why thorough expert-led security validation, including manual penetration testing, is now crucial.

Understanding AI In Healthcare Cybersecurity

Deeply integrated in healthcare processes, artificial intelligence powers everything from autonomous medical devices to radiology tools and clinical decision support. From a cyber security healthcare viewpoint, this combination totally modifies the attack surface by including unconventional aspects like machine learning models, training datasets, and autonomous decision-making agents.

While traditional security paradigms emphasize securing networks and servers, AI security in healthcare calls for a two-layered approach that safeguards both the infrastructure and the intelligence layers. This entails defending the AI itself from manipulation as well as employing artificial intelligence for defense, say, automating threat detection. By 2026, healthcare systems’ dependence on AI security will be a need since cybercriminals are currently deploying artificial intelligence to initiate high-speed reconnaissance and circumvent human detection.

Efficient healthcare AI risk management starts with realizing that your patient records are just as precious and susceptible as your AI models. A single flaw in an AI triage bot or an unencrypted training log might cause a large AI healthcare data breach. Organizations need to give visibility into their AI pipelines top priority so that every diagnostic suggestion or automated patient contact is validated and safe.

Secure Your Clinical AI Assets Today: Is your AI infrastructure resilient enough to withstand a high-speed automated exploit? Qualysec’s specialized AI security auditing shields your medical models from external attackers and internal leaks. Ensure your diagnostic tools remain a clinical asset, not a security liability. Build a secure AI ecosystem with Qualysec!

The Healthcare AI Threat Landscape

Healthcare executives face a converging threat environment as we approach 2026, when attackers realize that data integrity problems and hospital downtime provide the most leverage. These rivals utilize artificial intelligence to automate the identification of misconfigured cloud buckets and unpatched medical devices in seconds, eliminating manual labor. At this pace, conventional, manual security reaction systems become outmoded.

Moreover, the growth of artificial intelligence poses difficulties in medicine, which comprises advanced Deepfake Engineering, in which fraudsters utilize AI-generated audio and video to simulate hospital executives or leading doctors. These social engineering attacks help to enable illegal data transfers or allow administrative access to networks that are forbidden. The menace is about trust theft, not just file locks.

Automatically Detected Scouting

Attackers quickly examine healthcare settings for exposed APIs, unpatched medical equipment, and misconfigured cloud storage using artificial intelligence. Once weeks of effort were needed; seconds of it are now available.

Deepfake Engineering

To imitate hospital administrators, merchants, or clinicians, voice and video deepfakes produced by artificial intelligence are employed. These threats help con artists steal money and gain illegal access to sensitive systems.

Malware driven by AI

Modern kinds of malware abuse trusted system processes to get around Endpoint Detection and Response systems. These attacks often go unnoticed while softly exfiltrating patient data. Learn about AI-Powered Malware Detection.

The following table lists the major AI-driven dangers now confronting healthcare professionals:

| Threat Type | Mechanism | Potential Healthcare Impact |

| Automated Reconnaissance | AI scans for network vulnerabilities at scale. | Rapid identification of unpatched medical devices. |

| Deepfake Engineering | AI-generated voice/video impersonation. | Unauthorized access to PHI via social engineering. |

| Model Inversion | Extracting training data from a live AI model. | Direct leakage of sensitive patient medical histories. |

| Evasion Attacks | Modifying inputs to “trick” a diagnostic AI. | Incorrect clinical outcomes and patient safety risks. |

Protect Your AI Systems from Cyber Threats

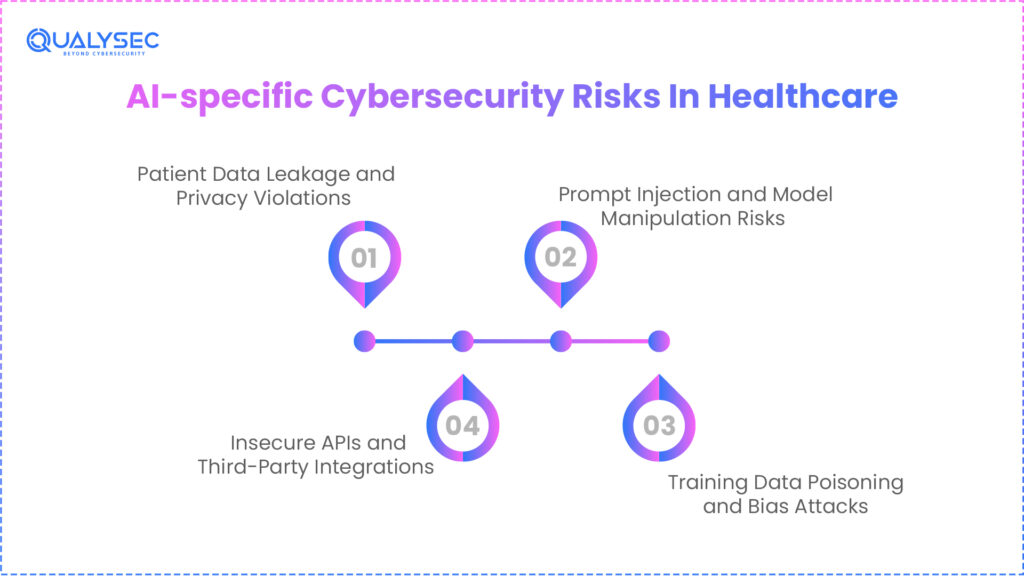

AI-specific Cybersecurity Risks In Healthcare

The incorporation of artificial intelligence into medical procedures has exposed a new kind of digital flaw that goes beyond conventional software faults. The healthcare system’s intelligence layer, the data, models, and decision-making logic, is under target. The stakes for artificial intelligence cybersecurity in healthcare are exceptionally high since hospital AI systems often have immediate access to Electronic Health Records (EHRs) and real-time patient monitoring streams. Attackers are starting to modify the exact algorithms doctors employ for life-saving decisions instead of merely stealing information.

Knowing that these models are dynamic entities that learn from the data they consume helps one to grasp AI security in medicine. The whole clinical basis might be compromised if the interface to the model is unprotected or the data is untrustworthy. Organizations have to evolve from a stance of guarding boxes and wires to defending weights and biases. The main AI-specific risks now affecting the healthcare scene are listed here.

1. Patient Data Leakage and Privacy Violations

Unknowingly retaining sensitive protected health information, big language models can be used for clinical documentation, medical research, and patient interaction tools. Unlike conventional databases, LLMs may store patient data fragments within learned parameters, which makes detection and management of leakage more difficult.

Attackers may construct targeted prompts to obtain patient names, diagnoses, or even identifiers like Social Security numbers if artificial intelligence systems are not appropriately separated and protected. This generates direct AI in healthcare data breach situations, devoid of traditional alerts.

Without proper anonymization, AI chatbots record conversations for later learning, therefore increasing this risk. Many healthcare institutions also do not encrypt logs produced by artificial intelligence tools, therefore exposing unprocessed patient data to both internal and external dangers.

Data reuse across testing, development, and production settings aggravates the problem even more. Sensitive data moves discreetly around systems when training sets are duplicated without strong supervision.

Therefore, healthcare’s AI data security in healthcare should emphasize data flow visibility rather than just storage protection. Organizations need to appreciate how patient information flows via prompts, models, logs, and outputs.

2. Prompt Injection and Model Manipulation Risks

Rapid injection is among the fastest-growing dangers in artificial intelligence security in medicine. It entails giving artificial intelligence systems malevolent instructions to bypass existing defenses or change behavior. Unlike malware, these attacks use logic rather than code.

Attackers can prioritize unwanted demands in medical settings by tampering with artificial intelligence triage robots, clinical aides, or scheduling systems. This might give access to patient data or have an impact on medical decisions without violating authentication systems.

Since artificial intelligence models are made to adhere to instructions, they can be tricked into disclosing sensitive information, administrative roles, or internal system prompts. This renders healthcare’s AI data security in healthcare especially susceptible to conversational abuse.

Traditional security solutions have trouble finding prompt-based attacks since no harmful files or network anomalies become clear. The system operates as designed, just not as desired.

These threats can result in compromised patient data exposure, changed diagnosis suggestions, and avoided authorization processes. This gradually undermines clinical faith in artificial intelligence (AI) systems.

3. Training Data Poisoning and Bias Attacks

One of the most serious AI issues in healthcare, training data poisoning has subtle but long-lasting effects. By introducing malicious or biased data into training pipelines, attackers can reduce model performance over time without obvious notice.

In clinical situations, even a minor fall in diagnostic accuracy can have very terrible effects. Studies demonstrate how steeply the misdiagnosis rates and patient damage may rise as medical AI systems’ accuracy declines by 20 to 25%.

Poisoned data can also create prejudice, which results in inconsistent treatment advice for different population groups. This exposes healthcare providers to ethical violations and regulatory investigations.

Poisoned inputs may survive throughout retraining cycles unless strong validation measures are in place because artificial intelligence models learn constantly. This turns healthcare AI risk management into a continuous obligation instead of a single chore.

Data provenance checks, retraining audits, and model performance monitoring are required of groups to avoid long-term deterioration.

4. Insecure APIs and Third-Party Integrations

Most healthcare AI systems use APIs to communicate with outside vendors, imaging devices, and electronic health records. Prime threat targets are these APIs since they frequently run with little monitoring and broad permissions, making API security a critical concern and a prime target for attackers.

Attacks may move laterally across healthcare systems without strong medical device AI security measures by means of compromised APIs. One poor integration might reveal several systems.

Because of their frequent data exchange and outside access needs, cloud-based artificial intelligence solutions and remote patient monitoring devices are particularly susceptible. Many outside suppliers do not satisfy the same security levels as medical professionals.

Therefore, AI security for healthcare systems must span beyond internal defenses to comprise vendor risk assessment, API authentication controls, and constant monitoring.

Go Beyond Automated Scans: Generic scanners miss the complex logic flaws in healthcare AI. Qualysec’s elite manual penetration testing uncovers hidden risks like prompt injection and unauthorized PHI extraction before they can be exploited. Protect your patient data with deep-dive analysis from certified experts. Schedule your comprehensive AI security audit!

Impact of AI Cyber Attacks on Patient Safety and Trust

Financial loss is not the only outcome of a successful healthcare AI attack; direct danger to human life is also present. The validity of every later medical decision is brought into doubt when a diagnostic artificial intelligence is compromised. The result is physical damage if a model tampering causes an algorithm to propose an inappropriate dose or if a monitoring system fails to notify personnel of a cardiac event owing to an evasion attack. This change converts the core patient safety obligation from an IT need to one using medical device AI security. This is where approaches used in Cybersecurity for AI in Fintech are increasingly relevant.

Moreover, the erosion of trust might be irreversible. Patients expect perfect privacy and provide their most personal information. Studies show that 71% of patients would lose faith in a provider that doesn’t safeguard their data. Therefore, a mass exodus of patients might result from a prominent healthcare data breach caused by artificial intelligence. Apart from the clinical and reputational harm, companies risk severe legal penalties and lawsuits that could cripple even the biggest healthcare systems.

Healthcare AI Impact Matrix

| Impact Category | Clinical Consequence | Operational/Legal Risk |

| Clinical Disruption | Delayed life-saving treatments due to system lockouts. | Significant increase in medical malpractice liability. |

| Diagnostic Integrity | Incorrect dosages or missed diagnoses from poisoned models. | Failure to meet FDA AI cybersecurity requirements. |

| Data Sovereignty | Unauthorized extraction of PHI through model inversion. | Heavy HIPAA fines and OCR investigations. |

| Patient Trust | Loss of confidence in automated clinical tools. | 71% of patients are likely to switch healthcare providers. |

Explore more about AI for Cyber Attack Prevention- Stop Threats Before They Happen.

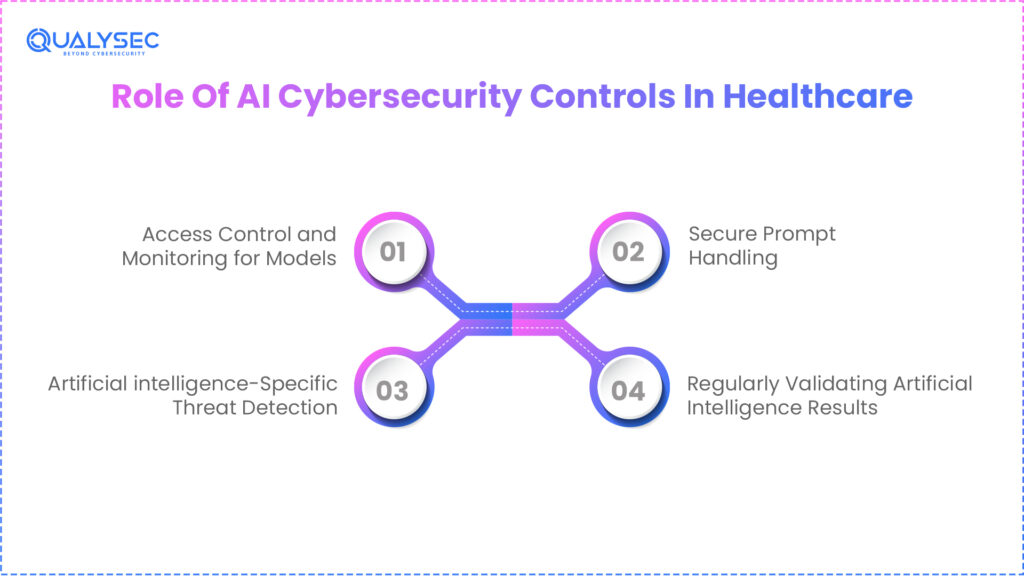

Role Of AI Cybersecurity Controls In Healthcare

Healthcare organizations have to use AI-specific cybersecurity measures going beyond their typical perimeter defenses in order to fight AI-driven threats. This approach highlights the role of AI in cybersecurity, where self-learning systems create a dynamic baseline of normal behavior for every hospital network user, medical device, and data stream. Understanding baseline behaviors enables these systems to spot small variances, such as a connected insulin pump abruptly trying to contact an unapproved external server and immediately stop the threat. This speed is essential since it stops nefarious lateral movement long before a human security analyst might even interpret the first alert.

Model access control and monitoring are among the important framework controls limiting interaction with sensitive diagnostic algorithms. Moreover, safe, quick processing is imperative to stop users or attackers from setting off hallucinations or data extraction using malicious queries. Additionally, used to find fileless malware and sophisticated scripts developed to circumvent conventional antivirus programs, AI threat detection helps. Finally, ongoing validation of artificial intelligence results guarantees that the model’s medical recommendations stay accurate over time, guarding against the gradual drift of the model or silent corruption.

For medical professionals, the only way to guarantee patient safety is by seeing how artificial intelligence behaves in real-world clinical contexts as opposed to just in a controlled testing setting. Acting as a digital safety net, these controls offer the needed supervision for autonomous systems managing life-critical operations. Implementing such a demanding system not only protects the information but also guarantees the hospital’s operational continuity.

Explanation of Important AI Cyber Security Controls

-> Access Control and Monitoring for Models

Like vital medical resources, artificial intelligence models need protection. Role-based access guarantees that only permitted clinicians, data scientists, or systems can engage with models. Continuous monitoring finds either unusual usage patterns that could indicate model abuse or unauthorized inference requests.

-> Secure Prompt Handling

In healthcare AI security, prompts are a primary attack path. Secure prompt management guarantees that malicious inputs cannot override system commands, retrieve PHI, or change clinical reasoning. This lowers the probability of artificial intelligence in cases involving healthcare data leaks resulting from quick injection.

-> Artificial Intelligence-Specific Threat Detection

Traditional security solutions overlook AI cybersecurity threats, including artificial intelligence logical attacks. Rather than only network traffic or files, AI-specific threat detection emphasizes model behavior, decision consistency, and output anomalies.

-> Regularly Validating Artificial Intelligence Results

Continuous verification of healthcare AI outputs guarantees precision and safety. Unexplained fluctuations, bias, or output drift can suggest compromised or poisoned training data.

Healthcare groups want actual AI visibility, not just laboratory-based performance indicators.

Download a sample pentesting report to see how AI cybersecurity controls are tested in healthcare environments

Get a Free Sample Pentest Report

Penetration Testing For Healthcare AI Systems

Preserving AI security in healthcare calls for more than just traditional vulnerability scans. Modern medical security now necessitates AI red teaming and focused penetration testing against the specific logic of machine learning models. Unlike conventional software, artificial intelligence systems can be compromised via their inputs and reasoning rather than just through code flaws. Thus, to guarantee that their patient records remain impenetrable, healthcare institutions need to test for rapid injection events, simulate instances of abuse, and prevent data extraction without consent.

Because automated scanners frequently lack awareness of clinical context or the probabilistic nature of artificial intelligence outputs, manual testing remains the ultimate gold standard in this field. A human tester can simulate a clever opponent who chains several small faults together to increase permissions inside an artificial intelligence agent. For example, a manual tester would probe whether a triage bot can be persuaded to provide administrative access to an Electronic Health Record (EHR) system through logical loopholes, even as an automated tool would check if a port is open.

Staying ahead of the curve depends on continual testing with AI application VAPT becoming increasingly important. Since artificial intelligence (AI) systems are always changing via model updates and new data. This forward-looking approach guarantees that newly discovered vulnerabilities, such as model inversion or adversarial evasion, are found and fixed before cybercriminals can use them.

Key AI Security Testing Scenarios in Healthcare

| Test Category | Target Area | Objective |

| Prompt Injection | AI Assistants & Chatbots | Test if the system can be forced to ignore safety filters. |

| Model Inversion | LLMs & Research Models | Verify if sensitive training data (PHI) can be extracted via queries. |

| Adversarial Evasion | Diagnostic Tools | Ensure small input changes don’t trick the AI into wrong diagnoses. |

| Privilege Escalation | AI Agents & APIs | Check if AI agents can be manipulated to gain unauthorized system access. |

You might like to know more about 5 Critical AI Security Vulnerabilities Every CTO Should Know

Regulatory And Compliance Considerations

Compliance in 2026 has advanced significantly beyond the fundamental HIPAA pillars. Medical device manufacturers are now obligated under the FDA’s AI cybersecurity requirements, specifically Section 524B of the FD&CAct to submit a thorough Secure Product Development Framework (SPDF). This rule represents a move toward a Total Product Life Cycle (TPLC) strategy, whereby manufacturers must show how they will monitor, identify, and handle cyber threats even after a gadget has been utilized in a clinical environment.

Regulators now want healthcare institutions to show proactive management of artificial intelligence risk instead of only reactive incident response. This means keeping a thorough Software Bill of Materials (SBOM) for every AI tool to trace vulnerabilities in third-party libraries. Failing to protect these intelligent systems could have serious repercussions, including multimillion-dollar legal penalties, required product recalls, and the loss of important healthcare licenses.

Drivers of Healthcare Compliance 2026

- HIPAA and GDPR: Guaranteed total privacy and independence of sensitive patient medical documents.

- FDA Section 524B: FDA Section 524B demands post-market vulnerability tracking and repairing for all cyber gadgets.

- NIST AI RMF: Following the NIST AI Risk Management Framework to guarantee openness and justice.

- EU AI Act: Navigating tight classification for high-risk artificial intelligence systems employed in medical diagnosis.

Fast-Track Your Compliance Journey: Navigating the latest FDA AI cybersecurity requirements shouldn’t slow down your innovation. Qualysec provides audit-ready reports and seamless compliance testing to satisfy the most stringent global regulatory bodies. Bridge the gap between cutting-edge AI and secure, compliant care delivery.

See How We Helped Businesses Stay Secure

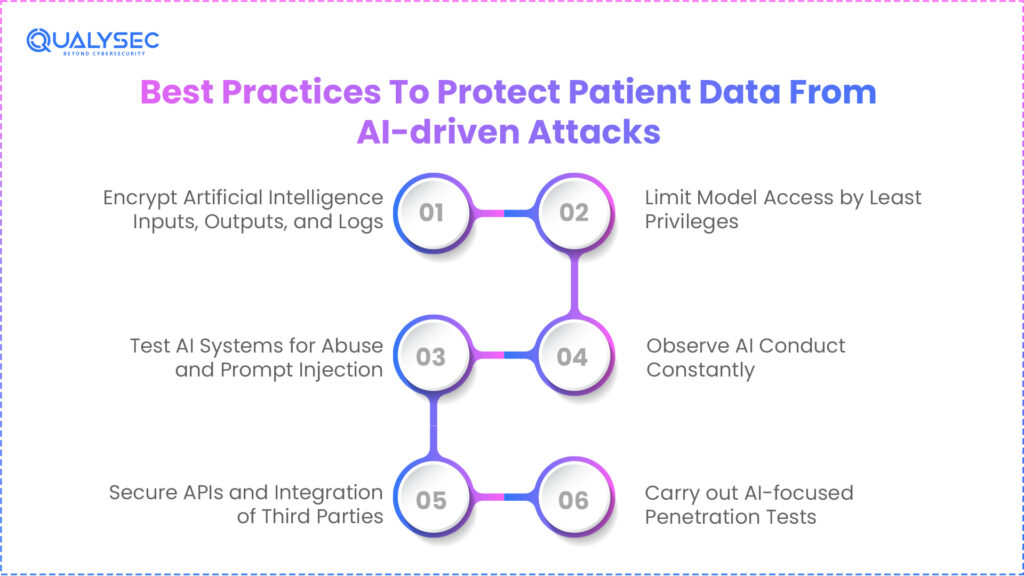

Best Practices To Protect Patient Data From AI-driven Attacks

Companies need to embrace a defense-in-depth strategy, tackling the data at every phase of its life to improve AI cybersecurity in healthcare. This begins with the strong encryption of all AI logs, outputs, and inputs. Hospitals can stop attackers from obtaining PHI by making sure even the memory of a large language model is encrypted using model inversion methods. Moreover, the concept of least privilege ought to be strictly observed for model access to guarantee that AI tools merely have access to the particular datasets needed for their immediate use.

Additionally, groups should give high importance to the continuous monitoring of artificial intelligence behavior so as to find any abnormalities or drift that might point to an ongoing attack or data poisoning. Equally important is securing third-party integrations and APIs since these are typically the weakest points for data breaches. Finally, regular AI-focused penetration testing lets security teams find and fix logic faults before they lead to a large healthcare data breach caused by artificial intelligence.

Extended Best Practices

- Encrypt Artificial Intelligence Inputs, Outputs, and Logs: Encryption keeps attackers from abusing exposed prompts, logs, or inference findings that could include PHI.

- Limit Model Access by Least Privileges: Only authorized systems and users should work with artificial intelligence models. Too much open access raises breach risk.

- Test AI Systems for Abuse and Prompt Injection: Through regular adversarial testing, artificial intelligence assistants are prevented from being persuaded to leak information or circumvent restrictions.

- Observe AI Conduct Constantly: Behavioral monitoring finds anomalies, drift, or suspicious output patterns pointing to compromise.

- Secure APIs and Integration of Third Parties: Regular authentication, monitoring, and testing of APIs linking artificial intelligence systems to EHRs or suppliers is required.

- Carry out AI-focused Penetration Tests: Manual testing confirms security assumptions under actual threat circumstances.

Security teams have to work closely with data science and clinical teams. Artificial intelligence security has to work together with other systems.

Choosing The Right Healthcare AI Cybersecurity Partner

Not every security provider has the specific knowledge needed to negotiate the complexity of medical device AI security. Healthcare leaders must seek a demonstrated history in the medical field and specialized AI-specific testing skills when assessing a partner. A vendor who only knows conventional IT will probably overlook the subtle dangers connected with neural networks and automatic clinical agents.

A great partner should be well-versed in medical laws and can assist you in negotiating the most recent FDA AI cybersecurity standards. Manual testing must also be given top priority as contemporary cybercriminals’ creative attacks frequently go beyond automated protection. The ideal partner works as an extension of your team, enabling you to keep patient trust without slowing down the rate of medical innovation, and so minimizing risk.

Expertise In Healthcare Regulations And AI Security

In a high-stakes field, healthcare security, a single error could result in legal disaster as well as physical damage to patients. Your cybersecurity ally, hence, needs a close grasp of the patient safety effects of every security measure they advise. This includes knowing how to trade off the need for clinicians to have rapid, life-saving access to information during crises with strict data lockdowns.

Your partner has to be acquainted with the HHS Cybersecurity Performance Goals and other changing standards. Building confidence among patients, doctors, and regulators depends on this knowledge. A partner who knows the clinical soul of the company can put in place security policies supporting, rather than blocking, the main goal of patient care.

Importance Of Manual And Continuous Penetration Testing

A one-time yearly audit is no longer adequate in the fast-changing environment of AI security for healthcare systems. AI threats develop at the pace of software upgrades; therefore, a system protected yesterday may be exposed tomorrow. Automated tools just cannot see that certain manual penetration testing is essential for revealing complicated exploit chains where an attacker uses several small flaws to cause a significant breach.

Manual testing particularly reveals logical errors in artificial intelligence processes, including determining if a diagnostic model may be swayed into circumventing its own safety checks. Consistent testing guarantees that your security posture stays immutable as your network integrations evolve and as your models grow from fresh data. Resilient healthcare companies differ from those that make news after a significant violation in their constant attention.

Protect the Heart of Patient Care: Connected medical devices are prime targets for cyberattacks. Qualysec specializes in securing firmware and communication protocols for critical equipment like insulin pumps and imaging systems. Ensure your devices are as safe as the medical care they provide. Schedule a strategy session to secure your medical devices!

Conclusion

For the contemporary medical field, the development of AI cybersecurity healthcare is a double-edged sword. Though AI-powered solutions present the possibility for amazing breakthroughs in operational efficiency and patient care, they also bring a sophisticated new class of dangers that conventional defenses are ill-suited to address. More than just a firewall is needed in 2026 to safeguard patient data; rather, a proactive, human-led, AI-supported defense plan built on regular AI risk assessment and a strong focus on machine learning model integrity.

Healthcare companies can negotiate this digital transition safely by using a zero-trust architecture, carefully following the most recent FDA AI cybersecurity criteria, and teaming up with seasoned security professionals like Qualysec. The aim is evident: to make sure the intelligent hospital of the future continues to be a venue of healing and confidence where creativity never compromises patient safety or privacy.

Schedule a meeting with an expert to understand how AI cybersecurity protects patient data and medical systems.

Speak directly with Qualysec’s certified professionals to identify vulnerabilities before attackers do.

FAQs

1. How secure is AI in healthcare?

Healthcare artificial intelligence is as safe as the testing and safeguards around it. These systems are very susceptible to quick injection, data leakage, and training data corruption that may endanger patient safety if they lack specialized AI security in healthcare guidelines.

2. Which AI tool is used in healthcare cybersecurity?

Self-learning artificial intelligence and AI-powered Endpoint Detection and Response (EDR) are often combined by healthcare organizations for network monitoring. Still, to find actual-world AI challenges in healthcare, manual penetration testing should complement these instruments.

3. What are the biggest AI cybersecurity risks in healthcare?

The main risks include the leaking of PHI via LLMs, poisoning of diagnostic training data, unsecured APIs that permit lateral network movement, and the control of autonomous medical devices.

4. Why is AI cybersecurity critical for healthcare organizations?

This is especially important since artificial intelligence has a direct influence on clinical judgments. An artificial intelligence system breach is a patient safety occurrence that can cause incorrect diagnoses and postponed medical treatments, not only a data loss incident.

5. How can healthcare organizations secure AI systems?

A multi-layered strategy accomplishes security: encrypting all artificial intelligence data, implementing tight access restrictions, following FDA AI cybersecurity standards, and, frequently, manual AI penetration testing.

6. How do healthcare regulations impact AI cybersecurity?

HIPAA, FDA Section 524B, and other rules demand that suppliers and manufacturers employ secure-by-design concepts, keep SBOMs, and continuously monitor vulnerabilities over the lifespan of the artificial intelligence system.

0 Comments