Key Takeaways

- The EU AI Act entered into force on 1 August 2024. Prohibited AI practices have been banned since 2 February 2025, and most requirements, including those for high-risk AI, apply from 2 August 2026.

- Article 99 sets maximum fines for prohibited AI practices of up to EUR 35 million or 7 percent of worldwide annual turnover, whichever is higher.

- Providers and deployers must ensure a sufficient level of AI literacy among the employees and contractors who operate AI systems on their behalf. This obligation has applied since 2 February 2025.

- The Act is also extraterritorial: non-EU firms whose AI systems are placed on the EU market or used in the EU must comply, regardless of where they are headquartered.

- The Digital Omnibus Package, presented by the European Commission on 19 November 2025, proposes simplification amendments that could change key requirements. Companies should track them before finalizing their compliance programs.

Introduction

The clock is running. Most EU AI Act obligations, including the entire framework for high-risk AI systems, apply from 2 August 2026. From that date, national competent authorities in all 27 EU member states can enforce them and impose fines.

The EU AI Act is the world's first comprehensive, binding regulation of artificial intelligence. It classifies AI systems by risk, assigns specific responsibilities to each participant in the AI supply chain, and establishes a governance framework (the AI Office, the AI Board, and national market surveillance authorities) with investigative powers and the ability to impose significant fines.

For businesses that develop, use, import, or distribute AI systems in the European Union, compliance is no longer a future problem. This guide covers the risk tiers, requirements, timeline, governance, penalty regime, and an 8-step compliance checklist you should complete before enforcement begins.

What Is the EU AI Act?

The EU AI Act (Regulation (EU) 2024/1689) is a binding EU law that governs the development, placing on the market, and use of artificial intelligence systems. The European Commission proposed it in April 2021; it was formally adopted in 2024 and entered into force on 1 August 2024.

The Act follows a risk-based approach: the more harm an AI system can cause, the stricter the compliance requirements. It applies to AI systems placed on the EU market or put into service in the EU, regardless of where the provider is established.

Your organization should cite Regulation (EU) 2024/1689 in every compliance report, technical document, and conformity assessment record it compiles. It is the single most important identifier linking any record of conformity to the EU's AI rules.

Did You Know? Italy became the first EU member state to adopt a national AI law complementing the Act. Italy's Law No. 132/2025, in force since 10 October 2025, provides for fines of up to EUR 774,685 and creates a criminal offense for the unlawful dissemination of AI-generated deepfakes, punishable by up to five years in prison.

The Four Tiers of the EU AI Act Risk Classification

The risk classification system is the structural basis of the entire EU AI Act compliance regime. Your organization must assess and classify every AI system it develops or deploys before it can take any further compliance steps; classification is the starting point of every EU AI Act risk assessment.

| Risk Tier | Definition | Examples | Obligations | Enforcement Deadline |
|---|---|---|---|---|
| Unacceptable Risk (Prohibited) | AI systems that pose clear threats to fundamental rights, safety, or democratic values | Social scoring; real-time remote biometric identification in publicly accessible spaces for law enforcement (with narrow exceptions); subliminal manipulation; emotion recognition in workplaces and education | Complete ban: must be removed from operation | 2 February 2025 (already in force) |
| High Risk (Annex III) | AI systems used in critical sectors or affecting fundamental rights | AI in recruitment/HR, credit scoring, law enforcement evidence evaluation, border control, judicial decisions, critical infrastructure | Risk management system, data governance, technical documentation, human oversight, conformity assessment, CE marking, EU database registration | 2 August 2026 |
| High Risk (Annex I: Regulated Products) | AI embedded in products already regulated under EU product safety law | Machinery, medical devices, aviation equipment, motor vehicles | Same as Annex III, plus product-specific safety obligations | 2 August 2027 (extended transition) |
| Limited Risk | AI with specific transparency obligations | Chatbots; deepfake content generators; emotion recognition (outside prohibited contexts); AI-generated text | Disclose the AI nature of the system to users; label synthetic content in a machine-readable format | 2 August 2026 |
| Minimal Risk | AI with no specific obligations | Spam filters, AI in video games, recommendation systems that do not meet high-risk criteria | Voluntary codes of conduct encouraged; AI literacy obligation applies | Ongoing (AI literacy: February 2025) |

A Remark on the Complexity of Classification

Risk classification is not always obvious. A tool developed as a general productivity aid can still become high-risk under Annex III once it is used for recruitment screening or otherwise affects employment decisions. The European Commission committed to publishing guidelines, with practical examples of borderline cases, by February 2026. Until that guidance is final, organizations should classify conservatively and document their rationale.

Scope of the EU AI Act: Who Should Comply?

EU AI Act obligations apply across the entire AI supply chain. Your specific duties depend on your role in that chain, and the distinction matters greatly in practice, including for AI security and risk management.

| Role | Definition | Primary Obligations |
|---|---|---|
| Provider | Develops or places an AI system on the EU market under their name | Heaviest obligations: conformity assessment, technical documentation, CE marking, EU database registration, post-market monitoring, incident reporting |
| Deployer | Uses an AI system in a professional context | Implement provider instructions; fundamental rights impact assessment where required; maintain usage logs; ensure human oversight |
| Importer | Brings a non-EU AI system into the EU market | Verify provider compliance; do not place non-compliant systems on the market; maintain documentation |
| Distributor | Makes an AI system available without modifying it | Verify CE marking and documentation; do not distribute non-compliant systems |
| Authorised Representative | Non-EU provider's designated EU contact | Point of contact for authorities; ensure compliance documentation is maintained |

Are Non-EU Companies Subject to the EU AI Act?

Yes, and this is one of the most commonly misunderstood points of the Act. The regulation applies to any provider that places an AI system on the EU market or puts it into service in the EU, irrespective of where that provider is based. An Indian, US, or Japanese company that supplies an AI system to customers in the EU is a provider under the Act and must fulfill all relevant obligations.

Non-EU providers of high-risk AI systems and of general-purpose AI models must appoint an authorized representative established in the EU. This representative is the main point of contact for national competent authorities and must be identified in the AI system's compliance documentation.
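The scope test above can be sketched as a simple decision rule. This is a minimal illustration, not legal advice: the `Offering` fields and helper names are hypothetical, the sketch models only the triggers discussed here (placing on the EU market or putting into service in the EU), and it assumes the authorized-representative duty for high-risk systems (Article 22). It ignores the Act's exclusions, the GPAI case, and other edge cases.

```python
from dataclasses import dataclass

# EU member state codes (ISO 3166-1 alpha-2).
EU_MEMBER_STATES = {
    "AT", "BE", "BG", "HR", "CY", "CZ", "DK", "EE", "FI", "FR", "DE", "GR", "HU", "IE",
    "IT", "LV", "LT", "LU", "MT", "NL", "PL", "PT", "RO", "SK", "SI", "ES", "SE",
}

@dataclass
class Offering:
    """Hypothetical description of one AI system offered by a provider."""
    provider_country: str         # where the provider is established
    placed_on_eu_market: bool     # made available to customers in the EU
    put_into_service_in_eu: bool  # deployed for use inside the EU
    high_risk: bool

def ai_act_applies(o: Offering) -> bool:
    # The provider's location is irrelevant; only the EU touchpoint matters.
    return o.placed_on_eu_market or o.put_into_service_in_eu

def needs_authorised_representative(o: Offering) -> bool:
    # Assumption: modeled for high-risk systems only (Article 22).
    return ai_act_applies(o) and o.high_risk and o.provider_country not in EU_MEMBER_STATES

indian_hr_tool = Offering("IN", placed_on_eu_market=True, put_into_service_in_eu=False, high_risk=True)
print(ai_act_applies(indian_hr_tool), needs_authorised_representative(indian_hr_tool))  # True True
```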

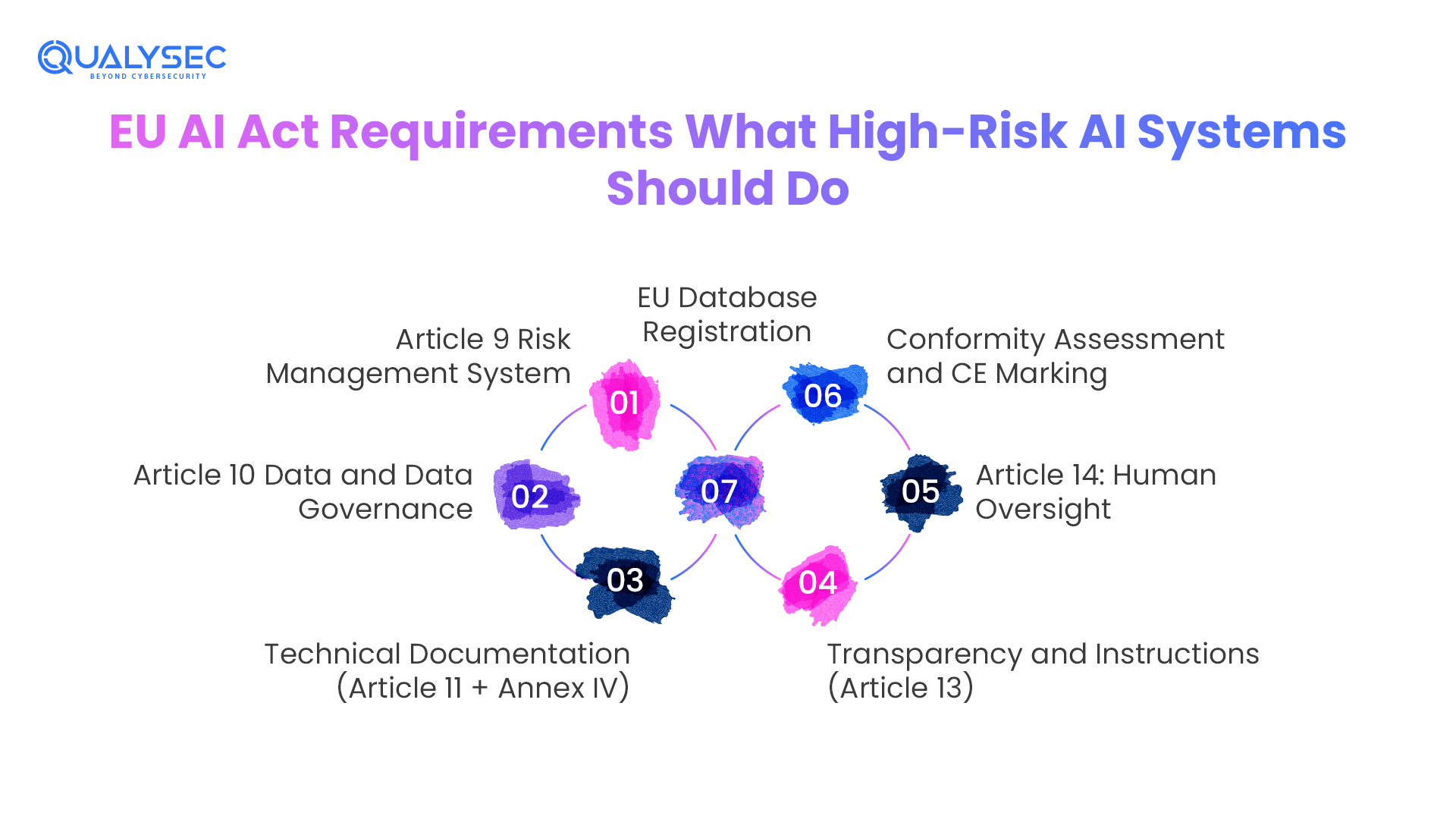

EU AI Act Requirements: What High-Risk AI Systems Should Do

For organizations developing or deploying high-risk AI systems as defined in Annex III, the EU AI Act's requirements are comprehensive and multi-dimensional. Each obligation below maps to a specific article of Regulation (EU) 2024/1689.

Article 9: Risk Management System

Providers must establish a documented, continuous process to identify, estimate, evaluate, and mitigate the risks posed by the AI system. This is not a one-time evaluation: the process runs throughout the system's lifecycle and must be revisited whenever the system changes significantly.
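A continuous risk process is easier to audit when it lives in a structured register. The sketch below is one possible shape, assuming a hypothetical 1-5 likelihood and severity scale and an illustrative acceptance threshold; Article 9 requires a documented, iterative process but does not prescribe any scoring method. Each call to `review` records why the assessment was re-run, which leaves a trail of periodic and change-driven reviews.

```python
from __future__ import annotations
from dataclasses import dataclass, field
from datetime import date

ACCEPTANCE_THRESHOLD = 8  # hypothetical: scores above this need a documented mitigation

@dataclass
class Risk:
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain), hypothetical scale
    severity: int    # 1 (negligible) to 5 (critical), hypothetical scale
    mitigation: str = ""

    @property
    def score(self) -> int:
        return self.likelihood * self.severity

@dataclass
class RiskRegister:
    system: str
    risks: list[Risk] = field(default_factory=list)
    reviews: list[tuple[date, str]] = field(default_factory=list)

    def review(self, on: date, trigger: str) -> list[Risk]:
        """Log a review (periodic or after a significant change) and return
        risks above the threshold that still lack a mitigation."""
        self.reviews.append((on, trigger))
        return [r for r in self.risks if r.score > ACCEPTANCE_THRESHOLD and not r.mitigation]

register = RiskRegister("cv-screening-model")  # hypothetical system name
register.risks.append(Risk("Bias against older applicants", likelihood=3, severity=4))
register.risks.append(Risk("Stale training data", likelihood=2, severity=3,
                           mitigation="Quarterly retraining"))
open_risks = register.review(date(2026, 3, 1), "model retrained on new data")
print([r.description for r in open_risks])  # ['Bias against older applicants']
```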

Article 10: Data and Data Governance

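Article 10 requires training, validation, and testing datasets to be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete in view of the system's intended purpose. Providers must also examine their data for possible biases and take measures to detect, prevent, and mitigate them. Organizations should record data provenance, collection methodology, and the steps taken to address statistical bias. Where personal data is involved, this requirement intersects directly with the GDPR.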
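As an illustration of documenting provenance and checking representativeness, the sketch below pairs a hypothetical `DatasetRecord` with a simple share-per-group check. The 10 percent minimum share is an assumption for demonstration only; the Act sets no numeric threshold, and a real bias examination would go well beyond counting records.

```python
from __future__ import annotations
from collections import Counter
from dataclasses import dataclass

@dataclass
class DatasetRecord:
    """Hypothetical provenance record kept alongside each dataset."""
    name: str
    source: str             # where the data came from
    collection_method: str  # how it was gathered
    bias_measures: str      # steps taken to address statistical bias

def representation(groups: list[str]) -> dict[str, float]:
    """Share of records belonging to each group (e.g. an age band)."""
    counts = Counter(groups)
    return {g: n / len(groups) for g, n in counts.items()}

def underrepresented(groups: list[str], min_share: float = 0.10) -> list[str]:
    """Groups below an assumed minimum share of the dataset."""
    return sorted(g for g, share in representation(groups).items() if share < min_share)

train = DatasetRecord("applicants-2024", "internal ATS export",
                      "historical applications", "reweighting by age band")
age_bands = ["18-29"] * 50 + ["30-49"] * 45 + ["50+"] * 5
print(train.name, underrepresented(age_bands))  # applicants-2024 ['50+']
```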

Article 11 and Annex IV: Technical Documentation

Article 11 requires a detailed technical file to be drawn up before the system is placed on the market or put into service, and to be kept up to date. Annex IV sets out its contents, including a general description of the system, design specifications, training methodology, validation and testing procedures, risk management documentation, and the post-market monitoring plan.
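A simple completeness check can flag gaps in a draft technical file before review. The section keys below are hypothetical labels for the items listed in the paragraph above, not the full Annex IV structure, which should be taken from the Regulation itself.

```python
from __future__ import annotations

# Hypothetical keys mirroring the items named above; not the full Annex IV list.
REQUIRED_SECTIONS = (
    "general_description",
    "design_specifications",
    "training_methodology",
    "validation_and_testing",
    "risk_management",
    "post_market_monitoring_plan",
)

def missing_sections(technical_file: dict[str, str]) -> list[str]:
    """Return required sections that are absent or blank in a draft file."""
    return [s for s in REQUIRED_SECTIONS if not technical_file.get(s, "").strip()]

draft = {
    "general_description": "CV ranking model used by recruiters",
    "design_specifications": "Gradient-boosted trees over parsed CV features",
    "training_methodology": "",  # started but still empty
}
print(missing_sections(draft))
# ['training_methodology', 'validation_and_testing', 'risk_management', 'post_market_monitoring_plan']
```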

Article 13: Transparency and Instructions for Use

Providers must design high-risk AI systems to be transparent enough for deployers to interpret their output and use them appropriately. Every system must come with clear instructions for use that describe its capabilities and limitations.

Article 14: Human Oversight

High-risk AI systems must be designed so that humans can effectively oversee them, including the ability to override their output or stop them. Providers should build the system so that a natural person can interpret and override its outputs, and deployers must assign this oversight role to people with the necessary competence, training, and authority.
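One way to build override and stop capabilities into an application is to route every model output through a gate that a named person controls. The `OversightGate` class below is a hypothetical sketch, not a pattern the Act prescribes: the model suggests, the reviewer may override, every decision is logged with the reviewer's identity, and `stop()` halts further automated output.

```python
from __future__ import annotations
from dataclasses import dataclass, field
from typing import Callable, Optional

@dataclass
class OversightGate:
    """Hypothetical wrapper: the model suggests, a named human decides."""
    model: Callable[[dict], str]
    stopped: bool = False
    log: list[tuple[str, str, str]] = field(default_factory=list)

    def stop(self) -> None:
        # Halts all further automated output.
        self.stopped = True

    def decide(self, case: dict, reviewer: str, override: Optional[str] = None) -> str:
        if self.stopped:
            raise RuntimeError("system stopped by human overseer")
        suggestion = self.model(case)
        final = override if override is not None else suggestion
        # Record who decided, what the model suggested, and what was applied.
        self.log.append((reviewer, suggestion, final))
        return final

# Hypothetical screening model used only for illustration.
gate = OversightGate(model=lambda case: "reject" if case["score"] < 0.5 else "shortlist")
print(gate.decide({"score": 0.4}, reviewer="hr.lead", override="shortlist"))  # shortlist
gate.stop()
```

Keeping the model's suggestion and the final decision side by side in the log makes it possible to show, after the fact, where humans actually intervened.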

Conformity Assessment and CE Marking

Before placing a high-risk AI system on the EU market, providers must complete a conformity assessment, either through internal control (self-assessment) or with a notified body, depending on the application area. A successful assessment entitles the provider to draw up an EU declaration of conformity and affix the CE marking.
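The choice of route can be summarized as a small decision function. This is a simplified reading of Article 43, offered as a sketch: Annex I products follow the conformity procedure of their sectoral legislation; Annex III biometric systems (point 1) need a notified body unless harmonised standards or common specifications have been fully applied, in which case the provider may opt for internal control; the remaining Annex III areas use internal control. Edge cases are not modeled.

```python
from __future__ import annotations
from enum import Enum
from typing import Optional

class Route(Enum):
    INTERNAL_CONTROL = "internal control (self-assessment, Annex VI)"
    NOTIFIED_BODY = "notified body involvement (Annex VII)"
    SECTORAL = "procedure under the relevant Annex I product legislation"

def conformity_route(annex: str, annex_iii_point: Optional[int] = None,
                     harmonised_standards_applied: bool = False) -> Route:
    """Simplified reading of Article 43; edge cases are not modeled."""
    if annex == "I":
        return Route.SECTORAL
    if annex == "III":
        if annex_iii_point == 1:  # biometrics
            # Internal control is an option only if harmonised standards
            # (or common specifications) were applied in full.
            return Route.INTERNAL_CONTROL if harmonised_standards_applied else Route.NOTIFIED_BODY
        return Route.INTERNAL_CONTROL  # Annex III points 2 to 8
    raise ValueError("expected annex 'I' or 'III'")

# Employment and recruitment systems fall under Annex III, point 4.
print(conformity_route("III", annex_iii_point=4).value)
# internal control (self-assessment, Annex VI)
```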

EU Database Registration

Before an Annex III high-risk AI system is placed on the market or put into service, the provider must register it in the EU database maintained by the European Commission. Deployers that are public authorities, or that act on their behalf, must also register their use of such systems.


EU AI Act Compliance Timeline: Every Deadline You Need to Know

| Date | Obligation | Who It Applies To |
|---|---|---|
| 1 August 2024 | EU AI Act enters into force | All organizations: the awareness and preparation phase begins |
| 2 February 2025 | Prohibited AI practices are banned; the AI literacy obligation applies | All providers and deployers: immediately actionable |
| 2 August 2025 | GPAI obligations apply; AI Office, AI Board, and Scientific Panel operational; penalty regime active | Providers of GPAI models; member state authorities |
| 2 August 2026 | Most of the Act applies: high-risk AI (Annex III), transparency rules (Article 50), and national enforcement begins | All high-risk AI providers and deployers, and most organizations using AI in professional contexts |
| 2 August 2027 | High-risk AI embedded in regulated products (Annex I); GPAI models placed on the market before 2 August 2025 must comply | Providers of AI in medical devices, machinery, vehicles, and aviation equipment; providers of those earlier GPAI models |
| 31 December 2030 | Large-scale IT systems listed in Annex X | Specific legacy IT systems in law enforcement and border management |
| Ongoing (from August 2026) | Post-market monitoring, incident reporting, and regulatory sandbox participation | All high-risk AI system operators |
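To keep track of which milestones already apply, the timeline can be encoded as data. The sketch below uses the dates from the table; the labels are our own abbreviations, and the ongoing-obligations row is deliberately left out.

```python
from __future__ import annotations
from datetime import date

# Milestones from the timeline table, in chronological order (labels abbreviated).
MILESTONES = [
    (date(2024, 8, 1), "Act enters into force"),
    (date(2025, 2, 2), "Prohibitions and AI literacy"),
    (date(2025, 8, 2), "GPAI obligations, governance bodies, penalties"),
    (date(2026, 8, 2), "High-risk (Annex III) and Article 50 transparency"),
    (date(2027, 8, 2), "High-risk (Annex I) and pre-August-2025 GPAI models"),
    (date(2030, 12, 31), "Annex X large-scale IT systems"),
]

def in_effect(on: date) -> list[str]:
    """Labels of all milestones that apply on the given date."""
    return [label for start, label in MILESTONES if start <= on]

def next_deadline(on: date):
    """The first milestone after the given date, or None if all have passed."""
    upcoming = [(start, label) for start, label in MILESTONES if start > on]
    return upcoming[0] if upcoming else None  # list is chronological

today = date(2026, 1, 15)
deadline, label = next_deadline(today)
print(in_effect(today))
print(label, (deadline - today).days, "days away")
```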

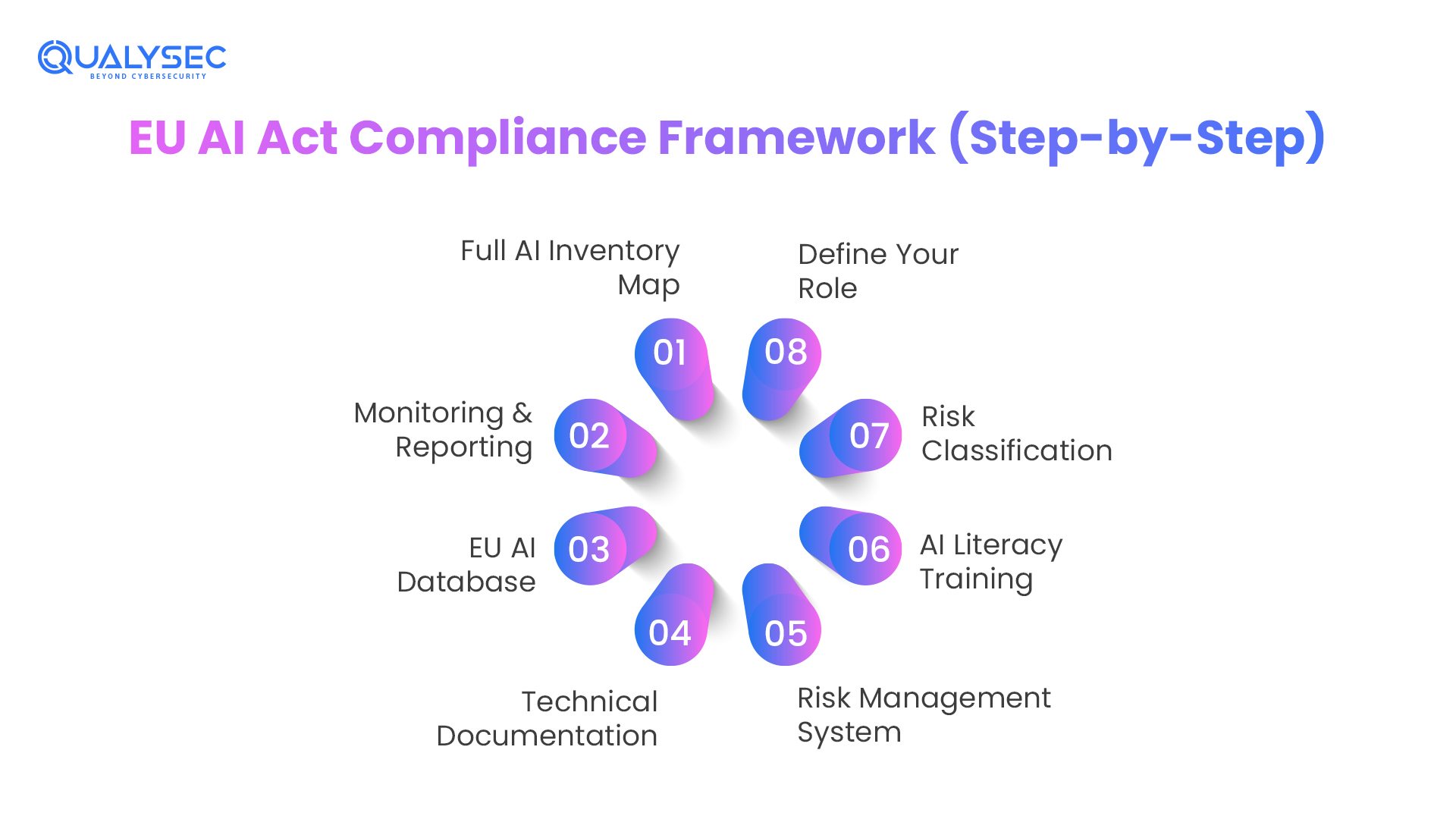

EU AI Act Checklist of Companies: 8 Steps to Compliance

Based on recommendations from compliance practitioners, the checklist below orders the preparation steps by dependency. Steps 1-4 should be completed well before 2 August 2026; steps 5-8 cover the high-risk obligations and the ongoing processes that keep AI compliance and governance current.

1: Full AI Inventory Map

Map every AI system your organization develops, deploys, imports, or distributes in the EU. Include third-party AI technology, AI features embedded in SaaS products, and AI services within larger systems. Without this inventory, no compliance process can start.
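A structured inventory record makes the later steps easier to track. The fields below are a hypothetical schema, not a format required by the Act, and `ExampleATS` is a made-up vendor name. The role and risk tier start empty and are filled in during steps 2 and 3, so a simple query shows what still needs classifying.

```python
from __future__ import annotations
from dataclasses import dataclass
from typing import Optional

@dataclass
class AISystemRecord:
    """Hypothetical inventory schema; adapt the fields to your own register."""
    name: str
    owner: str                       # accountable business owner
    origin: str                      # e.g. "in-house", "third-party", "embedded-saas"
    vendor: Optional[str] = None
    used_in_eu: bool = True
    role: Optional[str] = None       # completed in step 2
    risk_tier: Optional[str] = None  # completed in step 3

inventory = [
    AISystemRecord("support-chatbot", "customer-care", "in-house",
                   role="provider", risk_tier="limited"),
    AISystemRecord("ats-cv-ranking", "hr", "embedded-saas", vendor="ExampleATS"),
]

def unclassified(records: list[AISystemRecord]) -> list[str]:
    """EU-facing systems that still lack a role or a risk tier."""
    return [r.name for r in records
            if r.used_in_eu and (r.role is None or r.risk_tier is None)]

print(unclassified(inventory))  # ['ats-cv-ranking']
```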

2: Define Role

Understand Your Part in the AI Supply Chain. For each AI system identified, determine your organization's role: provider, deployer, importer, distributor, or authorized representative. Obligations differ substantially between these roles, and a company can hold several roles at the same time, whether for one AI system or across different ones.
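Because one organization can hold several roles, its duties are the union of the obligations attached to each. The mapping below abbreviates the roles table earlier in this article and is an illustrative simplification, not a complete list.

```python
from __future__ import annotations

# Abbreviated from the roles table; illustrative, not exhaustive.
ROLE_OBLIGATIONS = {
    "provider": {"conformity assessment", "technical documentation", "CE marking",
                 "EU database registration", "post-market monitoring", "incident reporting"},
    "deployer": {"follow instructions for use", "human oversight", "keep usage logs",
                 "FRIA where required"},
    "importer": {"verify provider compliance", "keep documentation"},
    "distributor": {"verify CE marking and documentation"},
}

def obligations_for(roles: set[str]) -> set[str]:
    """Union of duties for an organization holding several roles."""
    duties: set[str] = set()
    for role in roles:
        duties |= ROLE_OBLIGATIONS[role]
    return duties

# A company that builds an AI tool and also uses it internally is provider and deployer.
combined = obligations_for({"provider", "deployer"})
print(len(combined))  # 10
```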

3: Risk Classification

Triage Each AI System by Risk Tier. Apply the Act's risk classification to every system in your inventory: Prohibited, High-Risk (Annex I or III), Limited Risk, or Minimal Risk. Document the rationale for each classification so that authorities can review it, and classify conservatively in borderline cases.
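The triage logic, including the conservative rule for borderline cases, can be sketched as follows. The screening questions are hypothetical simplifications of Article 5, Article 6 with Annexes I and III, and Article 50; the sketch ignores the Article 6(3) derogation and other nuances, and it returns a rationale string so that each decision can be documented.

```python
from __future__ import annotations
from dataclasses import dataclass, field
from enum import IntEnum

class Tier(IntEnum):
    MINIMAL = 0
    LIMITED = 1
    HIGH = 2
    PROHIBITED = 3

@dataclass
class Screening:
    """Hypothetical yes/no screening answers for one system."""
    prohibited_practice: bool = False
    annex_i_safety_component: bool = False
    annex_iii_use_case: bool = False
    transparency_trigger: bool = False  # e.g. chatbot or synthetic content
    cannot_rule_out: list[Tier] = field(default_factory=list)  # stricter tiers still in doubt

def classify(s: Screening) -> tuple[Tier, str]:
    if s.prohibited_practice:
        tier, why = Tier.PROHIBITED, "matches an Article 5 practice"
    elif s.annex_i_safety_component or s.annex_iii_use_case:
        tier, why = Tier.HIGH, "Annex I product or Annex III use case"
    elif s.transparency_trigger:
        tier, why = Tier.LIMITED, "Article 50 transparency duties"
    else:
        tier, why = Tier.MINIMAL, "no specific obligations beyond AI literacy"
    # Conservative rule: if a stricter tier cannot be ruled out, classify up.
    if s.cannot_rule_out and max(s.cannot_rule_out) > tier:
        tier, why = max(s.cannot_rule_out), f"borderline; classified up from {tier.name}"
    return tier, why

# A chatbot that may end up influencing hiring decisions.
tier, rationale = classify(Screening(transparency_trigger=True, cannot_rule_out=[Tier.HIGH]))
print(tier.name, "-", rationale)
```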

4: AI Literacy Training

Build AI Literacy Across Your Organization. This requirement is already in effect. All employees and contractors who operate AI systems on the organization's behalf should have a sufficient level of AI literacy, meaning awareness of the capabilities, limitations, and risks of those systems. Document training completion.
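Tracking training completion can be as simple as comparing records against a refresh period. The 365-day refresh interval and the names below are assumptions for illustration; Article 4 does not set a retraining frequency.

```python
from __future__ import annotations
from datetime import date, timedelta
from typing import Optional

# Hypothetical records: last completed AI literacy training per operator (None = never).
training_log: dict[str, Optional[date]] = {
    "alice": date(2025, 3, 10),
    "bob": None,
    "chen": date(2023, 11, 2),
}

def needs_training(log: dict[str, Optional[date]], today: date,
                   refresh_days: int = 365) -> list[str]:
    """Operators with no recorded training, or training older than the assumed refresh period."""
    cutoff = today - timedelta(days=refresh_days)
    return sorted(name for name, done in log.items() if done is None or done < cutoff)

print(needs_training(training_log, date(2025, 9, 1)))  # ['bob', 'chen']
```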

5: Risk Management System

Build Your Risk Management System (High-Risk Only). Establish the documented, continuous risk management process that Article 9 requires. Assign ownership, define escalation procedures, and integrate the process into your existing enterprise risk management framework, if you have one.

6: Technical Documentation

Finalize Technical Documentation and Conformity Assessment. Prepare the technical file required by Article 11 and Annex IV. Complete the conformity assessment for every high-risk AI system, either through self-assessment or via a notified body, and affix the CE marking once it is passed.

7: EU AI Database

Register all Annex III high-risk AI systems in the EU database before placing them on the market or putting them into service. Registration is a legal precondition for bringing such systems to the EU market.
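A release gate that blocks deployment until every precondition is met is one way to operationalize steps 5 to 7. The `HighRiskRelease` fields are a hypothetical internal checklist for an Annex III system, not an official one.

```python
from __future__ import annotations
from dataclasses import dataclass, fields

@dataclass
class HighRiskRelease:
    """Hypothetical pre-deployment checklist for an Annex III high-risk system."""
    risk_management_in_place: bool
    technical_file_complete: bool
    conformity_assessment_passed: bool
    ce_marking_affixed: bool
    eu_database_registered: bool

def blockers(release: HighRiskRelease) -> list[str]:
    """Unmet preconditions; an empty list means the gate passes."""
    return [f.name for f in fields(release) if not getattr(release, f.name)]

release = HighRiskRelease(True, True, True, True, eu_database_registered=False)
print(blockers(release))  # ['eu_database_registered']
```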

8: Monitoring & Reporting

Establish Continuous Monitoring and Incident Reporting. Put post-market monitoring systems in place. Define thresholds and procedures for reporting serious incidents to the national market surveillance authority. Assign responsibility for tracking regulatory updates and maintaining the compliance program.
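Reporting procedures are easier to follow when deadlines are computed automatically. The sketch below assumes the outer time limits in Article 73 as we read them: 2 days for a widespread infringement or serious disruption of critical infrastructure, 10 days in the event of a death, and 15 days for other serious incidents, each counted from when the organization becomes aware of the incident. Reports are due immediately once a causal link is established; these are only the latest permissible dates. The incident-type keys are hypothetical labels.

```python
from datetime import date, timedelta

# Outer limits as we read Article 73, in days after becoming aware of the incident.
# Keys are hypothetical labels; reports are due immediately, these are the latest dates.
REPORTING_LIMIT_DAYS = {
    "widespread_or_critical_infrastructure": 2,
    "death": 10,
    "other_serious_incident": 15,
}

def report_by(aware_on: date, incident_type: str) -> date:
    """Latest permissible date for the serious incident report."""
    return aware_on + timedelta(days=REPORTING_LIMIT_DAYS[incident_type])

print(report_by(date(2026, 9, 1), "other_serious_incident"))  # 2026-09-16
```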

EU AI Act Governance Framework: Who Enforces It?

Understanding the EU AI Act's governance structure tells you which bodies your organization answers to and which have the authority to investigate and fine it.

- The AI Office is the main EU-level body: it oversees GPAI models, supports coherent implementation of the Act across member states, and assists with enforcement. It was established by a European Commission Decision of 24 January 2024, and its role under the Act has applied since 2 August 2025. It sits within the Commission's Directorate-General for Communications Networks, Content and Technology (DG CNECT).

- The AI Board is the formal coordinating body, with one representative from each EU member state. It advises and assists the Commission and the member states to ensure consistent application of the Act, coordinates national practices, and issues opinions on draft delegated acts and guidelines.

- The Scientific Panel is an independent expert body advising the AI Office and the AI Board, particularly on GPAI model capabilities, systemic risks, and technical standards.

- National competent authorities: each EU member state was required to designate at least one market surveillance authority and one notifying authority by 2 August 2025. These authorities investigate, order remediation, and impose administrative fines for violations within their jurisdictions. Arrangements vary by country: in Germany, the BSI is heavily involved in AI Act implementation, while in France the CNIL plays a role because of the overlap with the GDPR.

EU AI Act Fines: What Non-Compliance Really Costs

The EU AI Act penalty regime has applied since 2 August 2025. Maximum fines are set as a flat amount or a percentage of total worldwide annual turnover (not just EU turnover), whichever is higher, so a large company can face a fine far above the flat amount.
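The ceiling calculation can be written out directly. This sketch assumes whole-euro amounts and integer percentages, and it applies the Article 99 rule that the higher of the two caps applies, except for SMEs and start-ups, where the lower cap applies. It computes only the statutory maximum; actual fines are set case by case.

```python
def max_fine_eur(flat_cap_eur: int, turnover_pct: int, global_turnover_eur: int,
                 sme: bool = False) -> int:
    """Statutory ceiling under Article 99: the higher of the two caps,
    or the lower of them for SMEs and start-ups (whole euros)."""
    turnover_cap = global_turnover_eur * turnover_pct // 100
    return min(flat_cap_eur, turnover_cap) if sme else max(flat_cap_eur, turnover_cap)

# Prohibited practice (EUR 35 million / 7%) by a company with EUR 2 billion global turnover.
print(max_fine_eur(35_000_000, 7, 2_000_000_000))  # 140000000
# The same violation by an SME with EUR 20 million global turnover.
print(max_fine_eur(35_000_000, 7, 20_000_000, sme=True))  # 1400000
```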

| Violation Category | Maximum Flat Fine | Turnover Cap | Active From |
|---|---|---|---|
| Prohibited AI practices (Article 5) | €35 million | 7% of global annual turnover | 2 August 2025 |
| Non-compliance with high-risk obligations, GPAI rules, conformity assessment failures, and CE marking issues | €15 million | 3% of global annual turnover | 2 August 2025 (general); GPAI penalties from 2 August 2026 |
| Supplying incorrect, incomplete, or misleading information to authorities | €7.5 million | 1% of global annual turnover | 2 August 2025 |
| SME and start-up adjustments | Each cap is the lower of the flat amount and the turnover percentage | Authorities also apply the proportionality principle | Ongoing |

Fines for providers of GPAI models, which the Commission imposes, apply only from 2 August 2026, when the Commission's enforcement powers over the GPAI provisions take effect.

The framework also provides non-monetary enforcement measures, such as warnings, orders to restrict or suspend the use of AI systems, and withdrawals or recalls of products from the EU market.


EU AI Act Intersection with GDPR, NIS2, and the Cyber Resilience Act

The EU AI Act does not operate in isolation. Organizations must navigate it alongside four overlapping regulatory regimes, and the interconnected obligations call for coordinated compliance planning.

| Regulation | Key Overlap with EU AI Act |
|---|---|
| GDPR | AI training data using personal data; data subject rights applied to AI outputs; DPIA required where high-risk AI intersects high-risk personal data processing |
| NIS2 Directive | AI in energy, transport, healthcare, and financial market infrastructure must satisfy both NIS2 cybersecurity obligations and AI Act high-risk requirements simultaneously |
| Cyber Resilience Act (CRA) | AI products with digital elements are subject to CRA vulnerability reporting; coordination is required with AI Act serious incident reporting |
| Revised Product Liability Directive | High-risk AI causing damage may trigger AI Act penalties and, concurrently, product liability claims under the revised directive |

Digital Omnibus Package: What’s Shifting in the EU AI Act

On 19 November 2025, the European Commission presented its Digital Package on Simplification, commonly known as the Digital Omnibus Package. This legislative proposal contains amendments to the EU AI Act intended to reduce the compliance burden, especially for SMEs and start-ups, and to make the Act easier to implement in practice.

The main proposed amendments include:

- Broader regulatory sandbox access: more innovators would be able to test AI systems in regulatory sandboxes before fully meeting compliance requirements. Under the proposal, work on sandbox infrastructure would begin in 2028.

- Clarified interaction with sector-specific laws: resolving uncertainty about how the AI Act interacts with existing product safety, financial services, and healthcare legislation, with support from standards such as ISO/IEC 42001.

- Adjusted high-risk requirements: linking the application of some high-risk requirements to the availability of harmonized standards, which CEN and CENELEC are still developing and which we expect to be finalized during 2026.

- Targeted simplification for SMEs: lighter documentation and less demanding conformity assessment routes for companies below a specified size threshold.

The Digital Omnibus Package is currently under negotiation in the European Parliament and the Council of the EU. Organizations should not overhaul their compliance programs around the proposed amendments until they are actually adopted, although the proposals give SMEs useful direction on how to structure their compliance architecture.

Qualysec Expert Insights: The Reality Check

In our practice, we perform AI governance readiness and security assessments for financial services firms, healthcare technology vendors, and SaaS platforms operating in the EU. Here is what we consistently see.

Audit Anecdote:

In a recent EU AI Act readiness audit of a mid-market HR technology provider, we found that an applicant-screening algorithm embedded in a third-party ATS (Applicant Tracking System) met the Annex III definition of high-risk AI used in employment. The client's compliance team had rated all of its AI as low risk because it had not accounted for vendor-embedded AI. Reclassifying that single system triggered conformity assessment, documentation, and fundamental rights impact assessment (FRIA) requirements, which took a six-month remediation program to address.

Pro Tip: When procuring SaaS and other software, ask vendors for a formal AI disclosure as part of due diligence. In particular, ask: Does this product use AI systems that would be classified as high-risk under Annex III of the EU AI Act? Get the answer in writing. A vendor that cannot or will not answer is a red flag; it most likely has not performed its own classification exercise.

Common Pitfall:

The most common mistake is treating the EU AI Act as a job for the legal team alone. Risk classification requires a technical understanding of how the AI system actually works. Documentation needs engineering input, human oversight design needs product management input, and fundamental rights assessments need cross-functional cooperation. Organizations that silo this work in legal or compliance consistently overlook high-risk systems and produce insufficient documentation. Form a cross-functional team from the very beginning.

Conclusion

The EU AI Act is no longer a plan on paper. It is an operational regulatory framework with prohibitions already in force, a functioning enforcement structure, and a major compliance deadline on 2 August 2026. For organizations that develop, deploy, or distribute AI in the European Union, the window for structured preparation is still open, but it is closing fast.

Under the Act's risk-based model, compliance effort is proportional to the stakes of your AI applications. Minimal-risk AI requires AI literacy and awareness. High-risk AI requires a full governance program: risk management, data governance, technical documentation, conformity assessment, CE marking, and EU database registration, along with AI application security measures. GPAI model providers must maintain documentation and meet transparency obligations, and providers of models with systemic risk must also carry out adversarial testing and report serious incidents.

The organizations best positioned on 2 August 2026 will be those that have already completed their AI inventory, set up cross-functional governance, and started their documentation and risk management work. If your organization has not started yet, the time to begin is now, not in Q4 2026.


FAQ Section

Q1: What is the EU AI Act?

The EU AI Act is the world's first comprehensive law on AI. It aims to ensure that AI systems used in the EU are safe, transparent, and subject to human oversight, and it applies stricter rules to systems with greater potential to cause harm.

Q2: Who must comply with the EU AI regulation?

Any organization that places AI systems on the EU market or uses them in the EU, including providers, deployers, importers, and distributors, must comply, whether it is established in the EU or in a third country such as the US or the UK.

Q3: What are the fines for EU AI Act non-compliance?

Fines are tiered: up to EUR 35M or 7 percent of global annual turnover for prohibited practices, up to EUR 15M or 3 percent for non-compliance with other requirements, and up to EUR 7.5M or 1 percent for supplying incorrect information to authorities.

Q4: When do the EU AI Act requirements become mandatory?

The Act is already in force, but most requirements, including those for high-risk systems, apply from 2 August 2026. The rules for GPAI models became mandatory on 2 August 2025.

Q5: What are the four risk levels of the EU AI Act?

The EU AI Act sorts AI into four risk levels according to the harm it may cause:

- Unacceptable: Prohibited systems (e.g., social scoring).

- High: Subject to strict requirements (e.g., recruitment).

- Limited: Subject to transparency obligations (e.g., chatbots).

- Minimal: No specific obligations beyond AI literacy.

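Q6: What security testing does the EU AI Act require?

The Act does not prescribe a fixed test suite, but it requires high-risk AI systems to achieve an appropriate level of accuracy, robustness, and cybersecurity throughout their lifecycle (Article 15) and to be tested before they are placed on the market (Article 9). Article 15 specifically calls for measures to prevent, detect, and respond to AI-specific attacks such as data poisoning, model poisoning, adversarial examples (model evasion), and confidentiality attacks; in practice, this also covers threats such as prompt injection. Adversarial testing (red-teaming) is explicitly required for general-purpose AI models with systemic risk.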
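As a rough illustration of one robustness check, the sketch below measures how often small random perturbations flip a model's decision, a crude proxy for susceptibility to evasion. The toy linear model, the perturbation size, and the trial count are assumptions; the Act does not prescribe this test, and real adversarial testing uses targeted attacks rather than random noise.

```python
from __future__ import annotations
import random
from typing import Callable, Sequence

def toy_model(features: Sequence[float]) -> int:
    """Stand-in linear classifier: approve (1) if the weighted score reaches 0.5."""
    weights = (0.6, 0.3, 0.1)
    return int(sum(w * x for w, x in zip(weights, features)) >= 0.5)

def flip_rate(model: Callable[[Sequence[float]], int], inputs: list[list[float]],
              epsilon: float = 0.05, trials: int = 20, seed: int = 0) -> float:
    """Share of inputs whose prediction changes under at least one small random
    perturbation: a crude proxy for susceptibility to evasion."""
    rng = random.Random(seed)
    flipped = 0
    for x in inputs:
        baseline = model(x)
        for _ in range(trials):
            perturbed = [v + rng.uniform(-epsilon, epsilon) for v in x]
            if model(perturbed) != baseline:
                flipped += 1
                break
    return flipped / len(inputs)

# Inputs far from the decision boundary should be stable.
print(flip_rate(toy_model, [[0.9, 0.9, 0.9], [0.0, 0.0, 0.0]]))  # 0.0
```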
