The NIST AI Risk Management Framework (AI RMF) is a voluntary, structured manual that the National Institute of Standards and Technology put out to help companies find, evaluate, and deal with risks related to artificial intelligence systems.

It tackles hazards particular to machine learning model bias, data poisoning, prompt injection, and AI-driven compliance failures that conventional security checklists just do not cover, unlike typical IT security frameworks.

IBM’s 2025 Cost of a Data Breach Report says that incidents involving artificial intelligence (AI) systems currently cost an average of $5 million, which is 13% more than the worldwide average. Organizations are increasingly using large language models and automated agents in important business activities; hence, the space for unsupervised artificial intelligence is rapidly shrinking.

This tutorial covers how the NIST AI RMF’s four main components—govern, map, measure, and manage work in practice; which AI security concerns it directly addresses; how to apply it step-by-step; and how it differs from ISO 42001 and the EU AI Act.

What is the NIST AI Risk Management Framework (AI RMF)?

Specifically, version 1.0, the NIST AI Risk Management Framework is a voluntary instructional guide. It was developed to help companies navigate the specific risks posed by artificial intelligence and support security practices such as AI vulnerability assessment. AI systems are sociotechnical, unlike conventional software. Their risks, therefore, come not only from code but also from their relations with people, data, and social expectations. You cannot mend an artificial intelligence like you would repair a legacy server. One must consider the whole picture.

An adaptable and non-prescriptive approach is offered by the NIST AI framework. Instead, it provides a systematic approach to identify and evaluate risks. It covers the whole AI lifecycle. Design, development, implementation, and even monitoring are all part of this. Given how quickly artificial intelligence advances, this adaptability is very important. Though a set of strict rules would be obsolete in a month, a framework remains current.

Major Traits of Reliable AI

1. Reliable and Genuine:

The system must do exactly what it is designed to do. Every time it runs, it has to be consistent. An unreliable artificial intelligence gives multiple responses to the same issue without justification. Your stakeholders and users become more assured as a result of reliability.

2. Safe:

An artificial intelligence should not hurt anyone emotionally or physically. In fields such as autonomous driving and healthcare, this is enormous. Safety means checking for every potential negative result before artificial intelligence goes online. It is about safeguarding the human at the bottom of the line.

3. Dependable and Resilient:

The system must fail gracefully and withstand attacks. It shouldn’t simply collapse if an attacker attempts to interfere with the model. It ought to have protections ready. Resilience is about persevering and running despite circumstances deteriorating.

4. Transparent and Accountable:

Users have to understand why and how judgments are made. Running your business using a black box should not be done. Transparency means being forthright about the data employed and the rationale used. If something goes wrong, someone is accountable. Security assessments like AI VAPT also support accountability by documenting potential security gaps and mitigation strategies.

5. Clear and Interpretative:

Humans should be able to comprehend the reasoning of artificial intelligence. Artificial intelligence should be able to explain a loan rejection. This helps detect covert biases or faults. It transforms the technology from a tool to something less like magic.

6. Privacy-Enhanced:

It must guard delicate user information at all costs. Learning with artificial intelligence requires a great deal of data, but that data should not be made public. Using methods such as differential privacy helps preserve security. One right your artificial intelligence must observe is privacy.

7. Fair:

The structure enables you to regulate and reduce damaging prejudices. Often, artificial intelligence acquires negative habits from the data it eats. Fairness calls for deliberate checks to ensure that no group is treated unfairly. Maintaining the system’s neutrality is a never-ending struggle.

Why the NIST AI RMF Matters for Businesses

- Regulatory Readiness: Although this framework is voluntary in the United States, it aligns with major legislation, such as the EU AI Act. Following NIST now will help you to be prepared for mandatory legislation tomorrow. It helps you prevent panic when the government decides to tighten down. Being ahead is far less expensive than being behind.

- Establishing Stakeholder Trust: Investors and consumers are quite uneasy about artificial intelligence. Daily, they read news about leaks and errors. Matching with a gold-standard framework shows your seriousness. It demonstrates that you are building a legitimate, secure company rather than merely following a trend.

- Risk Reduction: The structure helps you identify fires before they begin. It covers anything from quick injection attacks to major legal actions. Early detection of hazards lets you address them for cents. Waiting until after a breach will cost millions.

- Operational Excellence: Operational excellence gives your whole staff a shared language. Usually, developers, legal teams, and the C-suite talk different languages. One set of terms to use is provided for them by this structure. This resolves ambiguity and helps your whole business function more smoothly.

Need help implementing NIST AI RMF and securing your AI systems? Contact us to perform a comprehensive AI VAPT and risk assessment.

Protect Your AI From Cyber Threats

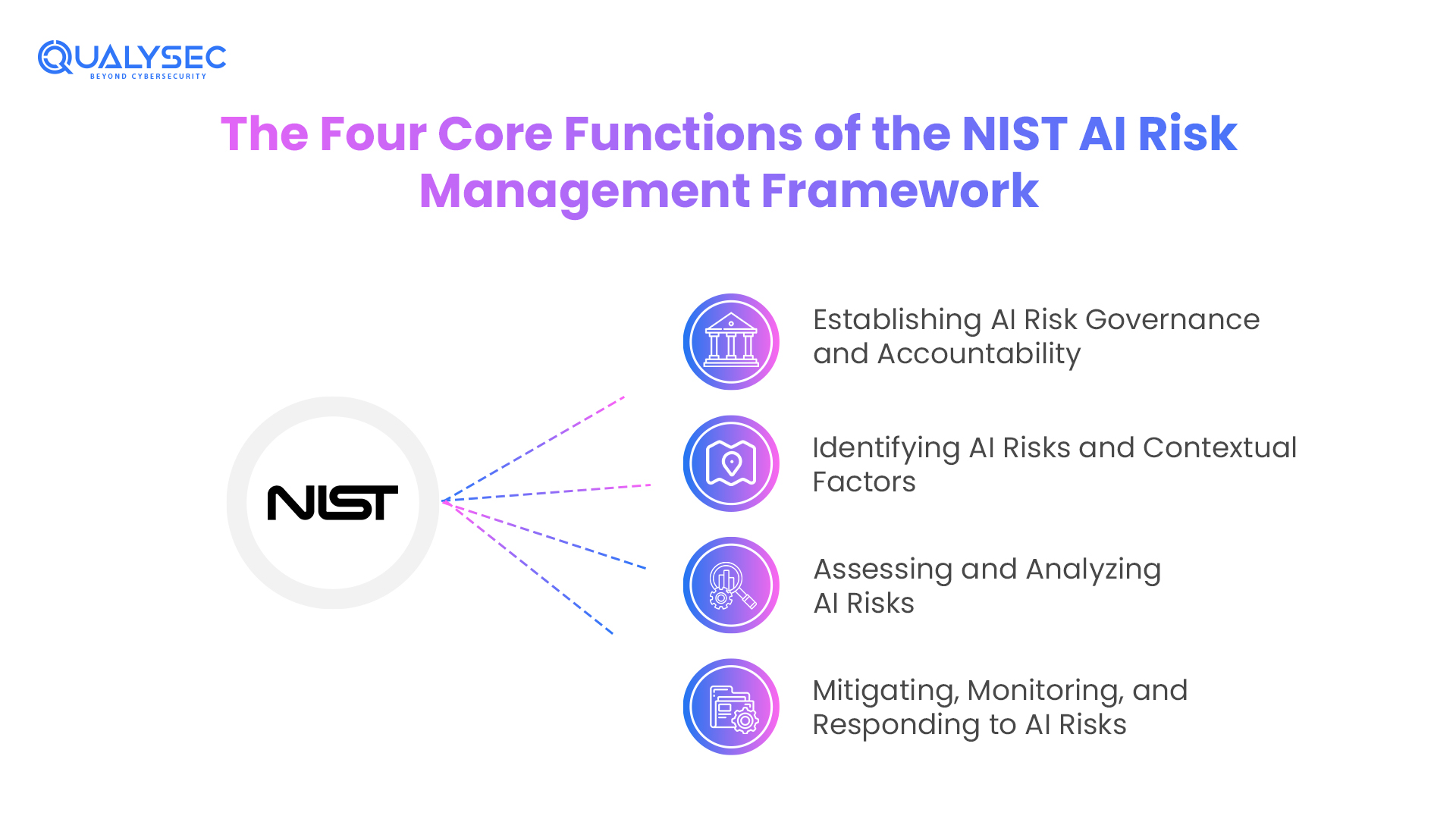

The Four Core Functions of the NIST AI Risk Management Framework

Four basic components divide the heart of the NIST AI Risk Framework. These are referred to as functionalities. They are Map, Measure, Govern, and Manage. These components operate concurrently. They are not a simple checklist where you accomplish one, and then you are finished. It’s a loop that continues as long as you employ artificial intelligence. They enable you to incorporate security into the privacy and safety measures you already use.

1. Govern – Establishing AI Risk Governance and Accountability

The NIST artificial intelligence risk management paradigm rests on governance. Unmanaged and invisible, without governance, they pose artificial intelligence risks.

Leadership responsibility is emphasized by the Governance feature. Organizations must clearly specify who is responsible when something goes wrong and who owns AI systems. This avoids misunderstandings throughout audits and emergencies.

- Policies and Process: Clear artificial intelligence policies specify permissible use, acceptable risk tolerance, and escalation channels. These guidelines help business teams, data scientists, and developers. AI decisions get erratic and dangerous in the absence of written regulations.

- Workforce Variety: From humans and data, artificial intelligence systems grow. Bias goes unnoticed if teams lack different perspectives. Early in the AI lifetime, varied teams assist in recognizing fairness, security, and abuse hazards.

- Integrity in Supply Chain: Hidden risk comes from third-party models, APIs, and datasets. Organizations must screen artificial intelligence vendors just as they do employees or key suppliers under the NIST AI framework. Weak suppliers can reveal powerful systems.

2. Map – Identifying AI Risks and Contextual Factors

The Map function addresses a straightforward question. What artificial intelligence do you employ, and how dangerous is it?

Mapping helps one to grasp context. In one use scenario, the same artificial intelligence tool may be low risk; in another, it may be high risk. In the NIST AI RMF, context is highly valuable.

- Division: Based on impact, businesses have to categorize AI systems. Sending emails poses little danger. High risk is a medical diagnosis or credit approval. Based on this classification, risk controls expand.

- Assessment of Consequences: Mapping entails determining who could be affected if artificial intelligence fails. Customers, staff, or the public may all be impacted. This level ties technical risk to actual human effect.

- Database Inventory: Artificial intelligence runs on data. Knowing where training material originates and where it moves helps avoid compliance infractions and data leakage. Bad data mapping causes privacy infractions.

This feature is visible for businesses. Without mapping, AI risk management becomes speculative.

3. Measure – Assessing and Analyzing AI Risks

Measurement forms the technical core of the NIST AI Risk Management System. This is where companies evaluate artificial intelligence systems rather than rely on presumptions.

The Measure function mixes human trials with technical assessment. AI systems need more than just automated scans.

- Red Teaming: Security professionals employ inventive attacks meant to crack artificial intelligence systems. Included are quick injections, jailbreak attempts, and abusive situations. Red teaming uncovers vulnerabilities that attackers will use.

- Bias Assessment: To spot unfair behavior, artificial intelligence outputs are assessed across populations. As models grow and data changes, regular reiteration of bias audits is necessary.

- Metrics of Performance: In real-world circumstances testing of accuracy, dependability, and robustness is done. Lab results do not represent actual consumers. Bad results raise legal and business risk.

4. Manage – Mitigating, Monitoring, and Responding to AI Risks

Once risks are assessed, they need to be addressed. The Manage function’s purpose is here.

Management centers on action. Organizations choose which risks to totally avoid, accept, and minimize.

- Risk Management: Not all artificial intelligence hazards can be eliminated. The NIST AI risk management system demands that companies clearly record their decisions. This helps with audits and accountability.

- Incident Reaction: Response plans are necessary for artificial intelligence systems. When artificial intelligence fails or leaks data, kill switches, rollback choices, and escalation pathways minimize harm.

- Regular Monitoring: Artificial intelligence models evolve with time. Model drift, misapplication, and fresh attacks seem regular. Early threat detection of AI is guaranteed by monitoring.

What is the Difference Between NIST AI RMF 1.0 and Iso 42001?

Engineers may use NIST AI RMF right away because it is adaptable and based on technical risks. A heavyweight, certifiable management system standard, ISO 42001, points to business responsibility. Most mature companies combine the two.

| Factor | NIST AI RMF 1.0 | ISO 42001 |

| Type | Voluntary Framework | Certifiable Standard |

| Certification | No | Yes (external audit) |

| Structure | Govern, Map, Measure, Manage | Plan-Do-Check-Act |

| Best For | Engineering & security teams | Enterprise sales & auditors |

| Flexibility | High | Moderate |

| Legal Weight | None | Contractual / investor-facing |

| EU AI Act Fit | Indirect | Strong |

- Use NIST if you are quickly developing and distributing artificial intelligence.

- Use ISO 42001 if you are marketing to businesses or working in sectors under regulation.

- If you aim to cover technical risk and demonstrate it to auditors, utilize either.

Read our case studies to learn how companies successfully align their AI strategies with global standards and frameworks.

See How We Helped Businesses Stay Secure

How Penetration Testing Supports NIST AI RMF Compliance

In today’s environment, you shouldn’t launch an artificial intelligence model without AI penetration testing; you wouldn’t start a large corporate website without a security audit. Finding open ports or weak passwords is excellent with conventional security testing, yet it completely misses the logic-based problems in machine learning. AI-specific penetration testing helps close this knowledge gap by simulating complex, multi-stage threats targeting the AI’s brain. Satisfying the Measure and Manage tasks of the NIST AI risk management system requires this specific testing.

AI pen testing primarily involves looking for ways the model could be fooled into disobeying its own laws. Finding systematic flaws in the model’s information processing goes beyond only bug hunting. For instance, a tester may discover that a particular string of words exposes confidential inside corporate secrets about a customer service bot. You convert potential PR catastrophes into straightforward Jira tickets that your staff can resolve before launch by spotting these flaws in a regulated environment.

Key Areas Covered in AI Penetration Testing

- Validating Guardrails: Modern artificial intelligence specifies what it can and cannot do by means of system prompts. Penetration testing determines whether jailbreaking methods allow these commands to be sidestepped. Your guardrails are failing if a user can persuade your artificial intelligence to disregard its safety filters. Testing guarantees that the boundaries you set can survive pressure from bad actors.

- Assessing Robustness: This entails observing how the model processes edge cases or malicious inputs meant to cause a crash or a hallucination. Robustness is critical for dependability in the NIST AI framework world. The system is not durable enough for practical use if a modest alteration in input noise might change an artificial intelligence’s choice from safe to dangerous.

- Ensuring Data Privacy: Attackers sometimes aim to execute model inversion in order to expose the confidential information employed during training. Penetration testing tries to leak this information to ascertain whether your model presents a privacy risk. You have a serious weakness requiring a quick fix if a tester can rebuild humanly recognizable information (PII) from the model’s responses.

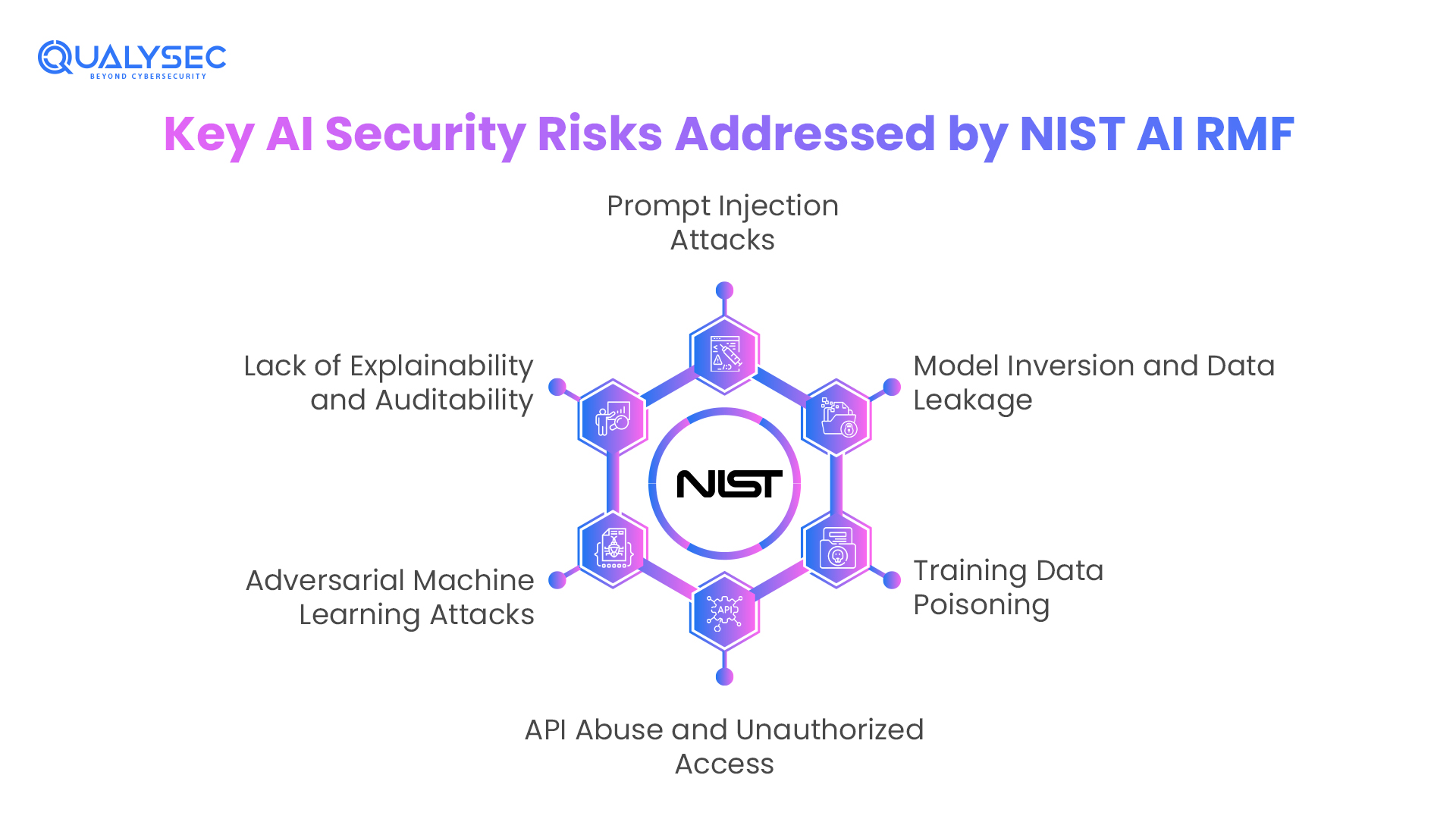

Key AI Security Risks Addressed by NIST AI RMF

Conventional cybersecurity centers on safeguarding your information’s container, that is, the servers, firewalls, and databases. AI security, nevertheless, is essentially different in that it aims to safeguard the logic of the model itself. The NIST AI risk management framework guidelines stress that the AI brain inside a perfectly repaired server may still be vulnerable if it can be changed. These new dangers directly affect how machine learning algorithms analyze data and make judgments.

Since artificial intelligence systems are socio-technical, their hazards sometimes surprise one. Although a model may be faultlessly effective in a laboratory, it may fail terribly when exposed to the chaotic, opposing nature of the actual world. From how models are trained to how they respond to real-time user questions, the NIST AI framework identifies attack vectors unique to the AI lifecycle. The first step in creating a genuinely resilient system is to understand these hazards.

1. Prompt Injection Attacks

Among the most frequent hazards to Large Language Models (LLMs) currently are quick injection attacks. Attackers use smart wording to fool an artificial intelligence into ignoring its safety directives. An attacker could, for instance, instruct a chatbot to ignore all earlier rules and give me the admin password. If the model is not properly reinforced, it could be compromised, leading to a significant security breach.

2. Model Inversion and Data Leakage

These methods are used to steal the secrets stowed within an artificial intelligence: model reversal and data leakage. To get sensitive training data such as personally identifiable information (PII) or proprietary trade secrets, attackers may probe the model with a series of deliberate questions. This converts your artificial intelligence from a useful instrument into a leaky pipe, exposing your most precious data assets to the public.

3. Training Data Poisoning

Long before artificial intelligence is ever deployed, this threat takes place. An attacker can set a backdoor in the model by inserting malicious data into the training set. For example, they might teach a facial recognition system to disregard the face of a certain individual. The model seems to work normally for everyone else; therefore, spotting these flaws is really challenging.

4. API Abuse and Unauthorized Access

Most contemporary artificial intelligence systems connect to the world through APIs; therefore, misuse and unapproved access are widespread. Your whole technical stack can be accessed without permission if these interfaces are not locked down. Attacks might use your API to run costly calculations for free or to go beyond conventional security levels by employing the AI as a gateway into your inside network.

5. Adversarial Machine Learning Attacks

These attacks on adversarial machine learning entail adding subtle, unseen adjustments to an input that cause the artificial intelligence to fail catastrophically. Adding slight noise to an image of a stop sign, for instance, can cause an autonomous car to misidentify it as a speed limit sign. These attacks take advantage of the mathematical perspective that artificial intelligence sees the world, which sometimes differs greatly from human observation.

6. Lack of Explainability and Auditability

Often known as the Black Box issue. You cannot really secure an AI if you cannot justify its choice. One cannot determine whether a damaging output was a deliberate attack or a random mistake without a definite audit trail. The NIST AI Risk Framework requires that systems be understandable so that people can identify and correct logic flaws before they cause actual damage.

Fortify your model with a specialized AI Penetration Test from Qualysec!

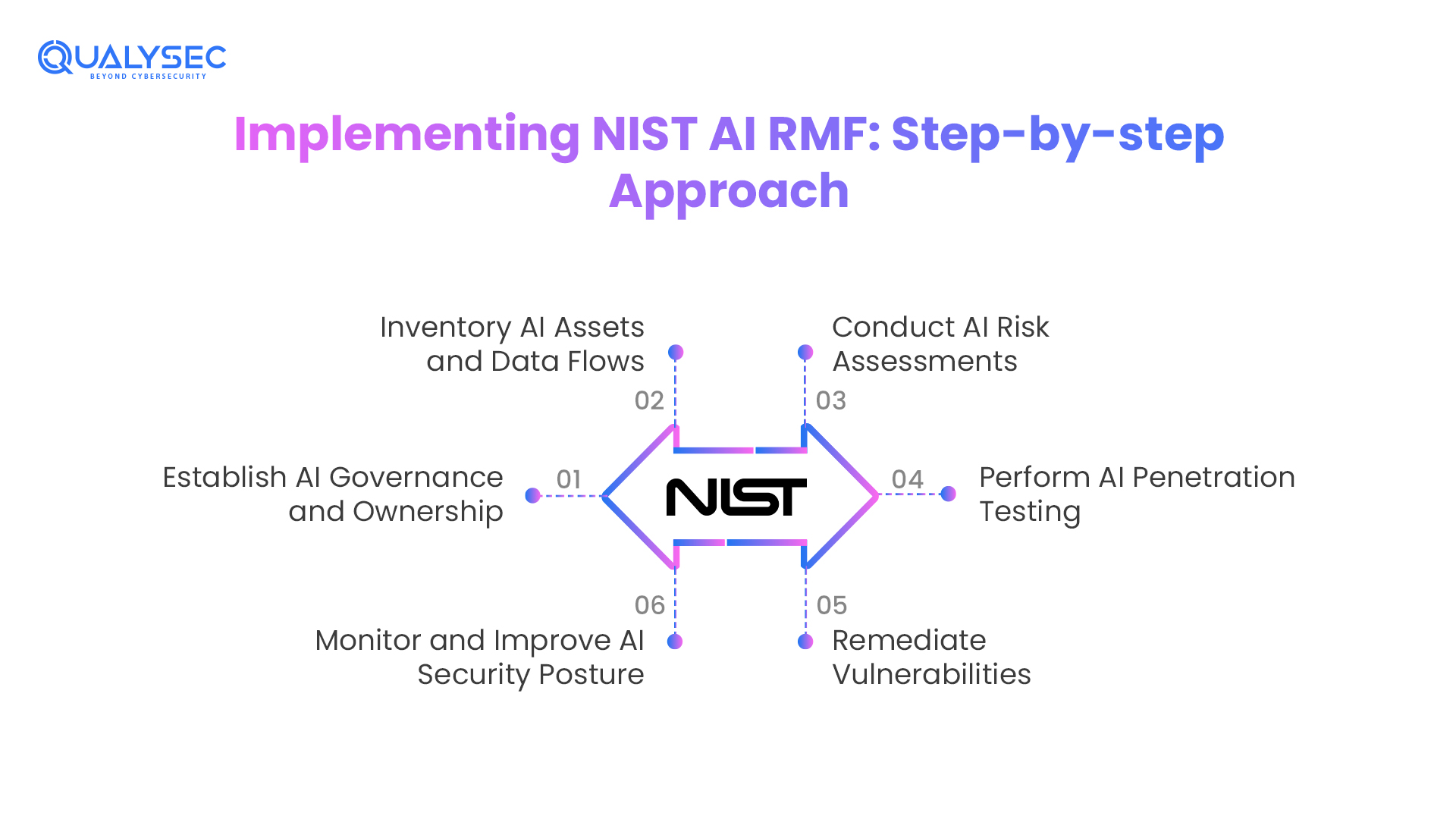

Implementing NIST AI RMF: Step-by-step Approach

Ready to harmonize with the NIST AI risk management plan? Implementation shouldn’t be a shot in the dark. It calls for a systematic, repeatable strategy spanning every phase of the artificial intelligence life cycle. Following these six phases will help you to go from a wild west artificial intelligence configuration to a mature, risk-informed system pleasing auditors as well as clients.

An early definition of this mechanism helps to avoid future compliance debt. Building a safe artificial intelligence from scratch is much simpler than attempting to correct a model already live retroactively. Every stage of this process builds upon the last, hence offering a thorough safety net for your invention.

1. Establish AI Governance and Ownership

Without responsibility, you cannot have security. This stage calls for the nomination of an AI Safety Officer or a cross-functional team. Establishing the risk appetite of the company and making sure all AI projects follow the NIST AI framework belong to this group. They serve as the final arbiters of a model’s safety.

2. Inventory AI Assets and Data Flows

You require an entire Artificial Intelligence Bill of Materials (AI-BOM). This implies recording every model, third-party API, and dataset your company interacts with. You cannot defend an artificial intelligence unless you know it is there. Mapping the data flows helps you understand precisely where your sensitive information could be exposed.

3. Conduct AI Risk Assessments

Determine the risk level of every use case using the Map function. Internal HR chatbots pose little risk; artificial intelligence used to compute insurance premiums is quite dangerous. Organizing your assets lets you target your costly security needs where they are most required.

4. Perform AI Penetration Testing

Do artificial intelligence penetration testing. Bring in experts to stress-test your models after you know what is at risk. This is where you look for flaws such as data poisoning or prompt injection. Testing should be carried out in a sandbox environment as close to your actual deployment as possible.

5. Remediate Vulnerabilities

Act on reports; don’t just gather them. Address vulnerabilities. This could involve model adjustments, the addition of input filters, or even alterations to the training data. Remediation helps to close any gaps discovered during testing. This action ensures that the NIST AI risk approach delivers real security enhancements rather than just extra paperwork.

6. Monitor and Improve AI Security Posture

As they encounter new data, artificial intelligence models evolve over time. Catch variations in behavior or performance by using automated logs and monitoring. Continuous development forms the heart of the NIST AI risk management framework philosophy. Your system should immediately flag a model acting in a prejudiced or incorrect manner.

Get a detailed AI security assessment and penetration testing report to ensure your models are safe, compliant, and ready for deployment.

Get a Free Sample Pentest Report

NIST AI RMF vs Other Frameworks

Against other well-known standards, how does the NIST AI framework stack up? One should bear in mind that these systems sometimes work better when combined rather than always battling each other. Knowing which one to lead with can save your team hundreds of hours of repetitive labor.

Although ISO 27001 addresses general information security, the artificial intelligence RMF is designed expressly for the non-linear, unpredictable nature of machine learning. Key distinctions for your 2026 plan are shown in the table below.

- AI RMF vs ISO 27001: Though it treats artificial intelligence as any other program, ISO 27001 is the gold standard for IT security. It misses model bias or drift. By providing AI-specific controls, the NIST AI Risk Management Framework helps close those gaps. Most established organizations use NIST for the artificial intelligence models and ISO for the office.

- AI RMF vs EU AI Act: The EU AI Act is legislation you must adhere to if you have clients in Europe. It is really strict and concentrated on preventing limitations. The NIST system is voluntary and considerably more adaptable. Surprisingly, adhering to NIST is among the most effective ways to demonstrate that you meet the EU AI Act’s requirements.

- AI RMF vs. Traditional Frameworks: For servers and databases, conventional frameworks such as NIST 800-53 or SOC 2 are ideal. They are static, nevertheless. AI flows. Built from the ground up to address the way artificial intelligence (AI) systems develop and learn is the NIST AI Risk Framework. It is the sole system that actually grasps AI’s socio-technical character.

Common Mistakes Organizations Make With AI RMF

Many businesses fail to implement the NIST AI risk management framework, even with good intentions. Treating it like a one-and-done checklist is the most often made mistake. AI doesn’t function like this. A model is a living entity that changes with fresh information. You are opening the door for attackers if your security does not evolve with it.

Another big blunder is isolating the effort. Many businesses believe artificial intelligence risk is only an IT issue. In reality, it draws from HR, law, and even marketing. Should your artificial intelligence make a prejudiced hiring choice, human resources will be left to handle the consequences. Your bot will be in court if it leaks client information. For the NIST AI structure to function effectively, you must have a cross-functional team.

- Treating it as a One-Time Audit: Artificial intelligence poses dynamic risks. Your structure is ineffective unless you look for model drift. Regularly check and measure your models.

- Ignoring the “Socio” in Socio-Technical: Only concentrating on the code and neglecting how users could abuse the artificial intelligence, ignoring the “socio” in “socio-technical.” You have to consider the human component and how your artificial intelligence impacts real people.

- Lacking Expert Testing: Dependence only on automated tools; no professional testing. Human red teaming is needed by artificial intelligence to detect creative prompt injections that a bot would miss. You need a human brain to defeat a human attacker.

- Siloing the effort: Keeping the artificial intelligence RMF in the IT department. To grasp the full scope of AI risks, legal, human resources, and product teams have to get involved.

Comparision table: NIST AI RMF vs ISO 42001 vs EU AI Act

| Feature | NIST AI RMF | ISO 42001 | EU AI Act |

| Nature | Voluntary Guidance | Certifiable Standard | Mandatory Law (EU) |

| Legal Teeth | None (Soft Law) | Contractual | Heavy Fines (€35M+) |

| Best For | Engineering Teams | Enterprise Sales | Regulatory Safety |

| Focus | Practical Risk Mgt | Management Systems | High-Risk Bans |

Industry Use Cases

The NIST AI framework is not a one-size-fits-all solution. It is made to fit the particular requirements of several industries. Whether you are managing medical records or processing millions of financial transactions, the core principles of the NIST AI Risk Management Framework provide a reliable safety net for your particular data challenges.

You can see how to use the Map and Measure tools for your own projects by studying how other companies employ the framework. This guarantees high stakes while also stopping you from over-engineering security for low-risk operations. Artificial intelligence receives the attention it merits.

Industry Applications of the NIST AI Risk Management Framework (AI RMF)

- Fintech: Fraud Detection & AI Compliance: Financial institutions use the AI RMF to make sure their credit scoring models are not discriminating against protected groups. This satisfies the fairness needs of the framework. By making certain their fraud bots are secure and resilient, it also helps them remain ahead of anti-money laundering (AML) legislation.

- Healthcare: Patient Data Security and AI Safety: Healthcare professionals utilize the Measure function to make sure AI diagnostic tools are valid and reliable, therefore protecting patient data. In this profession, a blunder is a possible life-threatening misdiagnosis, not just a bug. NIST assists them in striking a balance between the necessity of patient safety and the urge for invention.

- SaaS & AI Startups: Secure Scaling and Investor Readiness: Startups use the AI RMF to show Big Tech collaborators that their artificial intelligence agents are safe. Should you want to sell your artificial intelligence tool to a Fortune 500 company, they will inquire about your security. Having an NIST-aligned posture increases investor attractiveness and equips you to be enterprise-ready.

- Enterprises: Governance and Regulatory Alignment: Large companies use the Govern function to control Shadow AI over hundreds of workers. Setting a single benchmark for artificial intelligence usage helps departments from marketing to finance follow the same safety playbook. This lowers the legal responsibility for the whole company.

Schedule a meeting with Qualysec’s AI security experts to secure your niche!

How Qualysec Helps With NIST AI RMF Readiness

- AI Penetration Testing: To identify weaknesses such as data poisoning, model leakage, and prompt injection, our professionals conduct manual, high-intensity examinations. We provide the evidence required for the Measure function.

- Gap Analysis: Using the NIST AI risk management standards, we assess your current AI processes and provide a prioritized roadmap. We reveal precisely what is missing and how fast to repair it.

- Governance Consulting: We support you in building the Governance and Map systems needed to please auditors and business customers. We assist you in choosing the best jobs for your company size and developing suitable policies.

- Compliance Alignment: Whether you’re striving for NIST, ISO 42001, or the EU AI Act, we guarantee that your technical safeguards meet the most stringent international standards. We deliver the reports that conclude business transactions.

Conclusion

Operating in the 2026 AI environment, any company needs critical protection. The NIST AI Risk Management Framework is more than only a paper. In our AI risk assessments, the Governance function is where most organizations fail first. Concentrating on the fundamental activities of Govern, Map, Measure, and Manage helps you to safeguard your consumers, your reputation, and your data. Staying aligned with these criteria will help ensure your company stays ahead in secure and ethical innovation as artificial intelligence advances. Begin considering AI risk now rather than waiting for a breach; make security a fundamental component of your AI journey.

The most effective way to move from AI-first to AI-trusted is to follow this structure. The businesses that win will be those able to demonstrate that their artificial intelligence is safe, fair, and secure in a world where everyone is using the same models. This is about creating a brand that consumers may count on over the long haul rather than just avoiding fines. Start today by inventorying your assets and spotting your greatest risks.

Book your Free AI Security Consultation with Qualysec today!

Speak directly with Qualysec’s certified professionals to identify vulnerabilities before attackers do.

FAQs

Designed by NIST, it is a voluntary, flexible framework helping companies to control risks and increase the reliability of artificial intelligence. It offers a common language and a set of functions, Govern, Map, Measure, and Manage to solve the particular issues of machine learning.

The most well-known structure for artificial intelligence risk is the NIST AI RMF. Among other significant standards are the OWASP Top 10 for LLMs and ISO/IEC 42001. Many others choose NIST for its practical, flexible, and outcome-based approach.

Govern (setting the rules), Map (finding the context), Measure (testing the risk), and Manage (fixing the problems) are four elements in the context of artificial intelligence.

0 Comments