Key Takeaways

- Chatbot security risks are about understanding how these systems interact with data, users, and other tools.

- Most risks don’t come from one place. They build across inputs, integrations, and everyday usage.

- Prompt injection and data leakage are some of the easiest ways things go wrong, and they don’t always look obvious.

- Integrations like APIs, plugins, and external data sources tend to open up more exposure than expected.

- Security here is not a one-time setup. It needs regular testing, monitoring, and updates as the system changes.

Inroduction

Sometimes, a chatbot gives an answer it shouldn’t. Not obviously wrong. Just a bit too much.Maybe it pulls in internal data. Maybe it responds to something it should have ignored. Nothing crashes, no alerts go off, and it all looks perfectly fine in the moment.

That’s where things get tricky. These systems now tie into your tools, your data and your day-to-day operations. But the risks they bring along are easy to miss until you look back and realise what already happened.

Teams keep improving performance and user experience, while security concerns stay in the background. Meanwhile, the market is already reacting. Chatbot security risks spending is expected to grow from USD 207 million in 2024 to nearly USD 794.75 million by 2034.

So before you rely on it a little more, ask yourself this. Would you notice if it crossed the line?

What Is Chatbot Security and Why It’s Different in 2026

Chatbot security is about protecting what runs behind the conversation. The model, the data it pulls, how users interact with it, and the systems it connects to.These systems don’t work like regular applications. You don’t always receive the same output for the same input. Responses change based on context, and past interactions can influence what comes next. That’s where a lot of chatbot cybersecurity issues start to show up.

The risk landscape has evolved. Some are new, like prompt injection or ways to influence how the model responds. Others are familiar, like weak APIs or poor access control. Most traditional tools were not built for this kind of behaviour. They catch obvious problems, but they miss what happens inside the conversation.

As more companies in fintech, SaaS, and healthcare rely on chatbots, the impact of getting this wrong is getting harder to ignore.

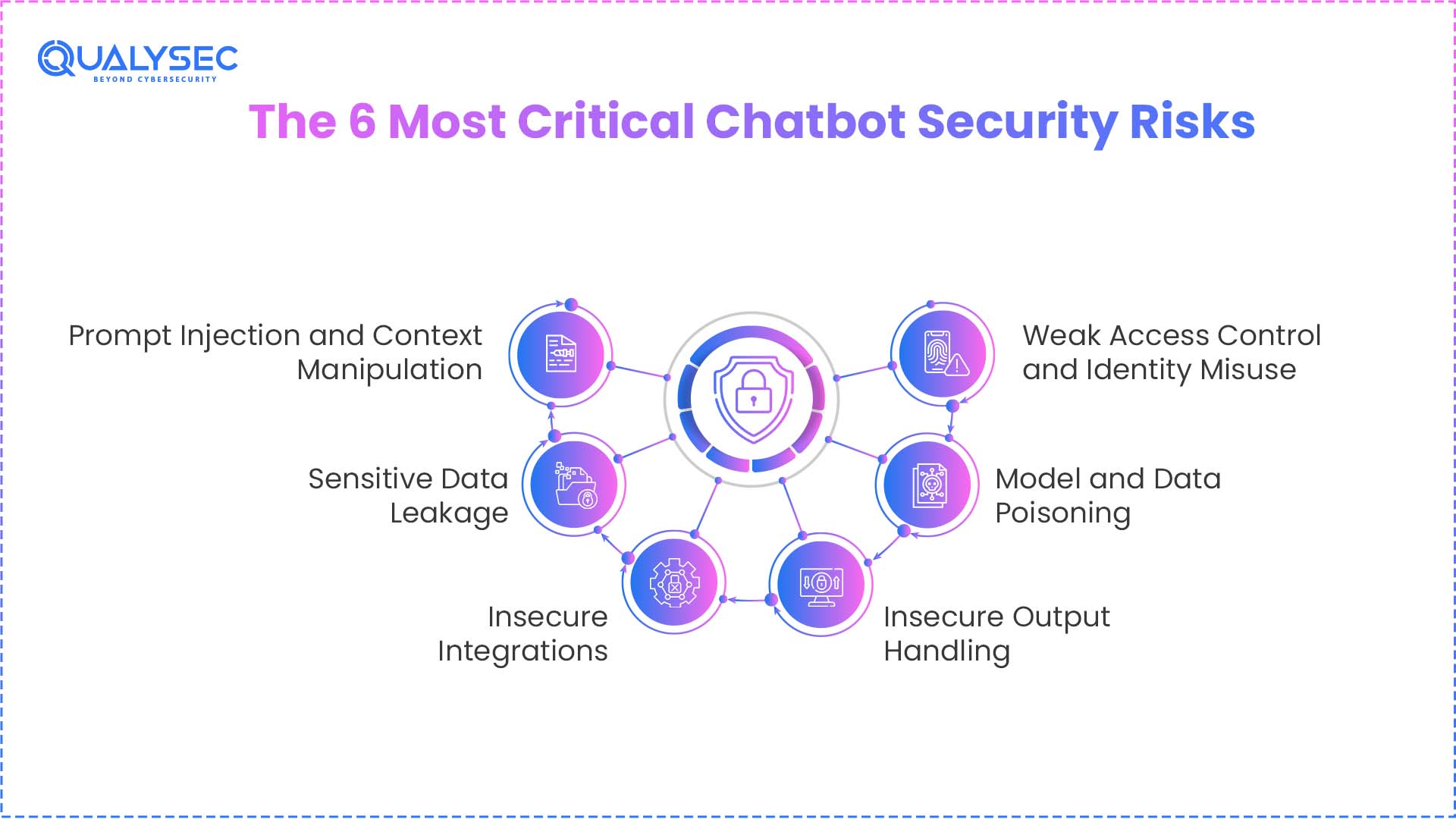

The 6 Most Critical Chatbot Security Risks

These risks don’t come from one place. They show up in how the chatbot takes input and connects to other systems.

1. Prompt Injection and Context Manipulation

Prompt injection attacks are basically ways to steer the chatbot off course.An attacker adds instructions that look harmless but slowly change how the model responds. This can come through a message, but also through a document, external data, or even a plugin response. Nothing looks broken, but the behaviour starts to shift.

Over a few interactions, the chatbot may begin to follow those injected instructions instead of its original rules. It might reveal data it should not, or act on something it was never supposed to do.

2. Sensitive Data Leakage

Chatbots handle more data than people realise. Customer details, internal documents, and even API responses can all pass through a single conversation.

The problem is not just access, it’s exposure. Data can leak through model responses, stored conversation history, logs, or third-party integrations connected in the background. Some of the most serious AI chatbot vulnerabilities show up here, where the system shares more without any clear warning.

There’s also an internal side to this. Employees may paste confidential data into the chatbot without thinking twice, especially during testing or daily use. What this leads to:

- Compliance issues under regulations like GDPR or HIPAA

- Exposure of sensitive business data

- Loss of intellectual property

3. Insecure Integrations

Most chatbot risks don’t start inside the model. They start where the chatbot connects to other systems. APIs, plugins, and data pipelines often carry more access. An API without proper authentication, a plugin with broad permissions, or unsafe data flowing into retrieval systems can quietly create exposure.

Once one of these points is compromised, the impact spreads fast. A plugin can be used to reach internal systems. A weak API setup can allow actions beyond intended limits.

This layer is usually the widest in terms of access, but it is not monitored closely enough.

4. Insecure Output Handling

Sometimes the problem is not the input. It is what the chatbot sends back. In many systems, responses are not just displayed. They can trigger scripts, run queries, or kick off workflows. If that output is used without checks, it creates an opening.

For example, a generated script might get executed in the backend, especially when responses are rendered in web applications without proper sanitisation. This can result in system-level access or injection attacks.

5. Model and Data Poisoning

This one is harder to catch because nothing actually “breaks.” The model keeps working, responses still look fine, but something underneath has changed. That usually starts with the data. This can happen either during training/fine-tuning or through external data sources used in retrieval systems (RAG), where manipulated content influences responses.

If that data is manipulated, the model slowly picks up unwanted patterns. Not enough to raise alarms, but enough to shift behaviour over time. The result can be subtle bias or hidden triggers that only show up in specific situations. To reduce the risk:

- Check and clean data before using it

- Verify the source of any external data

- Watch for unusual changes in how the model responds

6. Weak Access Control and Identity Misuse

If access controls are weak or improperly implemented, the chatbot may not correctly distinguish between users and their permissions. If access is not clearly defined, the system has no way to tell who should see what. One user might end up viewing data meant for someone else, or triggering actions outside their role.

It happens internally. A slightly different question, a follow-up, and the chatbot returns information that was never meant to be exposed. In some cases, it goes further. Requests that look normal can lead to unauthorised API calls or higher-level access without drawing attention.

How Chatbot Attacks Actually Happen

Most of the time, nothing looks wrong at the start. Someone sends a message. The chatbot replies. Then it replies again, but this time with a bit more detail than it should. That detail becomes useful. It gets reused to reach other systems, usually through APIs. From there, actions follow like sending emails, changing records, or triggering transactions.

If you break it down, the flow is simple:

- A message nudges the chatbot in a certain direction

- The response includes information that was not meant to be shared

- That information is used to access connected systems

- Actions happen inside those systems

Each step feels normal on its own. Put together, it turns into a full path from input to impact. Security checks usually look for a single mistake. This kind of activity builds gradually, which is why it often goes unnoticed early on.

How to Secure AI Chatbots

You can’t secure a chatbot by fixing one layer and ignoring the rest. Each part of the system needs its own controls, and gaps start to connect.

Input Security

Everything begins with what users send. If you do not control inputs, the chatbot can produce responses you never intended.

Prompt filtering helps block harmful instructions before they reach the model. Clear context boundaries also matter. Data from external sources should not be treated the same as internal instructions. Keeping that separation reduces the chances of manipulation.

Data Protection

Chatbots handle sensitive data. Customer details and API responses can all pass through a single interaction.

Encrypt data in transit and at rest so unauthorised parties cannot easily access it. Limit how much information the model actually sees. If full data is not required, avoid sending it. Tokenisation helps by replacing sensitive values with placeholders.

These controls also help reduce AI chatbot compliance risks, especially when dealing with regulated data across industries.

Integration Security

Most real exposure comes from connections. APIs should require proper authentication. Plugins should not have broad access by default. Limit permissions based on actual need.

It is also important to validate any external data before it enters the system. Unchecked data can influence how the chatbot behaves.

Output Security

What the chatbot returns can be just as risky as what it receives. Responses should not be used directly without checks. If outputs trigger actions or interact with other systems, validate them first. Apply strict rules for how outputs are processed.

When execution is involved, use controlled environments so unexpected behaviour does not affect core systems.

Access Control

Define roles clearly and restrict what each role can view or perform. Add identity checks where needed, especially for sensitive actions. Without these controls, it becomes easier for users to access information or trigger actions outside their scope.

Monitoring

Log interactions so you can trace what happened if something goes wrong. Watch for patterns that stand out, such as repeated unusual queries or unexpected data access.

Testing

Run regular assessments to identify gaps. Test how the chatbot behaves under unusual or manipulated inputs. Simulating real scenarios helps uncover issues that normal usage does not reveal. As the system evolves, new risks come with it. Continuous testing helps keep those risks under control.

| Feature | Unsecured Chatbot | Secured Chatbot |

| Data Handling | Undetected leaks of sensitive customer data or internal notes via replies | Data is filtered, encrypted, and shared only as needed |

| User Inputs | Accepts all inputs, allowing manipulation via prompt injection | Pre-model input filtering blocks early manipulation |

| Integrations (APIs & Plugins) | Poorly monitored tools with broad access create vulnerabilities, risking system-wide exposure. | All connections use strict permissions, authentication, and regular reviews |

| Testing and Monitoring | Static configurations let problems go unnoticed over time | Frequently tested, monitored, and updated as systems evolve

|

Chat with our intelligent AI Assistant and get tailored insights in seconds.

Enterprise Chatbot Security Best Practices

If your chatbot is part of core operations, security needs to be built in from day one, not added later. Start during development. Define what the chatbot can access, how data moves through it, and where checks are needed before anything goes live.

Do not assume requests are safe just because they come from inside. A zero-trust approach keeps every interaction verified, no matter where it originates.

Set a habit of reviewing the parts that usually get ignored:

- APIs

- Integrations

- Data pipelines

These areas tend to introduce risk quietly, so regular checks make a difference. Testing also needs a different approach. Standard checks are not enough here. You need to see how the chatbot behaves with unexpected inputs and edge cases.

It helps to follow established frameworks. Guidance from OWASP Top 10 for LLM Applications, along with ISO 27001 and SOC 2, gives you a solid structure to work with.

One more thing that gets overlooked is internal use. People often share data with chatbots without thinking twice. A bit of training goes a long way in avoiding that.

Future Risks: Agentic AI and Autonomous Actions

Things change once you allow a chatbot to take action.

Give it access to send emails, trigger payments, or update systems, and it becomes part of how work gets done. At that point, a mistake is not just a bad response. It can lead to something actually happening.

If one agent is compromised, it moves quickly across systems. It can carry out multiple actions in sequence without much resistance. You start seeing situations like:

- Payments are going through without proper checks

- Emails sent with sensitive or incorrect information

- Changes made inside systems without approval

These are the kinds of AI model security risks that become harder to control once actions are involved, not just responses. To keep this under control:

- Keep humans involved in important actions

- Add a verification step before execution

- Set clear limits on what the system is allowed to do

Once actions are involved, control needs to be just as strict as access.

How Qualysec Helps Secure AI Chatbots

Qualysec Technologies focuses on penetration testing for modern systems, including AI-driven chatbots.

Their team tests across web, API, cloud, and AI environments. They combine manual and automated methods to uncover issues that are easy to miss, like hidden vulnerabilities. They help you:

- Identify prompt injection risks

- Test chatbot integrations and APIs

- Detect where data could leak

Testing from an attacker’s point of view, so you see how real exploits would play out.

With 450-plus assets secured and 110-plus clients globally, their work is backed by hands-on experience. You also get: Clear reports, fix recommendations and retesting support

If your chatbot is connected to critical systems, it’s worth checking where the gaps are before they get exploited.

Conclusion

Chatbot security risks are already part of how work gets done now. They handle data, connect with systems, and sometimes take action on their own. Issues usually don’t show up as clear failures. They build slowly through small gaps that are easy to miss in day-to-day use. Keeping things secure means checking how the system behaves and testing it regularly, instead of dealing with them later.

If you want to understand where your chatbot stands today, Qualysec can help you find and fix those gaps before they cause real damage.

Speak directly with Qualysec’s certified professionals to identify vulnerabilities before attackers do.

FAQs

Q.What are the risks of AI chatbot data security?

The biggest problem is that systems expose things without anyone realising it. A chatbot might pull in customer info, internal notes, or data from another system and include it in a reply. Nothing looks wrong on the surface, but the damage has already occurred.

Q.What are the 4 types of AI risk?

You can think of it in four parts. Data is exposing itself or being misused. The model gives wrong or influenced answers. System-level gaps through APIs or integrations. And then, simple human mistakes while using it.

Q.What is ‘Prompt Injection’ in chatbot security?

It’s basically tricking the chatbot through input. Someone phrases a request in a way that makes the system ignore its original instructions and follow new ones hidden in the message.

Q.Can a chatbot leak company secrets through RAG?

Yes, and it doesn’t take anything complex. If the chatbot pulls from internal documents or knowledge bases, it can return sensitive details as part of a normal answer if nothing is filtering that data.

Q.What is ‘Data Poisoning’ in AI models?

This happens when bad or manipulated data gets into the system. The model keeps working, but the answers start shifting. Sometimes slightly wrong, sometimes very specific.

0 Comments